How to Boost App Downloads by A/B Testing Icons

Key Takeaways

- A/B testing can significantly impact app downloads by determining the most effective icon design. The test involves comparing different variations of an app icon and measuring their success in terms of downloads.

- The design and color of an app icon can greatly influence user engagement and subsequent downloads. However, personal preferences or instincts about design can often be misleading, as proven by a case where the least favored design resulted in a 27.1% increase in downloads.

- A/B testing should be an ongoing process, even after identifying a successful design. Continual testing of new variations can further optimize app downloads and ensure that the design remains effective and appealing to the target audience.

How can you tell which app icon will result in the most app store downloads? Answer: simply by looking, you can’t. Even an experienced designer couldn’t answer this question with certainty. There is a solution, however: A/B testing.

A/B testing is not a new concept. However, when trying to increase downloads and conversions, many people make the mistake of revamping the app’s interface while neglecting the first thing users see: the app icon! Plus, all app stores have a dashboard where marketers can measure the success of the different tests.

Read on to find out how A/B testing works, and how it helped us increase our app store downloads by a whopping 34%.

We A/B Tested These 4 Icons

Begin by taking a closer look at these icons:

Can you guess which one resulted in the largest number of app store downloads? Spoiler: it’s #2. I’m going to show you how we reached that conclusion by A/B testing the different concepts.

The Problem

Piano Master 2 is an app where if you press a piano key, a small brick falls over it. By doing this, you play a previously chosen melody. It seems like a game, but it’s actually very useful to those who want to learn how to play a real piano.

This app was once unique, but now it has many rival applications. This is why our customer wanted to create a new icon so that the game could stand out among its competition.

Here’s what the app icon looked like before:

The customer filled out an application form and we agreed to design three unique icons for the Google Play store, and A/B test them to see which resulted in more app downloads.

We began by designing the first iterations of the three unique concepts. Let’s take a look at the brief.

The Project Brief

The first icon concept had to be serious and classical (two-dimensional piano keys with note signs falling over them). The other icons were to be bright, dynamic and somewhat abstract. Also, the customer wanted to have stars on at least one of the icon concepts (because you can see stars in the game when you play the piano). The customer sent us this image as a visual reference:

Step 1: Iteration and Feedback

We sent the customer these six drafts:

The customer liked concepts 5, 4 and 1 (in that order). The only concern was the green bar that we took directly from the game; the customer asked us to make the green bar a little narrower.

After taking the feedback on board, we iterated on the three chosen concepts and they began to look like this:

During our second round of feedback, the customer told us that the word “free” on one of the concepts was unnecessary, as the paid version of the app no longer existed and only the free version remained. We were slightly worried that the experiment wouldn’t be fair if we tested the old icon with the word “free” against our new concept without it. However, the customer insisted that it be removed. The designs were then approved, and here’s what we ended up with after adding some color:

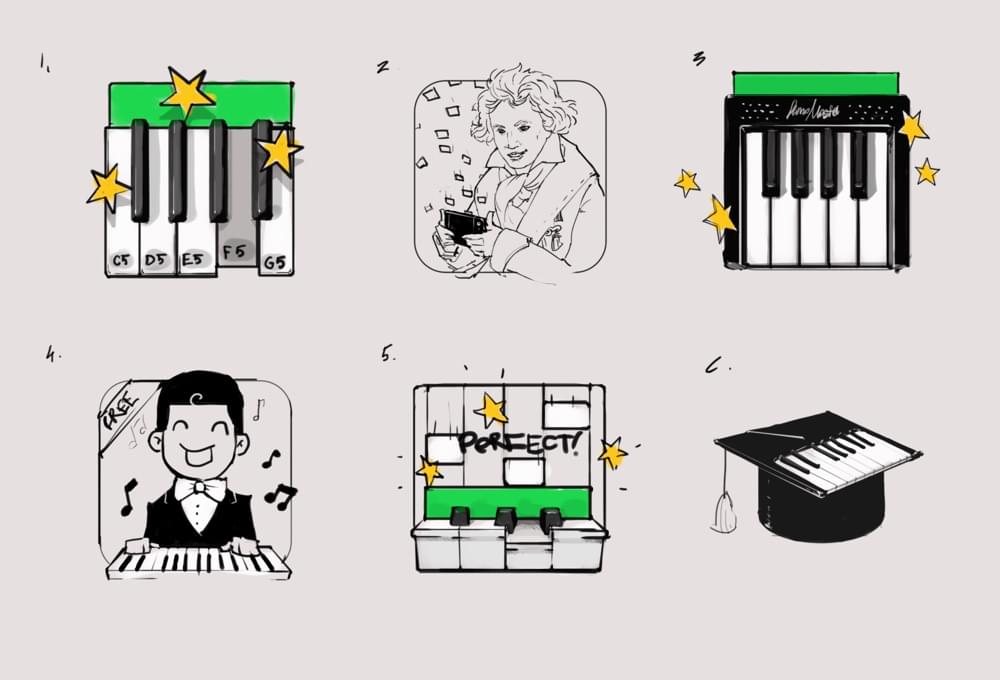

Step 2: First A/B Test (Choosing a Design)

We then tested the three concepts on the Google Play store and found that the winning icon increased the number of app downloads by 27.1%. Surprisingly, this was the concept that the customer liked the least!

From the graph, taken from the client’s dashboard, we also deduced that the second-best performer was the original icon, and we had a sneaking suspicion that it was because of the word “free”. We moved on to our second A/B test nonetheless, putting this suspicion aside for now.

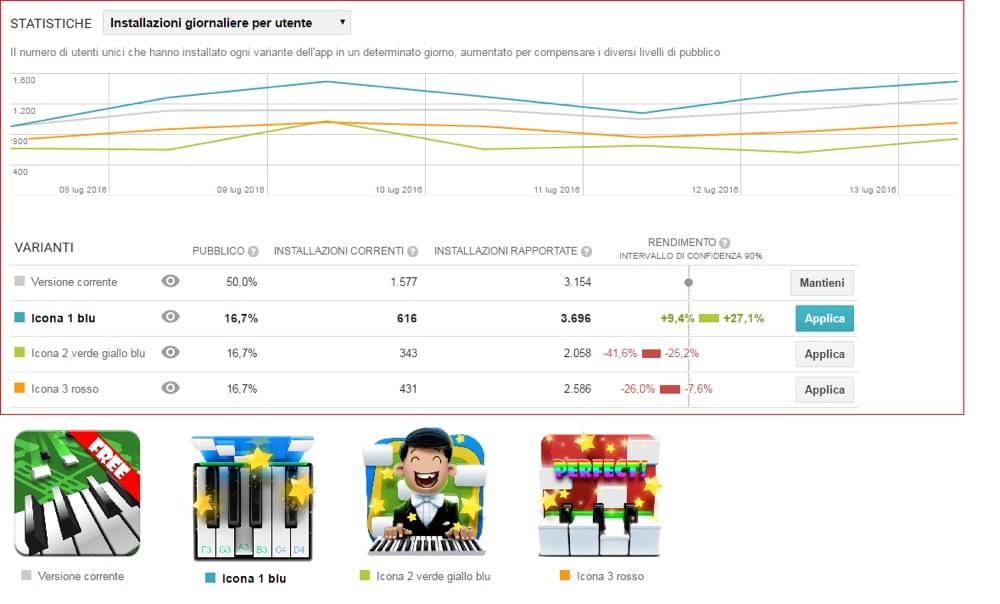
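
For readers who want to reproduce this kind of figure, the sketch below shows how a relative uplift such as the 27.1% reported for the first test is typically computed from install and visitor counts. The function name and every count in it are hypothetical. The article does not publish the raw numbers behind its graph, so the values here were picked only so that the arithmetic lands on a similar percentage.

```python
def relative_uplift(control_installs, control_visitors,
                    variant_installs, variant_visitors):
    """Relative change in conversion rate of a variant versus the control."""
    control_rate = control_installs / control_visitors
    variant_rate = variant_installs / variant_visitors
    return (variant_rate - control_rate) / control_rate


# Hypothetical counts, NOT taken from the Piano Master 2 experiment.
uplift = relative_uplift(control_installs=1_000, control_visitors=25_000,
                         variant_installs=1_271, variant_visitors=25_000)
print(f"Relative uplift: {uplift:.1%}")
```

Note that the uplift is relative to the control's conversion rate, not a difference in percentage points: in this made-up example the rate moves from 4% to about 5.1%.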

Step 3: Second A/B Test (Choosing a Color)

Now that the customer was no longer in doubt about which design to move forward with, we needed to choose a color for it. For the next experiment, we A/B tested the winning concept in three color variations; the customer expected the blue icon to be the winner. Since we still had nagging concerns about the impact of the word “free”, the customer agreed to include a fourth variation that was blue and carried the “free” label.

Much to our surprise, the green (verde) icon without a “free” label took the lead. In fact, the icon with the label appeared to be the least impactful, making our assumptions very wrong (twice!).

Conclusion

The customer’s least favourite icon was the most successful one, and the difference in conversions between the best and worst icons was fourfold. Had the customer simply chosen the icon they liked, without A/B testing the different concepts, they would have ended up with roughly a quarter of the organic downloads achieved by the most successful concept!

So what does this tell us? It tells us that icon design can massively impact the number of app downloads, and that A/B testing is a terrific way to compare multiple variations of a design. A/B testing can be applied to almost any design workflow, so the next time you need to decide between several concepts, don’t pick your favourite idea based on zero reasoning. Use A/B testing to gather solid evidence!

Plus, as we’ve seen today, your instincts can deceive you!

Frequently Asked Questions (FAQs) on Boosting App Downloads by A/B Testing Icons

What is A/B testing in the context of app icons and how does it work?

A/B testing, also known as split testing, is a method of comparing two versions of an app icon to determine which one performs better. It involves showing the two variants (A and B) to similar visitors at the same time. The variant with the better conversion rate (in this case, more app downloads per store visitor) wins. The results are then analyzed statistically to determine whether the difference in performance is significant or just due to chance.
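
To make the "significant or just due to chance" step concrete, here is a minimal sketch of one common approach, a two-proportion z-test, written with the Python standard library only. This is an assumption about method, not a description of what the Google Play dashboard or any particular testing tool does internally, and the visitor and install counts are hypothetical.

```python
import math


def two_proportion_z_test(installs_a, visitors_a, installs_b, visitors_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = installs_a / visitors_a
    rate_b = installs_b / visitors_b
    # Pooled rate under the null hypothesis that both icons convert equally.
    pooled = (installs_a + installs_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF via the error function, then a two-sided p-value.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical numbers, NOT from the article's experiment.
z, p = two_proportion_z_test(installs_a=400, visitors_a=10_000,
                             installs_b=480, visitors_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (0.05 is a conventional threshold) suggests the gap between the two icons is unlikely to be chance alone; a large one means the test has not yet separated them.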

How can A/B testing improve my app downloads?

A/B testing allows you to get more out of your existing traffic. While the cost of acquiring new users can be high, A/B testing lets you increase your app downloads without additional marketing spend. By testing different icons, you can identify which one appeals more to your target audience, leading to more downloads.

What elements of an app icon should I test?

You can test various elements of an app icon including color, shape, imagery, and size. For instance, you can test whether a flat design or a 3D design gets more downloads. You can also test different color combinations to see which one is more appealing to your target audience.

How do I conduct an A/B test for my app icon?

To conduct an A/B test, you need to create two different versions of your app icon. Then, using an A/B testing tool, you can show the two versions to your target audience. The tool will collect data on which version gets more downloads. Once enough data has been collected, you can analyze the results to determine which icon performed better.

How long should I run an A/B test?

The duration of an A/B test can vary depending on the volume of your traffic and the difference in performance between the two versions. However, it’s recommended to run the test for at least two weeks to ensure that you have enough data for a reliable result.
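
How traffic volume and the expected difference translate into a duration can be estimated ahead of time. The sketch below uses the standard normal-approximation sample size formula for comparing two proportions. The function names, the 4% baseline conversion rate, the 20% relative lift and the daily visitor count are all illustrative assumptions, not figures from the article.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per icon to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_needed(n_per_variant, variants, daily_visitors):
    """Days required if daily store visitors are split evenly across variants."""
    return math.ceil(n_per_variant * variants / daily_visitors)


# Hypothetical scenario: 4% baseline, hoping to detect a 20% relative lift.
n = visitors_per_variant(baseline_rate=0.04, relative_lift=0.20)
print(n, "visitors per icon,",
      days_needed(n, variants=2, daily_visitors=1_500), "days")
```

Smaller expected differences push the required sample up sharply, which is why low-traffic apps often need to run well beyond the two-week minimum.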

Can I test more than two versions of an app icon?

Yes, you can test more than two versions of an app icon. This is usually called A/B/n testing. (Multivariate testing, by contrast, tests combinations of several elements at once.) However, keep in mind that the more versions you test, the more traffic you need to get reliable results.
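
One reason extra versions demand more traffic is that every additional comparison is another chance for a false positive. A common, conservative remedy is the Bonferroni correction, sketched below. This is an assumption about how one might analyze such a test by hand; the article does not say which adjustment, if any, a given store console applies, and the function name is hypothetical.

```python
def per_comparison_alpha(overall_alpha, num_variants):
    """Bonferroni-adjusted significance level when each new icon is compared with one control."""
    comparisons = num_variants - 1  # the control is not compared with itself
    if comparisons < 1:
        raise ValueError("need a control plus at least one variant")
    return overall_alpha / comparisons


# Hypothetical setup: the current icon plus three new designs.
print(per_comparison_alpha(0.05, num_variants=4))
```

A stricter threshold per comparison means each icon needs more visitors to reach significance, on top of the traffic already being split more ways.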

What should I do after an A/B test?

After an A/B test, analyze the results to understand which version of your app icon performed better. If there’s a clear winner, consider implementing that design. However, A/B testing should be an ongoing process. Even if you find a winner, continue testing new variations to optimize your app downloads.

Can A/B testing negatively impact my app downloads?

If done correctly, A/B testing should not negatively impact your app downloads. However, if you implement a version that performs worse based on unreliable results, it could lead to fewer downloads. That’s why it’s important to run the test for a sufficient duration and analyze the results carefully.

Do I need any technical skills to conduct an A/B test?

While understanding the basics of A/B testing can be helpful, you don’t necessarily need any technical skills. There are various A/B testing tools available that make the process easy and straightforward.

Can I A/B test other elements of my app?

Yes, besides the app icon, you can also A/B test other elements of your app such as the app description, screenshots, and even the app interface. This can help you optimize the overall user experience and increase your app downloads.

Roman is the CEO of IconDesignLAB and an entrepreneur with experience in icon design, web, UX/UI and mobile, as well as 12 years of experience in e-commerce, e-marketing, software development and software sales.

Published in Android, App Development, iOS, Mobile, Mobile Web Development, Tools & Libraries · August 7, 2015