Data Structures for PHP Devs: Stacks and Queues

A data structure, or abstract data type (ADT), is a model that is defined by a collection of operations that can be performed on it and is limited by the constraints on the effects of those operations. It creates a wall between what can be done to the underlying data and how it is to be done.

Most of us are familiar with stacks and queues in normal everyday usage, but what do supermarket queues and vending machines have to do with data structures? Let’s find out. In this article I’ll introduce you to two basic abstract data types – stack and queue – which have their origins in everyday usage.

Key Takeaways

- Abstract Data Types (ADTs) are models defined by a set of operations that can be performed on them. Stacks and queues are basic ADTs with origins in everyday usage. In computer science, a stack is a sequential collection where the last object placed is the first removed (LIFO), while a queue operates on a first-in, first-out basis (FIFO).

- A stack can be implemented using arrays, as they already provide push and pop operations. The basic operations defining a stack include init (create the stack), push (add an item to the top), pop (remove the last item added), top (look at the item on top without removing it), and isEmpty (return whether the stack contains no more items).

- The SPL extension in PHP provides a set of standard data structures, including the SplStack class. The SplStack class, implemented as a doubly-linked list, provides the capacity to implement a traversable stack. The ReadingList class, implemented as an SplStack, can traverse the stack forward (top-down) and backward (bottom-up).

- A queue, another abstract data type, operates on a first-in, first-out basis (FIFO). The basic operations defining a queue include init (create the queue), enqueue (add an item to the end), dequeue (remove an item from the front), and isEmpty (return whether the queue contains no more items). The SplQueue class in PHP, also implemented using a doubly-linked list, allows for the implementation of a queue.

Stacks

In common usage, a stack is a pile of objects which are typically arranged in layers – for example, a stack of books on your desk, or a stack of trays in the school cafeteria. In computer science parlance, a stack is a sequential collection with a particular property: the last object placed on the stack will be the first object removed. This property is commonly referred to as last in, first out, or LIFO. Candy, chip, and cigarette vending machines operate on the same principle; the last item loaded in the rack is dispensed first.

In abstract terms, a stack is a linear list of items in which all additions to (a “push”) and deletions from (a “pop”) the list are restricted to one end – defined as the “top” (of the stack). The basic operations which define a stack are:

- init – create the stack.

- push – add an item to the top of the stack.

- pop – remove the last item added to the top of the stack.

- top – look at the item on the top of the stack without removing it.

- isEmpty – return whether the stack contains no more items.

A stack can also be implemented to have a maximum capacity. If a push is attempted when the stack is full, i.e. there are no free slots left to accept the new item, the stack is said to overflow, hence the phrase “stack overflow”. Likewise, if a pop operation is attempted on an empty stack, a “stack underflow” occurs.

Knowing that our stack is defined by the LIFO property and a number of basic operations, notably push and pop, we can easily implement a stack using PHP arrays, which already support push and pop operations through array_push() and array_pop().

Here’s what our simple stack looks like:

```php
<?php

class ReadingList
{
    protected $stack;
    protected $limit;

    public function __construct($limit = 10)
    {
        // initialize the stack
        $this->stack = array();
        // stack can only contain this many items
        $this->limit = $limit;
    }

    public function push($item)
    {
        // trap for stack overflow
        if (count($this->stack) < $this->limit) {
            // prepend item to the start of the array
            array_unshift($this->stack, $item);
        } else {
            throw new RuntimeException('Stack is full!');
        }
    }

    public function pop()
    {
        if ($this->isEmpty()) {
            // trap for stack underflow
            throw new RuntimeException('Stack is empty!');
        } else {
            // pop item from the start of the array
            return array_shift($this->stack);
        }
    }

    public function top()
    {
        // trap for stack underflow: there is nothing to look at
        if ($this->isEmpty()) {
            throw new RuntimeException('Stack is empty!');
        }
        // the first element of the array is always the top of the stack
        return $this->stack[0];
    }

    public function isEmpty()
    {
        return empty($this->stack);
    }
}
```

In this example, I’ve used array_unshift() and array_shift(), rather than array_push() and array_pop(), so that the first element of the array is always the top of the stack. You could use array_push() and array_pop() to maintain semantic consistency, in which case the Nth (last) element of the array becomes the top. From the caller’s point of view it makes no difference either way, since the whole purpose of an abstract data type is to abstract the manipulation of the data from its actual implementation. It can matter for performance, though: array_unshift() and array_shift() re-index the whole array on every call, whereas array_push() and array_pop() only touch its end.
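For comparison, here is a minimal sketch of the array_push()/array_pop() variant mentioned above. The class name ReadingListEnd is hypothetical; the overflow and underflow checks mirror the ReadingList class, but the end of the array is treated as the top, so no re-indexing happens on push or pop.

```php
<?php

// Hypothetical variant of ReadingList: the END of the array is the top.
class ReadingListEnd
{
    protected $stack = array();
    protected $limit;

    public function __construct($limit = 10)
    {
        // stack can only contain this many items
        $this->limit = $limit;
    }

    public function push($item)
    {
        // trap for stack overflow
        if (count($this->stack) >= $this->limit) {
            throw new RuntimeException('Stack is full!');
        }
        // append item to the end of the array (no re-indexing needed)
        array_push($this->stack, $item);
    }

    public function pop()
    {
        // trap for stack underflow
        if ($this->isEmpty()) {
            throw new RuntimeException('Stack is empty!');
        }
        // remove and return the last element
        return array_pop($this->stack);
    }

    public function top()
    {
        if ($this->isEmpty()) {
            throw new RuntimeException('Stack is empty!');
        }
        // end() returns the last element without removing it
        return end($this->stack);
    }

    public function isEmpty()
    {
        return empty($this->stack);
    }
}

$books = new ReadingListEnd(2);
$books->push('A Game of Thrones');
$books->push('A Clash of Kings');
echo $books->top(), "\n"; // A Clash of Kings
echo $books->pop(), "\n"; // A Clash of Kings
echo $books->pop(), "\n"; // A Game of Thrones
```

Callers see exactly the same LIFO behavior as with the array_unshift()/array_shift() version; only the internal layout differs.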

Let’s add some items to the stack:

```php
<?php

$myBooks = new ReadingList();

$myBooks->push('A Dream of Spring');
$myBooks->push('The Winds of Winter');
$myBooks->push('A Dance with Dragons');
$myBooks->push('A Feast for Crows');
$myBooks->push('A Storm of Swords');
$myBooks->push('A Clash of Kings');
$myBooks->push('A Game of Thrones');
```

To remove some items from the stack:

```php
<?php

echo $myBooks->pop(); // outputs 'A Game of Thrones'
echo $myBooks->pop(); // outputs 'A Clash of Kings'
echo $myBooks->pop(); // outputs 'A Storm of Swords'
```

Let’s see what’s at the top of the stack:

```php
<?php

echo $myBooks->top(); // outputs 'A Feast for Crows'
```

What if we remove it?

```php
<?php

echo $myBooks->pop(); // outputs 'A Feast for Crows'
```

And if we add a new item?

```php
<?php

$myBooks->push('The Armageddon Rag');
echo $myBooks->pop(); // outputs 'The Armageddon Rag'
```

You can see the stack operates on a last in, first out basis: whatever is added to the stack last is the first to be removed. If you keep popping items after the stack is empty, you’ll get a stack underflow runtime exception:

```
PHP Fatal error: Uncaught exception 'RuntimeException' with message 'Stack is empty!' in /home/ignatius/Data Structures/code/array_stack.php:33
Stack trace:
#0 /home/ignatius/Data Structures/code/example.php(31): ReadingList->pop()
#1 /home/ignatius/Data Structures/code/array_stack.php(54): include('/home/ignatius/...')
#2 {main}
thrown in /home/ignatius/Data Structures/code/array_stack.php on line 33
```

Oh, hello… PHP has kindly provided a stack trace showing the program’s call stack leading up to the exception!
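Rather than letting the exception go uncaught, calling code can either check isEmpty() before popping or catch the RuntimeException. A minimal sketch of both options follows; to keep the block self-contained it declares a trimmed-down copy of the array-based stack under the hypothetical name ArrayStack (no capacity limit, same underflow check and message).

```php
<?php

// Trimmed-down, hypothetical copy of the array-backed ReadingList (no capacity limit).
class ArrayStack
{
    protected $stack = array();

    public function push($item)
    {
        array_unshift($this->stack, $item);
    }

    public function pop()
    {
        if ($this->isEmpty()) {
            // trap for stack underflow, as in ReadingList
            throw new RuntimeException('Stack is empty!');
        }
        return array_shift($this->stack);
    }

    public function isEmpty()
    {
        return empty($this->stack);
    }
}

$stack = new ArrayStack();
$stack->push('A Game of Thrones');
$stack->push('A Clash of Kings');

// Option 1: drain the stack safely by checking isEmpty() first
$drained = array();
while (!$stack->isEmpty()) {
    $drained[] = $stack->pop();
}

// Option 2: attempt the pop and handle the underflow explicitly
try {
    $stack->pop();
} catch (RuntimeException $e) {
    echo 'Underflow: ' . $e->getMessage() . "\n"; // Underflow: Stack is empty!
}
```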

The SplStack

The SPL extension provides a set of standard data structures, including the SplStack class (PHP 5 >= 5.3.0). We can implement the same object much more tersely by extending SplStack, as follows:

```php
<?php

class ReadingList extends SplStack
{
}
```

The SplStack class implements a few more methods than we originally defined. This is because SplStack extends SplDoublyLinkedList, a doubly-linked list, which provides the capacity to implement a traversable stack. Note that, unlike our array-based version, SplStack has no built-in capacity limit.

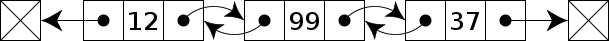
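A short usage sketch of the SplStack-based ReadingList, assuming the empty subclass shown above (redeclared here so the block runs on its own). push(), pop(), top(), isEmpty() and count() all come from SplDoublyLinkedList; as with the array version, pop() and top() throw a RuntimeException on an empty stack, although the exception message differs from our 'Stack is empty!'.

```php
<?php

class ReadingList extends SplStack
{
}

$myBooks = new ReadingList();
$myBooks->push('A Game of Thrones');
$myBooks->push('A Clash of Kings');
$myBooks->push('A Storm of Swords');

echo $myBooks->top(), "\n";    // A Storm of Swords (peeked, not removed)
echo count($myBooks), "\n";    // 3
echo $myBooks->pop(), "\n";    // A Storm of Swords
echo $myBooks->pop(), "\n";    // A Clash of Kings
echo $myBooks->pop(), "\n";    // A Game of Thrones
var_dump($myBooks->isEmpty()); // bool(true)

// Underflow: SplStack also throws a RuntimeException when empty.
try {
    $myBooks->pop();
} catch (RuntimeException $e) {
    echo 'Caught: ', get_class($e), "\n"; // Caught: RuntimeException
}
```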

A linked list, which is itself another abstract data type, is a linear collection of objects (nodes) used to represent a particular sequence, where each node in the collection maintains a pointer to the next node in the collection. In its simplest form, each node holds a value and a single pointer to its successor, and the last node’s pointer marks the end of the list.
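To make the idea concrete, here is a minimal sketch of a singly linked list in PHP. The Node and LinkedList classes are hypothetical illustrations, not part of SPL: each node stores a value and a reference to the next node, and a null reference marks the end of the list.

```php
<?php

// Hypothetical node: a value plus a pointer to the next node (null = end of list).
class Node
{
    public $value;
    public $next = null;

    public function __construct($value)
    {
        $this->value = $value;
    }
}

// Hypothetical singly linked list that appends at the tail.
class LinkedList
{
    private $head = null;
    private $tail = null;

    public function append($value)
    {
        $node = new Node($value);
        if ($this->head === null) {
            // the first node is both head and tail
            $this->head = $node;
        } else {
            // link the old tail to the new node
            $this->tail->next = $node;
        }
        $this->tail = $node;
    }

    public function toArray()
    {
        $values = array();
        // follow the next pointers until we reach null
        for ($node = $this->head; $node !== null; $node = $node->next) {
            $values[] = $node->value;
        }
        return $values;
    }
}

$list = new LinkedList();
$list->append('A Game of Thrones');
$list->append('A Clash of Kings');
$list->append('A Storm of Swords');
print_r($list->toArray());
```

Because each node only knows its successor, this list can only be walked from head to tail.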

In a doubly-linked list, each node has two pointers: one to the next node and one to the previous node in the collection. This type of data structure allows for traversal in both directions.

Nodes marked with a cross (X) denote a null or sentinel node, which designates the end of the traversal path (i.e. the path terminator).
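A doubly-linked list can be sketched in PHP by giving each node both a forward and a backward pointer. The DoublyNode and DoublyLinkedList classes below are hypothetical (SplDoublyLinkedList already provides a production version); a null pointer at either end plays the role of the terminator.

```php
<?php

// Hypothetical node with pointers in both directions (null marks either end).
class DoublyNode
{
    public $value;
    public $prev = null;
    public $next = null;

    public function __construct($value)
    {
        $this->value = $value;
    }
}

// Hypothetical doubly linked list supporting traversal in both directions.
class DoublyLinkedList
{
    private $head = null;
    private $tail = null;

    public function push($value)
    {
        $node = new DoublyNode($value);
        if ($this->tail === null) {
            $this->head = $node;
        } else {
            // wire the new node and the old tail to each other
            $node->prev = $this->tail;
            $this->tail->next = $node;
        }
        $this->tail = $node;
    }

    // Walk head -> tail using the next pointers.
    public function forward()
    {
        $values = array();
        for ($n = $this->head; $n !== null; $n = $n->next) {
            $values[] = $n->value;
        }
        return $values;
    }

    // Walk tail -> head using the prev pointers.
    public function backward()
    {
        $values = array();
        for ($n = $this->tail; $n !== null; $n = $n->prev) {
            $values[] = $n->value;
        }
        return $values;
    }
}

$list = new DoublyLinkedList();
foreach (array('A', 'B', 'C') as $v) {
    $list->push($v);
}
echo implode(' ', $list->forward()), "\n";  // A B C
echo implode(' ', $list->backward()), "\n"; // C B A
```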

Since ReadingList is implemented as an SplStack, we can traverse the stack forward (top-down) and backward (bottom-up). The default traversal mode for SplStack is LIFO:

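```php
<?php

// top-down traversal
// default traversal mode is SplDoublyLinkedList::IT_MODE_LIFO|SplDoublyLinkedList::IT_MODE_KEEP
foreach ($myBooks as $book) {
    echo $book . "\n"; // prints last item first!
}
```

To traverse the stack in reverse order, you might expect to simply set the iterator mode to FIFO (first in, first out). However, SplStack fixes its iteration direction to LIFO: calling setIteratorMode() with SplDoublyLinkedList::IT_MODE_FIFO throws a RuntimeException, and only the keep/delete behavior (IT_MODE_KEEP or IT_MODE_DELETE) can be changed. A bottom-up traversal can instead copy the items into an array and reverse it:

```php
<?php

// bottom-up traversal
// iterator_to_array() collects the items top-down (LIFO);
// array_reverse() then puts the first added item first
foreach (array_reverse(iterator_to_array($myBooks, false)) as $book) {
    echo $book . "\n"; // prints first added item first
}
```

Queues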

If you’ve ever been in a line at the supermarket checkout, then you’ll know that the first person in line gets served first. In computer terminology, a queue is another abstract data type, which operates on a first in first out basis, or FIFO. Inventory is also managed on a FIFO basis, particularly if such items are of a perishable nature.

The basic operations which define a queue are:

- init – create the queue.

- enqueue – add an item to the “end” (tail) of the queue.

- dequeue – remove an item from the “front” (head) of the queue.

- isEmpty – return whether the queue contains no more items.
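Before reaching for SPL, these operations can be sketched with a plain PHP array, in the same spirit as the array-based stack earlier. This is a minimal sketch; the class name ArrayQueue is hypothetical. enqueue() appends with array_push() and dequeue() removes from the front with array_shift(), which re-indexes the array on every call, so this approach suits small queues better than large ones.

```php
<?php

// Hypothetical array-backed queue: index 0 is the front (head).
class ArrayQueue
{
    // init: create an empty queue
    protected $queue = array();

    public function enqueue($item)
    {
        // add an item to the end (tail)
        array_push($this->queue, $item);
    }

    public function dequeue()
    {
        // trap for underflow
        if ($this->isEmpty()) {
            throw new RuntimeException('Queue is empty!');
        }
        // remove the item at the front (head)
        return array_shift($this->queue);
    }

    public function isEmpty()
    {
        return empty($this->queue);
    }
}

$line = new ArrayQueue();
$line->enqueue('A Game of Thrones');
$line->enqueue('A Clash of Kings');
$line->enqueue('A Storm of Swords');

$served = array();
while (!$line->isEmpty()) {
    $served[] = $line->dequeue(); // first in, first out
}
echo implode("\n", $served), "\n";
```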

SplQueue also extends SplDoublyLinkedList, so it inherits top() and pop() as well, but their meaning is reversed in this context: both operate on the end (tail) of the queue rather than on the front (head). Let’s redefine our ReadingList class as a queue:

```php
<?php

class ReadingList extends SplQueue
{
}

$myBooks = new ReadingList();

// add some items to the queue
$myBooks->enqueue('A Game of Thrones');
$myBooks->enqueue('A Clash of Kings');
$myBooks->enqueue('A Storm of Swords');
```

SplDoublyLinkedList also implements the ArrayAccess interface, so you can add items to both SplQueue and SplStack as array elements. Assigning to an empty index appends the item to the end of the list, just like push():

```php
<?php

$myBooks[] = 'A Feast for Crows';
$myBooks[] = 'A Dance with Dragons';
```

To remove items from the front of the queue:

```php
<?php

echo $myBooks->dequeue() . "\n"; // outputs 'A Game of Thrones'
echo $myBooks->dequeue() . "\n"; // outputs 'A Clash of Kings'
```

enqueue() is an alias for push(), but note that dequeue() is not an alias for pop(); it is an alias for shift(), which removes the item from the front (head). pop() has a different meaning and function in the context of a queue: had we used pop() here, it would have removed the item from the end (tail) of the queue, which violates the FIFO rule.

Similarly, to see what’s at the front (head) of the queue, we have to use bottom() instead of top():

```php
<?php

echo $myBooks->bottom() . "\n"; // outputs 'A Storm of Swords'
```

Summary

In this article, you’ve seen how the stack and queue abstract data types are used in programming. These data structures are abstract in that they are defined by the operations that can be performed on them, thereby creating a wall between their implementation and the underlying data.

These structures are also constrained by the effect of such operations: you can only add items to, or remove items from, the top of a stack, and you can only remove items from the front of a queue or add items to its rear.

Image by Alexandre Dulaunoy via Flickr

Frequently Asked Questions (FAQs) about PHP Data Structures

What are the different types of data structures in PHP?

PHP provides several built-in types for holding data, including arrays, objects, and resources. Arrays are the most common and versatile data structures in PHP. They can hold any type of data, including other arrays, and can be indexed or associative. Objects in PHP are instances of classes, which can have properties and methods. Resources are special variables that hold references to external resources, such as open files or database connections. Beyond these, the SPL extension adds ready-made structures such as SplStack, SplQueue, SplDoublyLinkedList, SplHeap, SplPriorityQueue, SplFixedArray, and SplObjectStorage.

How can I implement a stack in PHP?

A stack is a type of data structure that follows the LIFO (Last In, First Out) principle. In PHP, you can use the SplStack class to implement a stack. You can push elements onto the stack using the push() method, and pop elements off the stack using the pop() method.

What is the difference between arrays and objects in PHP?

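Arrays and objects in PHP are both types of data structures, but they have some key differences. A PHP array is an ordered map from integer or string keys to values, while an object is an instance of a class and can have properties and methods. Arrays can be indexed or associative, while object property names are always strings. Arrays are also assigned and passed by value (as a copy), whereas objects are assigned and passed by handle, so two variables can refer to the same object. Arrays are more versatile and easier to use, while objects provide more structure and encapsulation.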
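One practical difference shows up on assignment: assigning an array gives you an independent copy, while assigning an object only copies a handle to the same object. A small illustration, using stdClass and illustrative values:

```php
<?php

// Arrays: $b receives a copy, so changing it leaves $a untouched.
$a = array('title' => 'A Game of Thrones');
$b = $a;
$b['title'] = 'A Clash of Kings';

// Objects: $p and $o are handles to the SAME object.
$o = new stdClass();
$o->title = 'A Game of Thrones';
$p = $o;
$p->title = 'A Clash of Kings';

echo $a['title'], "\n"; // A Game of Thrones (original array unchanged)
echo $o->title, "\n";   // A Clash of Kings (shared object modified)
```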

How can I use data structures to improve the performance of my PHP code?

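Using the right data structure can significantly improve the performance of your PHP code. For example, if you need to store a large number of elements and frequently check whether a specific element is present, in_array() has to compare against each element in turn, whereas storing the elements as array keys and testing them with isset() uses PHP’s internal hash table and is much faster for large collections. Similarly, if you need to frequently add and remove elements at both ends, a deque such as SplDoublyLinkedList can be more efficient than an array, because array_shift() and array_unshift() re-index the whole array on every call.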
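PHP arrays are hash tables keyed by their keys, so one common way to get fast set-like membership tests is to turn the values into keys with array_flip() and then use isset(). A small sketch with illustrative data; note that array_flip() only works when the values are strings or integers, and the speed difference only matters for large collections with many lookups.

```php
<?php

$titles = array('A Game of Thrones', 'A Clash of Kings', 'A Storm of Swords');

// Linear scan: in_array() compares against each element in turn.
$found = in_array('A Clash of Kings', $titles, true);

// Hash lookup: flip values into keys once, then test keys with isset().
$titleSet = array_flip($titles);
$foundFast = isset($titleSet['A Clash of Kings']);
$missing = isset($titleSet['The Winds of Winter']);

var_dump($found, $foundFast, $missing); // true, true, false (one per line)
```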

What is the SplDoublyLinkedList class in PHP?

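The SplDoublyLinkedList class in PHP is a data structure that implements a doubly linked list. It allows you to add, remove, and inspect elements at both ends of the list in constant time: push() and pop() work on the end, unshift() and shift() work on the beginning, and top() and bottom() let you look at either end without removing anything. It also implements the Iterator, Countable, and ArrayAccess interfaces, so you can loop over it with foreach, count it with count(), and access elements by index; it does not provide built-in sorting.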
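A short sketch of those operations on a plain SplDoublyLinkedList, with illustrative single-letter values. It also shows that, unlike SplStack and SplQueue, a plain SplDoublyLinkedList lets you switch the iteration direction with setIteratorMode().

```php
<?php

$list = new SplDoublyLinkedList();

$list->push('B');     // add at the end:       B
$list->push('C');     // add at the end:       B C
$list->unshift('A');  // add at the beginning: A B C

echo $list->bottom(), "\n"; // A (peek at the beginning)
echo $list->top(), "\n";    // C (peek at the end)
echo count($list), "\n";    // 3

// A plain SplDoublyLinkedList may change direction; iterate end-to-beginning.
$list->setIteratorMode(SplDoublyLinkedList::IT_MODE_LIFO);
$reversed = iterator_to_array($list, false); // C, B, A
$list->setIteratorMode(SplDoublyLinkedList::IT_MODE_FIFO);

$first = $list->shift(); // 'A' (remove from the beginning)
$last = $list->pop();    // 'C' (remove from the end)

foreach ($list as $index => $value) {
    echo "$index: $value\n"; // 0: B
}
```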

How can I implement a queue in PHP?

A queue is a type of data structure that follows the FIFO (First In, First Out) principle. In PHP, you can use the SplQueue class to implement a queue. You can enqueue elements onto the queue using the enqueue() method, and dequeue elements off the queue using the dequeue() method.

What is the difference between a stack and a queue in PHP?

A stack and a queue are both types of data structures, but they have a key difference in how elements are added and removed. A stack follows the LIFO (Last In, First Out) principle, meaning that the last element added is the first one to be removed. A queue, on the other hand, follows the FIFO (First In, First Out) principle, meaning that the first element added is the first one to be removed.
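Feeding the same items, in the same order, into an SplStack and an SplQueue makes the difference visible. A minimal sketch with illustrative values:

```php
<?php

$stack = new SplStack();
$queue = new SplQueue();

// feed both structures the same items in the same order
foreach (array('first', 'second', 'third') as $item) {
    $stack->push($item);
    $queue->enqueue($item);
}

$fromStack = $stack->pop();     // 'third' (LIFO: last in, first out)
$fromQueue = $queue->dequeue(); // 'first' (FIFO: first in, first out)

echo $fromStack, ' / ', $fromQueue, "\n"; // third / first
```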

How can I use the SplHeap class in PHP?

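SplHeap is an abstract class in PHP’s SPL that implements a heap: a binary tree in which every parent node is ordered relative to its children (in a min-heap each parent is less than or equal to its children; in a max-heap, greater than or equal). To use SplHeap directly, you extend it and implement its compare() method; for the common cases, PHP also provides the ready-made SplMinHeap and SplMaxHeap classes. Elements are added with insert(), the top element can be inspected with top(), and extract() removes and returns it.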
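A minimal sketch of a custom heap, assuming a hypothetical PriorityReadingList in which each entry is an array with illustrative 'title' and 'priority' fields. SplHeap keeps the element that compare() ranks as greatest at the top, so comparing the arguments in reverse order makes the lowest priority number come out first. The last few lines show the built-in SplMinHeap for plain values.

```php
<?php

// Hypothetical heap: the item with the LOWEST priority number comes out first.
class PriorityReadingList extends SplHeap
{
    // SplHeap keeps the element that compares as "greatest" at the top.
    // Comparing $b to $a (instead of $a to $b) inverts the order, so the
    // smallest priority number is treated as the greatest.
    protected function compare($a, $b): int
    {
        return $b['priority'] <=> $a['priority'];
    }
}

$list = new PriorityReadingList();
$list->insert(array('title' => 'A Clash of Kings', 'priority' => 2));
$list->insert(array('title' => 'A Storm of Swords', 'priority' => 3));
$list->insert(array('title' => 'A Game of Thrones', 'priority' => 1));

// extract() removes and returns the top element each time
$order = array();
while (!$list->isEmpty()) {
    $order[] = $list->extract()['title'];
}
echo implode(', ', $order), "\n"; // A Game of Thrones, A Clash of Kings, A Storm of Swords

// For plain values, the built-in SplMinHeap / SplMaxHeap need no subclass.
$min = new SplMinHeap();
foreach (array(5, 1, 4) as $n) {
    $min->insert($n);
}
echo $min->top(), "\n"; // 1
```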

What are the benefits of using data structures in PHP?

Using data structures in PHP can provide several benefits. They can help you organize your data in a more efficient and logical way, which can make your code easier to understand and maintain. They can also improve the performance of your code, especially when dealing with large amounts of data or complex operations.

How can I implement a binary tree in PHP?

A binary tree is a type of data structure where each node has at most two children, referred to as the left child and the right child. In PHP, you can implement a binary tree using a class that has properties for the value of the node and the left and right children. You can then use methods to add, remove, and search for nodes in the tree.
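A minimal sketch of that approach, assuming the tree is a binary search tree (smaller values go left, larger or equal values go right), which the answer above does not specify. The TreeNode and BinarySearchTree classes are hypothetical, and removal is omitted to keep the example short.

```php
<?php

// Hypothetical node: a value plus left and right children (null when absent).
class TreeNode
{
    public $value;
    public $left = null;
    public $right = null;

    public function __construct($value)
    {
        $this->value = $value;
    }
}

// Hypothetical binary search tree: smaller values go left, others go right.
class BinarySearchTree
{
    private $root = null;

    public function insert($value)
    {
        $node = new TreeNode($value);
        if ($this->root === null) {
            $this->root = $node;
            return;
        }
        $current = $this->root;
        while (true) {
            if ($value < $current->value) {
                if ($current->left === null) {
                    $current->left = $node;
                    return;
                }
                $current = $current->left;
            } else {
                if ($current->right === null) {
                    $current->right = $node;
                    return;
                }
                $current = $current->right;
            }
        }
    }

    public function contains($value)
    {
        $current = $this->root;
        while ($current !== null) {
            if ($value === $current->value) {
                return true;
            }
            // descend left or right depending on the comparison
            $current = $value < $current->value ? $current->left : $current->right;
        }
        return false;
    }

    // In-order traversal visits the values in sorted order.
    public function inOrder()
    {
        $values = array();
        $this->walk($this->root, $values);
        return $values;
    }

    private function walk($node, array &$values)
    {
        if ($node === null) {
            return;
        }
        $this->walk($node->left, $values);
        $values[] = $node->value;
        $this->walk($node->right, $values);
    }
}

$tree = new BinarySearchTree();
foreach (array(50, 30, 70, 20, 40, 60) as $n) {
    $tree->insert($n);
}
echo implode(', ', $tree->inOrder()), "\n"; // 20, 30, 40, 50, 60, 70
var_dump($tree->contains(40)); // bool(true)
var_dump($tree->contains(45)); // bool(false)
```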

Ignatius Teo is a freelance PHP programmer and has been developing n-tier web applications since 1998. In his spare time he enjoys dabbling with Ruby, martial arts, and playing Urban Terror.