This article is part of a web development series from Microsoft. Thank you for supporting the partners who make SitePoint possible.

ES6 brings the biggest changes to JavaScript in a long time, including several new features for managing large and complex codebases. These features, primarily the import and export keywords, are collectively known as modules.

If you’re new to JavaScript, especially if you come from another language that already had built-in support for modularity (variously named modules, packages, or units), the design of ES6 modules may look strange. Much of the design emerged from solutions the JavaScript community devised over the years to make up for that lack of built-in support.

We’ll look at which challenges the JavaScript community overcame with each solution, and which remained unsolved. Finally, we’ll see how those solutions influenced ES6 module design, and how ES6 modules position themselves with an eye towards the future.

Key Takeaways

- ES6 modules introduce import and export keywords, revolutionizing JavaScript’s approach to large and complex codebases by building on historical solutions to modularity challenges.

- The evolution of JavaScript modules from global scope patterns like the Object Literal and IIFE/Revealing Module patterns to more structured systems such as CommonJS and AMD reflects a broader shift towards efficient, encapsulated code management.

- CommonJS, initially designed for server-side JavaScript, influenced the syntax of ES6 modules but lacked support for asynchronous loading, which is crucial for performance in web environments.

- AMD (Asynchronous Module Definition) addressed the asynchronous loading limitations of CommonJS, setting the stage for the syntax and functionality improvements found in ES6 modules.

- Despite the advancements in module technology with ES6, practical implementation challenges persist, particularly around browser support and the need for transpilation and asynchronous loading tools.

First the <script> Tag, Then Controversy

At first, HTML limited itself to text-oriented elements, which were processed in a very static manner. Mosaic, one of the most popular of the early browsers, wouldn’t display anything until all HTML had finished downloading. On an early ’90s dial-up connection, this could leave a user staring at a blank screen for literally minutes.

Netscape Navigator exploded in popularity almost as soon as it appeared in the mid ’90s. Like a lot of current disruptive innovators, Netscape pushed boundaries with changes that weren’t universally liked. One of Navigator’s many innovations was rendering HTML as it downloaded, allowing users to begin reading a page as soon as possible, signaling the end for Mosaic in the process.

In a famous 10 day period in 1995, Brendan Eich created JavaScript for Netscape. They didn’t originate the idea of dynamically scripting a web page – ViolaWWW preceded them by 5 years – but much like Isaac Singer’s sewing machine, their popularity made them synonymous with the concept.

The implementation of the <script> tag went back to blocking HTML download and rendering. The limited communication resources commonly available at the time couldn’t handle fetching two data sources simultaneously, so when the browser saw <script> in the markup, it would pause HTML processing and switch to handling JS. In addition, any JS actions that affected HTML rendering, done via the browser-supplied API called the DOM, placed a computational strain on even that day’s cutting edge Pentium CPUs. So the browser would wait for the JavaScript to finish downloading, parse and execute it, and only after that pick up processing HTML where it had left off.

At first, very few coders did any substantial JS work. Even the name suggested that JavaScript was a lesser citizen compared to its server-side relatives like Java and ASP. Most JavaScript around the turn of the century limited itself to client side conditions the server couldn’t affect – often simple form activities like putting focus into the first field, or validating form input prior to submitting. The most common meaning of AJAX still referred to the caustic household cleaner, and almost all nontrivial actions required the full HTTP round trip to the server and back, so almost all web developers were backenders who looked down on the “toy” language.

Did you catch the gotcha in the last paragraph? Validating one form input might be simple, but validating multiple inputs on multiple forms gets complicated – and sure enough, so did JS codebases. Just as fast as it became apparent that client side scripting had undeniable usability benefits, so too the problems with vanilla script tags emerged: unpredictability with notification of DOM readiness; variable collisions in file concatenation; dependency management; you name it.

JS developers had a very easy time finding jobs, and a very hard time enjoying them. When jQuery appeared in 2006, developers adopted it warmly. Today, 65 to 70% of the top 10 million websites have jQuery installed. But jQuery was never intended to solve the architectural issues, and could offer little help with them: the “toy” language had made it to the big time, and needed big time skills.

What Exactly Did We Need?

Fortunately, other languages had already hit this complexity barrier, and found a solution: modular programming. Modules encouraged lots of best practices:

- Separation: Code needs to be separated into smaller chunks in order to be understandable. Best practices recommend these chunks should take the form of files.

- Composability: You want code in one file but reused in many others. This promotes flexibility in a codebase.

- Dependency management: 65% of sites might have jQuery installed, but what if your product is a site add-on, and needs a specific version? You want to reuse the installed version if it’s suitable, and load it if it’s not.

- Namespace management: Similar to dependency management – can you move the location of a file without rewriting core code?

- Consistent implementation: Developers shouldn’t each have to come up with their own solution to the same problems.

Early solutions

Each solution that JavaScript developers came up with for these problems influenced the structure of ES6 modules. We’ll review the major milestones in their evolution, and what the community learned at each step, finally showing the results in the form of today’s ES6 modules.

- Object Literal pattern

- IIFE/Revealing Module pattern

- CommonJS

- AMD

- UMD

Object Literal pattern

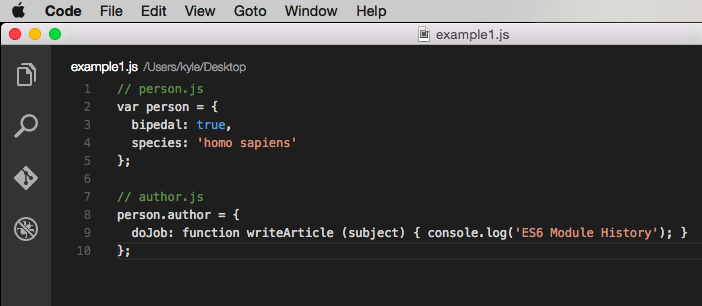

JavaScript already has a structure built in for organization: the object. The object literal syntax got used as an early pattern to organize code:

<!DOCTYPE html>

<html>

<head>

<script src="person.js"></script>

<script src="author.js"></script>

</head>

<body>

<script>

person.author.doJob('ES6 module history');

</script>

</body>

<script>

// shared scope means other code can inadvertently destroy ours

var person = 'all gone!';

</script>

</html>

What it offered

This approach’s primary benefit was ease in understanding and implementation. Many other aspects were not at all easy.

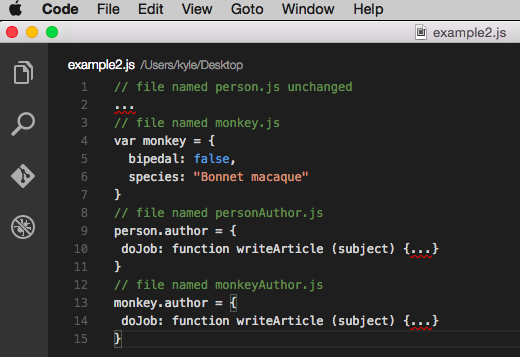

What held it back

It relied on a variable in the global scope (person) as its root; if some other JS on the page declared a variable by the same name, your code would disappear without a trace! In addition, there’s no reusability – if we wanted to support a monkey banging on a typewriter, we’d have to duplicate the author.js file and attach the copy to a monkey object instead.

Last, the order that files load in is critical. All the versions of author will error if person (or monkey) doesn’t exist first.
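
The listings for person.js and author.js are not reproduced in the text, so what follows is only a minimal sketch of what the Object Literal pattern might look like, collapsed into a single script. The file names come from the page above; the property values and the body of doJob are assumptions made for illustration.

```javascript
// person.js (hypothetical contents): the object literal lives in a global variable
var person = {
  name: 'Ross'
};

// author.js (hypothetical contents): attaches itself to the existing global,
// so it throws if person.js has not been loaded first
person.author = {
  doJob: function (job) {
    return person.name + ' is writing about ' + job;
  }
};

var firstResult = person.author.doJob('ES6 module history');

// Any later script shares the same scope and can overwrite the root object
person = 'all gone!';

// The author object hung off the old value, so it is no longer reachable
var authorAfterCollision = person.author;
```

After the last assignment, authorAfterCollision is undefined: nothing warned us, and the code attached to person is simply gone.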

IIFE/Revealing Module pattern

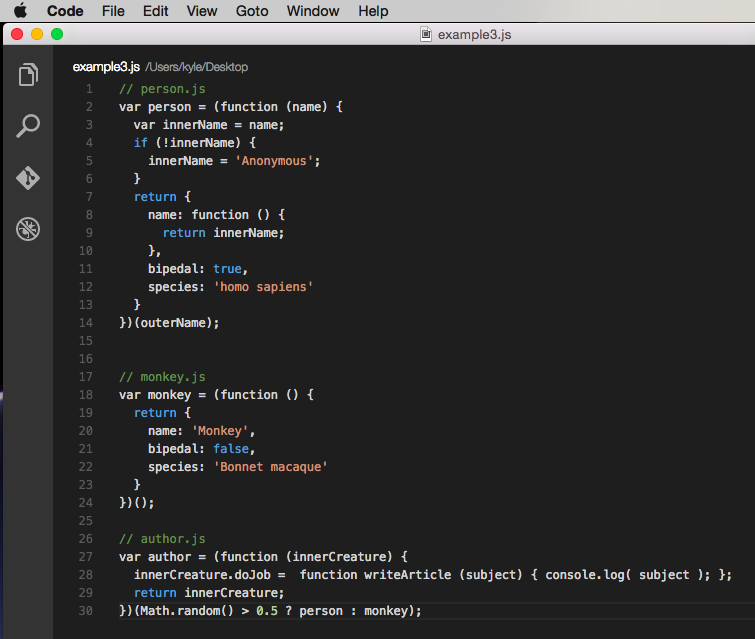

An IIFE (pronounced “iffy” according to the term’s coiner, Ben Alman) is an Immediately Invoked Function Expression. The function expression is the function keyword and body wrapped in the first set of parentheses. The second set of parens invokes the function, passing whatever is inside them as arguments to the function’s parameters. By returning an object from the function expression, we get the revealing module pattern:

// IIFEPage.html

<!DOCTYPE html>

<html>

<head>

<script>

// set init values

var outerName = 'Ross';

</script>

<script src="person.js"></script>

<script src="monkey.js"></script>

<script src="author.js"></script>

<script>

// we can get rid of person after author has loaded....

person = undefined;

</script>

</head>

<body>

<script>

// ...and author will still work here

author.doJob('ES6 Module History');

</script>

</body>

</html>

What it offered

Due to closure, we have a lot of control inside the IIFE. For example, innerName is essentially private, because nothing outside can access it – we choose to reveal access to it through the object property called name. We have constructor-like functionality, like setting a default value for name inside person. We have control of importing dependencies – if we need to rename something from the outside world (often jQuery’s “$” alias), we can take the exterior name in the arguments and give it any arbitrary name in the interior. Finally, combining these techniques allows us to implement the decorator pattern in author, which begins to decouple that code from its dependence on the creature which is passed to it.
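
To make those features concrete, here is a rough sketch of how person.js and author.js might be written with IIFEs, again collapsed into one script. The names outerName, innerName, name, person, author and doJob come from the text and page above; the function bodies and the 'Anonymous' default are assumptions for illustration only.

```javascript
// Value that would normally be set by an earlier <script> block
var outerName = 'Ross';

// person.js (hypothetical contents): revealing module built with an IIFE
var person = (function (initialName) {
  // innerName lives in the closure, so nothing outside can touch it directly;
  // the fallback acts like a constructor default
  var innerName = initialName || 'Anonymous';

  return {
    // we choose to reveal the private value through a property called name
    name: innerName
  };
}(outerName));

// author.js (hypothetical contents): the parameter renames the outside
// dependency, and the returned object decorates whatever creature is passed in
var author = (function (creature) {
  return {
    name: creature.name,
    doJob: function (job) {
      return creature.name + ' is writing about ' + job;
    }
  };
}(person));

// The global can go away; the closure inside author still holds the object
person = undefined;

var result = author.doJob('ES6 Module History');
```

Passing monkey instead of person in the final parentheses would reuse the same author code for a different creature, which is the decoupling the decorator approach buys us.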

What held it back

The IIFE/Revealing Module offered many features, and as we’ll see, had a strong influence on AMD. But the syntax was ugly, it was an ad-hoc hack without a standard, and it still relied heavily on global scope.

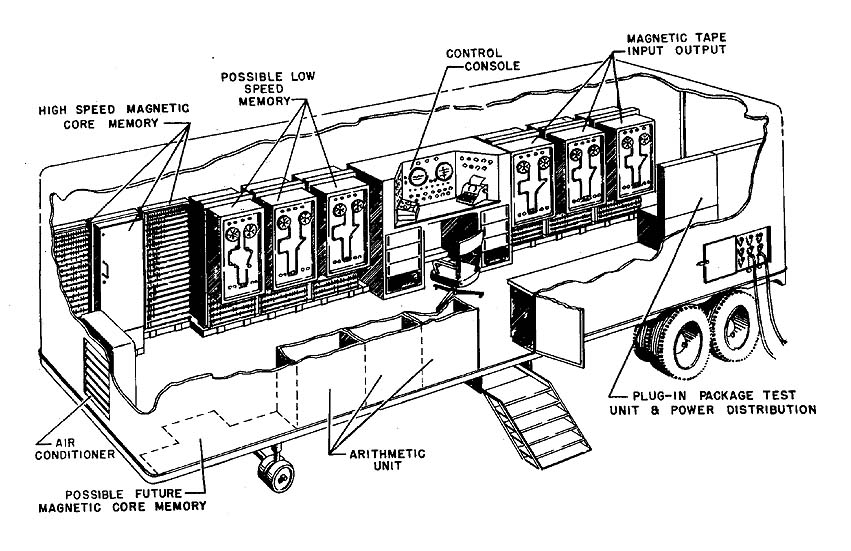

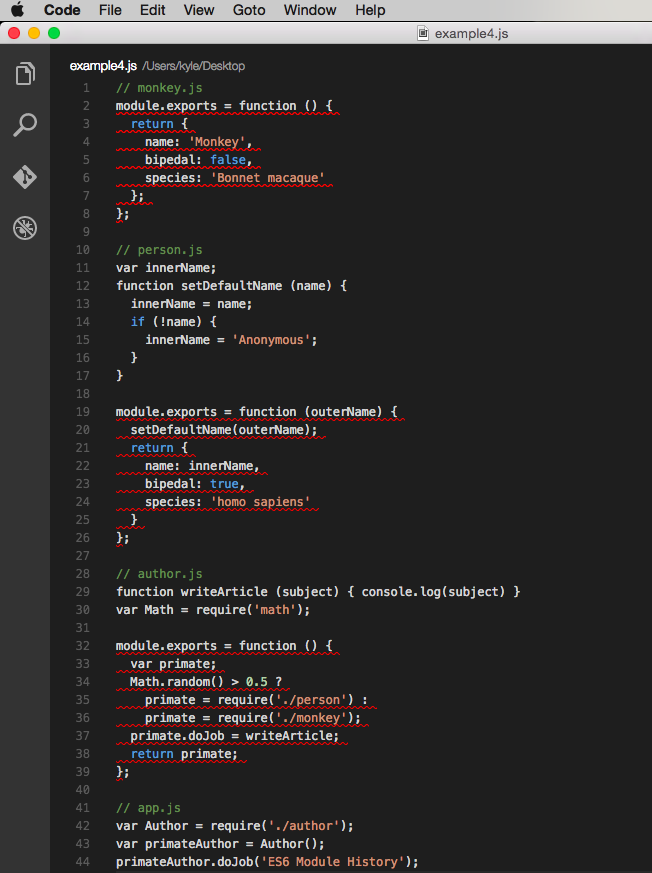

CommonJS

In a simultaneous branch of evolution, JavaScript moved server side. Spoiler alert: while Node won the battle, there were many early contenders, including Ringo, Narwhal, and Wakanda. These developers knew the modularity issues client-side JS had gone through, and wanted to skip the confusion and inefficiency of the early period. So they formed a working group to develop a standard for server side modules.

The old joke that a camel is a horse designed by committee is funny because it’s painfully true: committees do some things well, but design is not one of them, and the CommonJS group inevitably got bogged down in bikeshedding and other group anti-patterns. During this time though, Node created their own module implementation using some ideas from CommonJS. Due to Node’s overwhelming server side popularity, the Node format came to be known (incorrectly) as CommonJS, and thrives today.

Intended as a server side solution, CommonJS assumes a compatible script loader as a prerequisite. This script loader must support a function named require and an object named module.exports, which transport modules in and out of each other (and also a syntactic shortcut to module.exports named ‘exports’, which we won’t cover here). Although it never gained popularity in the browser, several tools did support loading it there, so we’ll look at pre-compiling via Browserify as an example.

// commonJSPage.html

<!DOCTYPE html>

<html>

<head>

<script src="out/bundle.js"></script>

</head>

<body>

<script>

</script>

</body>

</html>

What it offered

Given that external requirement for a loader, the syntax is clear and concise, and directly influenced ES6 module syntax. Also, modules limit variable scope to within themselves; a variable declared at the top of a file no longer becomes a global by accident.

What held it back

As a downside, CJS doesn’t play well in an asynchronous environment. All require calls have to be executed before code can proceed. This explains the pre-compilation step earlier, but it also makes “lazy loading” really difficult – all code needs to be loaded before any execution can begin. Not awesome on slow connections or underpowered devices.

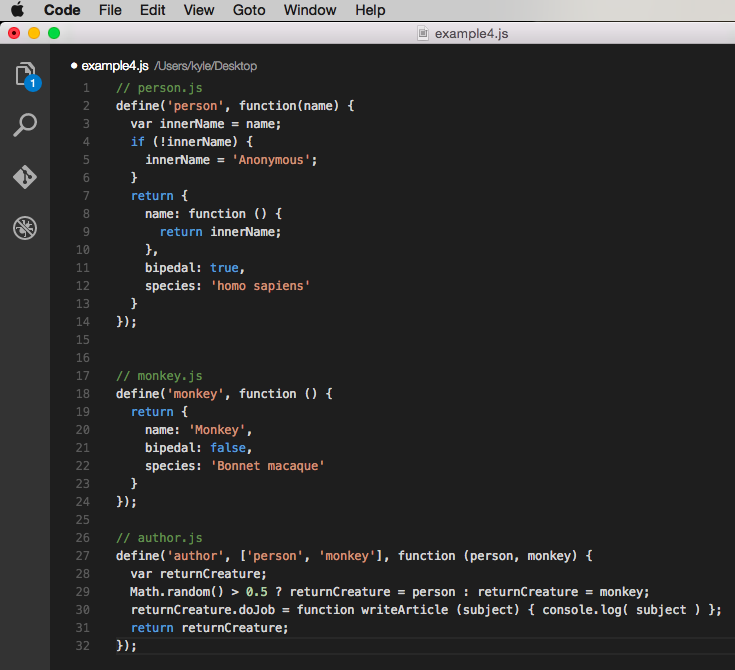

AMD

In a tremendous irony, while the CommonJS working group failed to agree on a server side standard, the discussions did produce a consensus on a client side format. AMD, or Asynchronous Module Definition, emerged out of CommonJS discussions. Major players like IBM and the BBC got behind AMD, and given their influence (are you starting to see a theme here?) it quickly became the dominant format among practitioners of the brand new discipline called front end development.

AMD has a similar prerequisite for a script loader as CJS, although AMD only assumes support for a method named define. The define method takes three arguments: the name of the module being defined, an array of dependencies the module being defined needs in order to run, and a function to execute once all dependencies are available (which receives the dependencies as arguments, in the order they were declared). Only the function argument is required.

It’s also worth noting that, while named modules are officially discouraged and don’t add any benefit by themselves, they’re unfortunately common in practice. Once you use named modules, you’re forced to set baseUrl and paths for each module, losing the freedom to change your code’s location in the process. The example below shows named modules, but if you’re starting from scratch, don’t use them unless you have to (wrapping non-AMD libs like jQuery and Underscore in AMD syntax is a likely candidate for valid use of named modules).

AMD’s define method corresponds to CJS’ module.exports, but AMD doesn’t specify an equivalent of the import or require method. Dependencies inside a module are handled by the second argument to define, and loading outside a module belongs to the script loader. For example, CurlJS named their loading method curl instead of require, and the two methods take different arguments.

This format takes advantage of the fact that JavaScript operates in two passes: parsing, when the code is interpreted (and when syntax errors are found), followed by execution, where the code is run (and where runtime errors are encountered). During parsing, the target of a variable (like returnCreature on line 2 of author.js) does not yet have to exist; the code just needs to be syntactically correct. The responsibility of waiting to execute the function until all the dependencies have finished loading falls to the script loader.
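
The parse-now, execute-later idea can be sketched with a toy version of define. This is not RequireJS or any real loader: it skips script fetching entirely, and registry, resolved and load are hypothetical names. It only shows that a factory function can be registered before its dependency exists, and that it receives its dependencies as arguments in the declared order.

```javascript
var registry = {}; // name -> { deps, factory }
var resolved = {}; // name -> value returned by the factory

// Toy define(): only records the module; nothing inside the factory runs yet
function define(name, deps, factory) {
  registry[name] = { deps: deps, factory: factory };
}

// Toy loader: resolves dependencies first, then calls the factory with them in order
function load(name) {
  if (!(name in resolved)) {
    var entry = registry[name];
    if (!entry) {
      throw new Error('Module not defined: ' + name);
    }
    var args = entry.deps.map(function (dep) {
      return load(dep);
    });
    resolved[name] = entry.factory.apply(null, args);
  }
  return resolved[name];
}

// author is defined BEFORE person exists; that is fine, because the
// factory body has only been parsed at this point, not executed
define('author', ['person'], function (person) {
  return {
    doJob: function (job) {
      return person.name + ' is writing about ' + job;
    }
  };
});

define('person', [], function () {
  return { name: 'Ross' };
});

var result = load('author').doJob('ES6 Module History');
```

A real loader does the same bookkeeping, but waits on network requests between define and the factory call instead of walking an in-memory registry.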

// AMDPage.html

<!DOCTYPE html>

<html>

<head>

<script src="script/require.js"></script>

</head>

<body>

<script>

require.config({

baseUrl:"script",

paths: {

"person":"person"

}

});

require(["author"],function(author) {

author.doJob('ES6 Module History');

});

</script>

</body>

</html>

Pros and cons

AMD ruled hard for many years, but leaned heavily on an ugly syntax which required a lot of boilerplate punctuation. Its asynchronous nature, which the browser demanded, meant it couldn’t be statically analyzed, and there were other similar small reasons that it wasn’t the solution for everyone.

UMD

They say a good compromise is when no one ends up happy. Universal Module Definition was an attempt to mash AMD and CJS together, usually by wrapping CJS syntax inside an AMD compatible wrapper.
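
One common shape for such a wrapper is sketched below. It checks for an AMD loader, then for a CommonJS-style module object, and finally falls back to a global. The author module and its doJob body are illustrative assumptions, and globalThis is a later addition to the language that is used here only to keep the sketch short.

```javascript
(function (root, factory) {
  if (typeof define === 'function' && define.amd) {
    // AMD loader present: register as an anonymous module
    define([], factory);
  } else if (typeof module === 'object' && module.exports) {
    // CommonJS-style environment such as Node
    module.exports = factory();
  } else {
    // No module system: fall back to a global, as in the IIFE pattern
    root.author = factory();
  }
}(globalThis, function () {
  // The module body itself is written once and shared by all three branches
  return {
    doJob: function (job) {
      return 'Someone is writing about ' + job;
    }
  };
}));
```

Depending on the environment, the same file ends up as an AMD module, as module.exports, or as a global named author, at the price of carrying all three sets of boilerplate.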

Pros and cons

Like most attempts at having cake and also eating it, it suffered from the drawbacks of both parents without providing a lot of benefits. It’s mentioned here for historical completeness, though it did take the first step towards the holy grail of Isomorphic JavaScript, which can run on both the server and client.

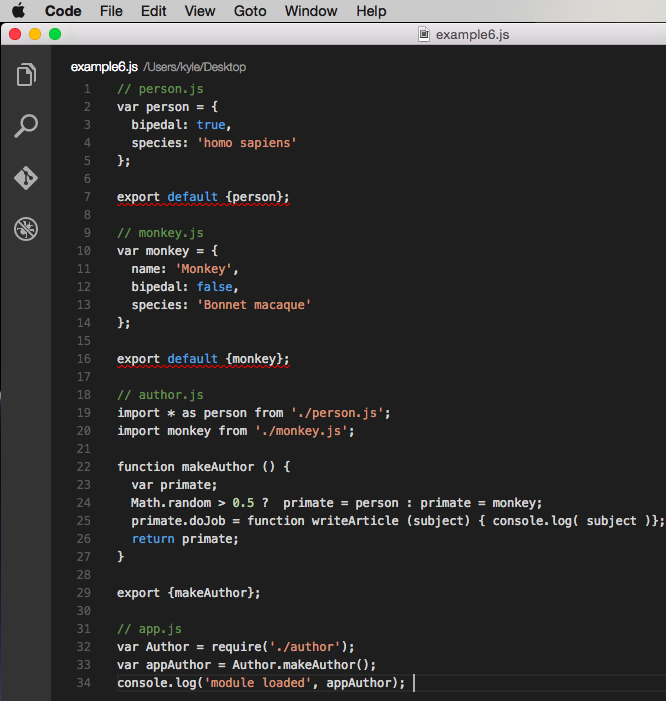

ES6 modules

The TC39 committee responsible for the design of ES6, the biggest change to the language in 15 years, learned the lessons of AMD and CJS well. ES6 modules will finally bring the built in support for modularity other languages have enjoyed for years, and include proposed features that make both front and back end developers happy. Here’s our example utilizing ES6 modules.

<!DOCTYPE html>

<html>

<head>

<script src="./out/bundle.js"></script>

</head>

<body>

<script>

console.log('page loaded');

</script>

</body>

</html>

Only one small problem remains: the front end ecosystem barely supports ES6 modules. At the time of writing, no browser natively supports the new import and export keywords, or the proposed HTML5 module element. Tools called transpilers, like Babel and Traceur, can precompile ES6 modules into valid ES5 code, which today’s browsers can process; but that ES5 has to be wrapped in an asynchronous syntax and then handled by a script loader or bundler like RequireJS, Browserify or SystemJS.

Trying to pass even a trivial ES6 module through these two abstract layers, transpilation and asynchronous loading, tends to create implementation challenges. While putting together this example, I had a run-time (browser) error that a require statement couldn’t find its dependency. I knew Browserify sometimes needs the dot-slash directory prefix to identify modules (‘./author’), but that broke my Babel build, as I had it set up to run a directory above the Browserify bundle. I’ve had similar issues with Babel and Webpack in a production app.

Point is, this is bleeding edge stuff. It’s not impossible to implement, given time, but expect to spend more time on configuration and troubleshooting than with a more established technique, like AMD + RequireJS.

Conclusion

The history of JavaScript module techniques mirrors the explosion and evolution of the internet itself. Just as mainframes no longer controlled helpless terminals, centralized control organizations like standards committees could no longer issue orders and expect the multitudes to silently obey. Individual contributors devised their own solutions to the problems they thought most important, and popular adoption represented votes for those solutions.

After different implementations have explored permutations of an idea, standards follow, to capture the learning. This attitude – pithily summed up by Steve Jobs as “real artists ship” – embodies the egalitarian and pragmatic spirit that makes modern web development so successful and exciting.

This article is part of the web development series from Microsoft tech evangelists and DevelopIntelligence on practical JavaScript learning, open source projects, and interoperability best practices including Microsoft Edge browser and the new EdgeHTML rendering engine. DevelopIntelligence offers instructor-led JavaScript Training and React Training for technical teams and organizations.

Frequently Asked Questions (FAQs) about ES6 Modules

What is the main difference between ES6 modules and CommonJS modules?

ES6 modules and CommonJS modules are both popular systems for managing JavaScript modules, but they have some key differences. The most significant difference is that ES6 modules use a static module structure, meaning the module structure is determined at compile time, not runtime. This allows for better static analysis, including tree shaking for eliminating unused exports and predicting what will be imported. On the other hand, CommonJS modules are dynamic, meaning they are loaded and interpreted at runtime, which can be more flexible but less efficient.

How can I use ES6 modules in Node.js?

Node.js has traditionally used the CommonJS module system, but it has added support for ES6 modules in more recent versions. To use ES6 modules in Node.js, you need to use the .mjs file extension or set "type": "module" in your package.json file. Then, you can use import and export syntax as you would in a browser environment.

Can I use ES6 modules in older browsers?

Older browsers do not natively support ES6 modules. However, you can use tools like Babel and Webpack to transpile your code into a format that older browsers can understand. These tools will convert your ES6 module syntax into equivalent CommonJS or AMD syntax that is compatible with older browsers.

What is the benefit of using ES6 modules over global variables?

Using ES6 modules provides several benefits over using global variables. First, modules help avoid naming conflicts by keeping variables and functions scoped to the module they are defined in. Second, modules make it easier to organize and manage your code by breaking it up into smaller, self-contained pieces. Finally, modules can improve performance by allowing for lazy loading and more efficient bundling of code.

How can I import a default export from an ES6 module?

To import a default export from an ES6 module, you can use the import statement followed by the name you want to assign to the default export and the path to the module. For example, import myDefault from './myModule'; will import the default export from myModule.js and assign it to the variable myDefault.

Can I import multiple exports from an ES6 module?

Yes, you can import multiple exports from an ES6 module using the import statement. To import named exports, you can list them in curly braces after the import keyword. For example, import {export1, export2} from './myModule'; will import the named exports export1 and export2 from myModule.js.

Can I use ES6 modules with React?

Yes, you can use ES6 modules with React. In fact, using ES6 modules is the recommended way to import and export components in React. This allows you to take advantage of the benefits of modules, such as better code organization and more efficient bundling.

What is tree shaking and how does it relate to ES6 modules?

Tree shaking is a technique used in modern JavaScript bundlers like Webpack and Rollup to eliminate unused code from the final bundle. Because ES6 modules have a static structure, bundlers can analyze the imports and exports at compile time and exclude any exports that are not used. This can significantly reduce the size of the final bundle and improve performance.

Can I mix ES6 modules and CommonJS modules in the same project?

While it is technically possible to mix ES6 modules and CommonJS modules in the same project, it is generally not recommended. Mixing module systems can lead to confusion and unexpected behavior. If you need to use a CommonJS module in a project that uses ES6 modules, recent versions of Node.js let an ES6 module import a CommonJS module directly, and bundlers like Webpack can resolve both formats in one build.

How can I export multiple values from an ES6 module?

To export multiple values from an ES6 module, you can use the export keyword followed by the values you want to export. You can export any number of values, and they can be any type of JavaScript value, including functions, objects, and primitive values. For example, export {value1, value2, value3}; will export the values value1, value2, and value3 from the module.