We often see plenty of discussion of optimization techniques for various areas of front-end development. One area that probably gets overlooked more than it should is HTML.

I’m certainly not a member of the ‘clean, non-presentational markup’ camp. But I do think consistency in markup is needed, especially when working on code that many developers are going to touch.

In this post, I’ll introduce a neat little tool that I revisited recently and that I think many development teams would do well to consider: HTML Inspector by Philip Walton.

Key Takeaways

- HTML Inspector is a highly customizable code quality tool that helps developers write better markup. It aims to strike a balance between the strict W3C validator and having no rules at all, reporting HTML issues to address as a series of messages in your browser’s developer tools console.

- HTML Inspector can be installed and used via the command line or in the browser. It can be run on any remote or local website in a few seconds, in any browser that has developer tools. You can also download and customize it, then load your own configured copy the same way.

- The tool is unique among HTML linters, with customizable warnings and the ability to write your own rules. It has no dependencies and runs on the live DOM in the browser, so it can also check markup that other scripts on the page add or modify dynamically.

- HTML Inspector is particularly useful for development teams, especially when new developers join for a short period of time. Customized rules can be put in place, and the new developer can run HTML Inspector with these rules, read the warnings in the console, and make the necessary adjustments to their code.

What is HTML Inspector?

As Philip explains in the repo’s README, it’s:

> a highly-customizable, code quality tool to help you (and your team) write better markup. It aims to find a balance between the uncompromisingly strict W3C validator and having absolutely no rules at all (the unfortunate reality for most of us).

Although I somewhat disagree with Philip’s statement that the W3C validator is “uncompromisingly strict” (doesn’t he remember XHTML?), I certainly agree that many teams of developers likely lack a set of logical in-house standards to keep their markup maintainable and up to date.

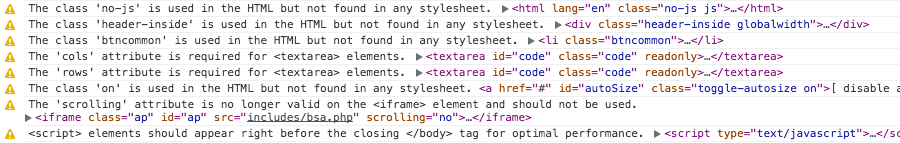

After running HTML Inspector, you’ll get a series of messages in your browser’s developer tools console, letting you know which issues in your HTML you should consider addressing.

You can see an example of HTML Inspector’s output in the image above, taken from a test I ran on one of my older projects, which I knew would likely have some questionable markup.

Install and Run HTML Inspector

If you’re testing your own non-production code, you can simply include the HTML Inspector script at the bottom of any of your pages, then run it by calling its primary method. Below is a brief description of the install methods, and then I’ll explain an even better way to get it to run on any website, remote or local.

To install and use it via the command line, you can use npm (the command-line version requires PhantomJS):

```shell
npm install -g html-inspector
```

Or you can install it with Bower for use in the browser:

```shell
bower install html-inspector
```

If you choose to download the tool manually, all you need to do is grab a copy of html-inspector.js from the project’s root directory and include it at the bottom of your document, after all other scripts have loaded. Then execute it, as shown below:

```html
<script src="js/html-inspector.js"></script>
<script>
  HTMLInspector.inspect();
</script>
```

But unless you’re customizing the script, you don’t need to do any of the above. You can run it on any remote or local website in a few seconds, in any browser that has developer tools.

Open your browser’s developer tools console and run the following commands:

```javascript
var htmlInsp = document.createElement('script');
htmlInsp.src = '//cdnjs.cloudflare.com/ajax/libs/html-inspector/0.8.1/html-inspector.js';
document.body.appendChild(htmlInsp);
```

After those have executed, run the following in the console:

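```javascript
HTMLInspector.inspect();
```

Note: As pointed out in the comments, you have to run this final line as a separate command, after the first three lines have executed, for this to work. The appended script loads asynchronously, so HTMLInspector isn’t defined until the file has finished downloading.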

This script uses the HTML Inspector code as hosted on cdnjs.
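One way to avoid entering the two steps separately is to wait for the script element’s load event and call inspect() from its handler. The following is a minimal sketch; loadScript() is a hypothetical helper, not part of HTML Inspector, and it takes the document as a parameter so it doesn’t rely on a global.

```javascript
// Hypothetical helper: appends a <script> element and calls onLoad only
// after the browser has downloaded and executed the file.
// The document is passed in rather than read from a global.
function loadScript(doc, src, onLoad) {
  var script = doc.createElement('script');
  script.src = src;
  // Attach the handler first, then insert the element into the page.
  script.onload = onLoad;
  doc.body.appendChild(script);
  return script;
}

// In the browser console, this replaces the two separate steps:
// loadScript(
//   document,
//   '//cdnjs.cloudflare.com/ajax/libs/html-inspector/0.8.1/html-inspector.js',
//   function () { HTMLInspector.inspect(); }
// );
```

Because HTMLInspector.inspect() runs inside the load handler, it can’t execute before the library exists.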

In fact, I’ll make it even easier for you; you can create a bookmarklet with the code in this Gist. Once you have the bookmarklet added to your bookmarks bar, execute it on any website, open the console, then run HTMLInspector.inspect().
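A bookmarklet is simply JavaScript stored in a javascript: URL. As a rough sketch (this is not the code from the Gist, and makeBookmarklet() is a hypothetical helper), you could wrap the three loader lines from above in a function and URL-encode them to get a link you can save to your bookmarks bar:

```javascript
// Hypothetical helper: wraps code in an immediately invoked function
// and URL-encodes it so it can be saved as a javascript: bookmark.
function makeBookmarklet(code) {
  return 'javascript:' + encodeURIComponent('(function(){' + code + '})();');
}

// The same three loader lines shown earlier, written as one string.
var loaderCode =
  "var htmlInsp = document.createElement('script');" +
  "htmlInsp.src = '//cdnjs.cloudflare.com/ajax/libs/html-inspector/0.8.1/html-inspector.js';" +
  "document.body.appendChild(htmlInsp);";

// Paste the printed URL into a new bookmark's address field.
console.log(makeBookmarklet(loaderCode));
```

Wrapping the code in a function keeps htmlInsp out of the page’s global scope.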

Try it on a really old project or pretty much any WordPress site if you want to see the kinds of warnings it spits out by default.

Of course, if you decide to customize HTML Inspector, you’ll need to download it and run your own configured version. So once you have your version ready, you can do the same as I’ve described above, but with a modified source URL.

HTML Inspector: The Great Parts

Here are some of the great things about this tool:

- It’s one of a kind; I haven’t seen another actively maintained tool that’s comparable for HTML linting. (If you know of one, let me know in the comments!)

- Like other similar tools for CSS and JavaScript, you can customize it to only warn you for the rules you like.

- You can write your own rules, which is probably the best feature of all.

- No dependencies (note that Philip’s original launch post says it requires jQuery, but that dependency was removed about a week later).

- It runs on the live DOM in the browser, not merely on the initial static HTML. This is great because it allows you to test on markup that gets added and modified dynamically by other scripts on the page.

A Great Tool for Dev Teams

As Philip explains in the aforementioned launch post, a great benefit of using HTML Inspector comes on teams where a number of developers might join for a short period of time (e.g. interns and new hires).

So imagine a new, less experienced developer working on your projects for three months, as a summer intern or something. It might normally take such an employee the full three months to get used to your way of writing code. But with HTML Inspector, all you need to do is have your customized rules in place. The new developer then just needs to run HTML Inspector with your custom rules, read the warnings in the console, and make any necessary adjustments to their code.

Changing the Defaults

There are two ways to customize HTML Inspector: changing the default values in the config settings or (as mentioned) writing your own rules. Let’s look first at the default settings.

Calling HTMLInspector.inspect() with no arguments runs the tool with its default settings. The inspect() method also accepts an optional config object, which you can use to override those defaults like this:

```javascript
HTMLInspector.inspect({
  domRoot: "main",
  excludeRules: ["validate-elements", "validate-attributes"],
  excludeElements: ["svg", "span", "input"],
  onComplete: function(errors) {
    errors.forEach(function(error) {
      console.warn(error.message, error.context)
    })
  }
});
```

Here I’ve started the inspection at the main element, decided not to validate elements or attributes, told the tool to exclude certain elements from being inspected, and supplied my own onComplete callback that logs each error with console.warn().
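If a page produces a lot of warnings, a custom onComplete callback can also organize them. The following is a minimal sketch that groups errors by rule before logging them. It assumes each error object exposes the rule name as a rule property alongside the message and context properties used above; groupErrorsByRule() and reportGrouped() are hypothetical helpers, not part of HTML Inspector.

```javascript
// Hypothetical helper: buckets error objects by their (assumed) `rule` property.
function groupErrorsByRule(errors) {
  var groups = {};
  errors.forEach(function (error) {
    if (!groups[error.rule]) {
      groups[error.rule] = [];
    }
    groups[error.rule].push(error);
  });
  return groups;
}

// Hypothetical onComplete callback: one summary line per rule,
// followed by that rule's individual messages.
function reportGrouped(errors) {
  var groups = groupErrorsByRule(errors);
  Object.keys(groups).forEach(function (rule) {
    console.warn(rule + ': ' + groups[rule].length + ' warning(s)');
    groups[rule].forEach(function (error) {
      console.warn('  ' + error.message, error.context);
    });
  });
}
```

In the browser you would pass it in as HTMLInspector.inspect({ onComplete: reportGrouped }).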

For full details on modifying these defaults, check out the pertinent sections of the README file in the GitHub repo.

Writing Custom Rules

As mentioned, you might not agree with HTML Inspector’s default inspection warnings. If that’s the case, or if you want to add to them, you can turn to the most powerful part of the tool: the ability to write your own rules.

You can do this using the following structure:

```javascript
HTMLInspector.rules.add(name, [config], func)
```

The README gives the example of a dev team that previously used two specific data-* attribute namespaces that are now internally deprecated in favor of another method. Here’s how the code would look:

```javascript
HTMLInspector.rules.add(
  "deprecated-data-prefixes",
  {
    deprecated: ["foo", "bar"]
  },
  function(listener, reporter, config) {

    // register a handler for the `attribute` event
    listener.on('attribute', function(name, value, element) {

      var prefix = /data-([a-z]+)/.test(name) && RegExp.$1

      // return if there's no data prefix
      if (!prefix) return

      // loop through each of the deprecated names from the
      // config array and compare them to the prefix.
      // Warn if they're the same
      config.deprecated.forEach(function(item) {
        if (item === prefix) {
          reporter.warn(
            "deprecated-data-prefixes",
            "The 'data-" + item + "' prefix is deprecated.",
            element
          )
        }
      })
    }
  )
});
```

(Take note that Philip is a madman and thus doesn’t use semicolons at the end of lines!)
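Because a rule is just a function that receives a listener, a reporter, and a config object, you can exercise its logic outside the browser with simple stand-ins. The sketch below is a hypothetical test harness: createFakeListener() and createFakeReporter() are not part of HTML Inspector; they only mimic the on() and warn() calls the rule above makes. The rule body is a lightly adapted version of the README example that reads the prefix from the array returned by exec() instead of the legacy RegExp.$1 property, and reports at most one warning per attribute.

```javascript
// Hypothetical stand-in for HTML Inspector's listener: stores handlers
// by event name and lets a test fire events manually.
function createFakeListener() {
  var handlers = {};
  return {
    on: function (event, fn) {
      (handlers[event] = handlers[event] || []).push(fn);
    },
    trigger: function (event) {
      var args = Array.prototype.slice.call(arguments, 1);
      (handlers[event] || []).forEach(function (fn) {
        fn.apply(null, args);
      });
    }
  };
}

// Hypothetical stand-in for the reporter: records every warning.
function createFakeReporter() {
  var warnings = [];
  return {
    warnings: warnings,
    warn: function (rule, message, context) {
      warnings.push({ rule: rule, message: message, context: context });
    }
  };
}

// Adapted rule: same check as the README example, using exec() results.
function deprecatedDataPrefixes(listener, reporter, config) {
  listener.on('attribute', function (name, value, element) {
    var match = /data-([a-z]+)/.exec(name);
    if (!match) return; // not a data-* attribute
    var prefix = match[1];
    if (config.deprecated.indexOf(prefix) !== -1) {
      reporter.warn(
        'deprecated-data-prefixes',
        "The 'data-" + prefix + "' prefix is deprecated.",
        element
      );
    }
  });
}

var listener = createFakeListener();
var reporter = createFakeReporter();
deprecatedDataPrefixes(listener, reporter, { deprecated: ['foo', 'bar'] });

// Simulate three attributes; only data-foo uses a deprecated prefix.
listener.trigger('attribute', 'data-foo', 'x', { tagName: 'DIV' });
listener.trigger('attribute', 'data-baz', 'y', { tagName: 'SPAN' });
listener.trigger('attribute', 'class', 'z', { tagName: 'P' });

console.log(reporter.warnings.length); // 1
```

In the browser, the same function could then be registered with HTMLInspector.rules.add("deprecated-data-prefixes", { deprecated: ["foo", "bar"] }, deprecatedDataPrefixes).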

I’ve only scratched the surface of what’s possible with custom rules. I’m hoping to find some time to delve deeper into them later on, and maybe I’ll write about it. In the meantime, you can check out the relevant parts of the README for more info.

Conclusion

As is true of many developer tools, you’ll need a modern browser for HTML Inspector to work (for example, the browser needs to support ES5 methods, the CSSOM, and console.warn()).

As mentioned, I haven’t seen too many tools like this one (besides a regular validator). There was a general HTML Lint tool that seems to have disappeared. But other than that, I can’t remember another tool like this one – and certainly not one that’s as customizable and that runs on a live DOM.

If you’ve tried out HTML Inspector or know of another similar tool, feel free to let us know in the discussion.

Frequently Asked Questions (FAQs) about Inspecting HTML in the Browser

What is a browser’s HTML inspector, and why is it important?

A browser’s HTML inspector (the element inspector built into the developer tools, not to be confused with Philip Walton’s HTML Inspector linting tool covered above) lets you view and edit the HTML of a webpage. It is a crucial tool for web developers and designers, because it helps them understand the structure of a page, debug issues, and test new code without making permanent changes to the live site. It also helps you learn how different elements and styles are applied to a page.

How can I access the browser’s HTML inspector?

You can open it through your browser’s developer tools. For instance, in Google Chrome, you can right-click any element on a webpage and select ‘Inspect’ from the context menu. This opens the Elements panel, with the code for the selected element highlighted.

Can I make permanent changes to a webpage using the inspector?

No. The changes you make in the inspector are temporary and only visible in your own browser; once you refresh the page, they are lost. This makes it particularly useful for testing and debugging code without affecting the live site.

How can I use the inspector to learn web development?

The inspector provides a hands-on approach to learning web development. By inspecting different elements on a webpage, you can see how they are structured and styled. You can also experiment by editing the HTML and CSS and observing the changes in real time.

What is the difference between the browser’s inspector and a website analyzer?

While both tools are used for examining webpages, they serve different purposes. The inspector lets you view and edit a page’s HTML and CSS, whereas a website analyzer provides a broader analysis of a page, including its performance, SEO, accessibility, and more.

Can I use the inspector on any webpage?

Yes, you can use the inspector on any webpage. Some websites try to discourage it (for example, by disabling the right-click menu), but you can still open the developer tools from the browser’s menu or with a keyboard shortcut.

How can I use the inspector to debug issues?

The inspector helps you identify and fix issues in your code. By inspecting elements, you can see how they are structured and styled and spot any inconsistencies or errors. You can also edit the code in real time to test potential fixes.

Can I use the inspector on mobile devices?

Yes, although the process is more involved. Some browsers, like Google Chrome, offer remote debugging features that let you inspect a webpage running on a mobile device from your desktop.

How can I use the inspector to improve my website’s performance?

The inspector, together with the other panels in your browser’s developer tools, can help you identify elements that may be affecting your website’s performance. For instance, you can check whether images are properly optimized, whether scripts are loading efficiently, or whether there are unnecessary elements that could be removed.

Can I use the inspector to view a website’s SEO elements?

Yes, you can use the inspector to view a website’s SEO-related markup, such as meta tags, alt attributes, and heading tags. However, for a more comprehensive SEO analysis, you may want to use a dedicated SEO tool.

Louis is a front-end developer, writer, and author who has been involved in the web dev industry since 2000. He blogs at Impressive Webs and curates Web Tools Weekly, a newsletter for front-end developers with a focus on tools.

Published February 28, 2017