How to Make Ajax Applications Google-Crawlable

Key Takeaways

- Ajax applications can be made crawlable by Google by modifying the URL to include an exclamation mark following the hash, serving a snapshot of the full page, and adding these new links to the sitemap. However, this process can be complex and may require creating static versions of pages or using a system like HtmlUnit.

- Progressive enhancement techniques can also be used to make Ajax applications crawlable. These techniques involve creating pages that can work without JavaScript, which can then be indexed by search engines. Ajax can then be used to enhance the user experience for those with JavaScript enabled.

- While Google’s crawling system can be helpful for Ajax applications, it is not the only solution. It’s important to ensure that Ajax content is accessible and crawlable, as not all search engine bots can execute JavaScript. Strategies to make Ajax applications more SEO-friendly include using pre-rendering or server-side rendering, and building the site with basic HTML and CSS first before adding Ajax functionality.

Ajax changed the Web. Microsoft pioneered the technologies back in 1999, but an explosion of Web 2.0 applications appeared after Jesse James Garrett devised the acronym in 2005.

Although it makes web applications slicker and more responsive, Ajax-powered content cannot (always) be indexed by search engines. Crawlers are unable to run JavaScript content-updating functions. In extreme cases, all Google might index is:

    <html>
    <head>
    <title>My Ajax-powered page</title>
    <script type="text/JavaScript" src="application.js"></script>
    </head>
    <body>
    </body>
    </html>

It’s enough to make an SEO expert faint.

Google to the rescue?

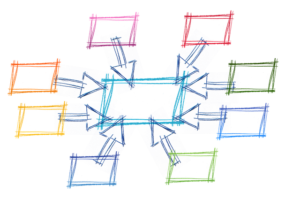

Google has devised a new technique to make Ajax applications crawlable. It’s a little complex, but here’s a summary of the implementation.

1. Tell the crawler about your Ajax links

Assume your application retrieves data via an Ajax call to this address:

www.mysite.com/ajax.php#state

You’d need to modify that URL and add an exclamation mark following the hash:

www.mysite.com/ajax.php#!state

Links in your HTML will be followed, but you should add these new links to your sitemap if they’re normally generated within the JavaScript code.
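As a rough illustration of this step, the sketch below rewrites `#state` links as `#!state` links and builds a list of URLs that could be added to a sitemap. The function names `toHashbangUrl` and `sitemapUrls` and the example state names are hypothetical; they are not part of Google's scheme.

```javascript
// Hypothetical helper: rewrite a "#state" fragment as a "#!state" hashbang.
function toHashbangUrl(url) {
  const hashIndex = url.indexOf('#');
  if (hashIndex === -1) return url; // no fragment: nothing to change

  const base = url.slice(0, hashIndex);
  const fragment = url.slice(hashIndex + 1);

  // Leave empty fragments and existing hashbang URLs untouched.
  if (fragment === '' || fragment.startsWith('!')) return url;

  return base + '#!' + fragment;
}

// Hypothetical helper: list the crawlable URL for every application state
// that is normally only generated inside the JavaScript code.
function sitemapUrls(baseUrl, states) {
  return states.map((state) => toHashbangUrl(baseUrl + '#' + state));
}

console.log(toHashbangUrl('www.mysite.com/ajax.php#state'));
// prints "www.mysite.com/ajax.php#!state"
```

Calling `sitemapUrls('www.mysite.com/ajax.php', ['state', 'other'])` would return both hashbang URLs, ready to be written into the sitemap by whatever process normally generates it.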

2. Serving Ajax content

The Google crawler will translate your Ajax URL and request the following page:

www.mysite.com/ajax.php?_escaped_fragment_=state

Note that arguments are escaped; for example, ‘&’ will be passed as ‘%26’. You will therefore need to unescape and parse the string. PHP already decodes values read from $_GET, so urldecode() is only needed if you parse the raw query string yourself.
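The translation and the unescaping can be sketched in JavaScript (for example, on a Node.js server). This is a minimal sketch under a few assumptions: `toEscapedFragmentUrl` only approximates what the crawler does, because `encodeURIComponent` escapes more characters than Google's scheme requires, and both function names are hypothetical.

```javascript
// Approximation of the crawler's translation: "#!state" becomes
// "?_escaped_fragment_=state" (or "&_escaped_fragment_=..." if the URL
// already has a query string). Handy for testing your own server.
function toEscapedFragmentUrl(url) {
  const marker = url.indexOf('#!');
  if (marker === -1) return url; // not a hashbang URL: left as-is

  const base = url.slice(0, marker);
  const fragment = url.slice(marker + 2);
  const separator = base.includes('?') ? '&' : '?';

  return base + separator + '_escaped_fragment_=' + encodeURIComponent(fragment);
}

// Server side: recover the original state from the requested URL.
// The base URL is only there because the URL constructor needs an
// absolute address; searchParams.get() unescapes the value and
// returns null when the parameter is missing.
function stateFromCrawlerUrl(requestUrl) {
  const url = new URL(requestUrl, 'http://www.mysite.com');
  return url.searchParams.get('_escaped_fragment_');
}

console.log(toEscapedFragmentUrl('www.mysite.com/ajax.php#!state'));
// prints "www.mysite.com/ajax.php?_escaped_fragment_=state"

console.log(stateFromCrawlerUrl('/ajax.php?_escaped_fragment_=a%26b'));
// prints "a&b"
```

A `null` result means the request came from an ordinary visitor rather than through the crawler's translated URL, so the normal page can be served.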

Your server must now return a snapshot of the whole page, that is, what the HTML code would look like after the Ajax call had executed. In other words, it’s a duplication of what you would see in Firebug’s HTML window following a dynamic update.

This should be relatively straightforward if your Ajax requests normally return HTML via a PHP or ASP.NET process. However, the situation becomes a little more complex if you normally return XML or JSON that’s parsed by JavaScript and inserted into the DOM. Google suggests creating static versions of your pages or using a system such as HtmlUnit to programmatically fetch the post-JavaScript execution HTML.
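If your Ajax calls return JSON, one way to produce a static version is to render the same data into HTML on the server whenever the crawler asks for a snapshot. The sketch below is only an illustration of that idea: the `renderSnapshot` and `escapeHtml` names, the `content` ID, and the shape of the data (a title plus a list of strings) are all assumptions.

```javascript
// Escape text so that data values cannot break the generated markup.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

// Build the full page as it would look after the Ajax call had run.
// "items" stands in for whatever data the JSON response would carry.
function renderSnapshot(title, items) {
  const listItems = items
    .map((item) => '<li>' + escapeHtml(item) + '</li>')
    .join('\n');

  return [
    '<html>',
    '<head>',
    '<title>' + escapeHtml(title) + '</title>',
    '</head>',
    '<body>',
    '<ul id="content">',
    listItems,
    '</ul>',
    '</body>',
    '</html>'
  ].join('\n');
}

console.log(renderSnapshot('My Ajax-powered page', ['First item', 'Second item']));
```

The server would return this string only for requests that carry the `_escaped_fragment_` parameter; regular visitors still receive the normal JavaScript-driven page.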

That’s the essence of the technique, but I’d recommend reading Google’s documentation — there are several other issues and edge cases.

Is this progress(ive)?

Contrary to popular opinion, sites using Ajax can be indexed by search engines … if you adopt progressive enhancement techniques. Pages that work without JavaScript will be indexed by Google and other search engines. You can then apply a sprinkling of Ajax magic to progressively enhance the user experience for those who have JavaScript enabled.

For example, assume you want to page through the SitePoint library ten books at a time. A standard HTML-only request/response page would be created with navigation links; for example:

library.php

    <html>
    <head>
    <title>SitePoint library</title>
    </head>
    <body>
    <table id="library">
    <?php // show books at appropriate page ?>
    </table>
    <ul>
    <li><a href="library.php?page=-1">BACK</a></li>
    <li><a href="library.php?page=1">NEXT</a></li>
    </ul>
    </body>
    </html>

The page can be crawled by Google and the links will be followed. Users without JavaScript can also use the system. JavaScript progressive enhancements could now be added to:

- check for the existence of a table with the ID of “library”

- add event handlers to the navigation links

- when a link is clicked, cancel the standard navigation event and start an Ajax call. This would retrieve new data and update the table without a full page refresh.
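The enhancement steps above could be sketched as follows. This is one possible approach rather than a definitive implementation: it assumes a browser with `fetch` and `DOMParser`, reuses the existing library.php URLs instead of a dedicated Ajax endpoint, and swaps the whole page body so the BACK and NEXT links are refreshed along with the table. The `enhanceLibrary` name is hypothetical.

```javascript
// Progressive enhancement: the page already works without this script.
function enhanceLibrary(doc) {
  // 1. Only enhance pages that contain the library table.
  if (!doc.getElementById('library')) return false;

  // 2. One delegated handler covers the navigation links, including
  //    the links that arrive with each newly loaded page.
  doc.addEventListener('click', (event) => {
    const link = event.target.closest('a[href^="library.php"]');
    if (!link) return;

    // 3. Cancel the standard navigation and fetch the page with Ajax.
    event.preventDefault();
    fetch(link.href)
      .then((response) => response.text())
      .then((html) => {
        const fetched = new DOMParser().parseFromString(html, 'text/html');
        // Replace the table and navigation without a full page refresh.
        doc.body.innerHTML = fetched.body.innerHTML;
      })
      .catch(() => {
        // If the Ajax call fails, fall back to normal navigation.
        window.location.assign(link.href);
      });
  });

  return true;
}

// Run in the browser only; the guard keeps the file loadable elsewhere.
if (typeof document !== 'undefined') {
  document.addEventListener('DOMContentLoaded', () => enhanceLibrary(document));
}
```

Because the links keep their ordinary `href` values, crawlers and users without JavaScript continue to follow them as standard page requests.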

While I welcome Google’s solution, it seems like a complex sledgehammer to crack a tiny nut. If you’re confused by techniques such as progressive enhancement and Hijax, you’ll certainly have trouble implementing Google’s crawling system. However, it might help those with monolithic Ajax applications who failed to consider SEO until it was too late.

Frequently Asked Questions (FAQs) about How Google Crawls and Indexes AJAX Applications

How does Googlebot interact with AJAX applications?

Googlebot interacts with AJAX applications by crawling and indexing them. It uses a process called rendering to interpret the content of a page, including AJAX-based elements. The bot fetches the necessary resources, executes JavaScript, and then processes the rendered HTML for indexing. However, it’s important to note that not all search engine bots can execute JavaScript, so it’s crucial to ensure your AJAX content is accessible and crawlable.

What is the difference between traditional crawling and AJAX crawling?

Traditional crawling involves search engine bots scanning the HTML of a webpage to index its content. AJAX crawling, on the other hand, requires the bot to execute JavaScript to access and index the content. This is because AJAX content is dynamically loaded and may not be immediately visible in the HTML.

Why is my AJAX content not appearing in Google search results?

If your AJAX content is not appearing in Google search results, it could be due to several reasons. One common issue is that Googlebot may not be able to execute the JavaScript required to load your AJAX content. This could be due to complex or inefficient code, or because the bot is being blocked from accessing necessary resources. It’s also possible that Googlebot is encountering errors when trying to render your page.

How can I make my AJAX applications more SEO-friendly?

There are several strategies to make your AJAX applications more SEO-friendly. One approach is to use progressive enhancement, which involves building your site with basic HTML and CSS first, then adding AJAX functionality on top. This ensures that your content is accessible even if JavaScript is not executed. Another strategy is to use pre-rendering or server-side rendering to generate a static HTML snapshot of your AJAX content for bots to crawl.

What is the role of the ‘escaped fragment’ in AJAX crawling?

The ‘escaped fragment’ was a method proposed by Google to make AJAX applications crawlable. It involved serving a static HTML snapshot of a page to bots when they encountered a URL with a ‘hashbang’ (#!). However, Google deprecated this method in 2015 and now recommends using progressive enhancement or dynamic rendering instead.

How can I test if Googlebot can render my AJAX content?

You can use Google’s URL Inspection tool in Search Console to test how Googlebot renders your page. This tool shows you the rendered HTML as seen by Googlebot, any loading issues encountered, and whether any resources were blocked.

Can other search engine bots crawl AJAX content?

While Googlebot is capable of crawling and indexing AJAX content, not all search engine bots have this capability. Therefore, it’s important to ensure your content is accessible without JavaScript to maximize its visibility in all search engines.

How does AJAX affect page load speed and SEO?

AJAX can improve page load speed by allowing content to be loaded dynamically without a full page refresh. However, if not implemented correctly, it can also slow down your site and make it harder for search engine bots to crawl and index your content. Therefore, it’s important to optimize your AJAX code for both performance and SEO.

What is the impact of AJAX on mobile SEO?

AJAX can enhance the user experience on mobile devices by enabling dynamic content loading and interactive features. However, it’s important to ensure your AJAX content is mobile-friendly and accessible to Googlebot, as Google uses mobile-first indexing.

How can I monitor the performance of my AJAX applications in Google Search?

You can use Google Search Console to monitor the performance of your AJAX applications in Google Search. This tool provides data on your site’s visibility in search results, any crawling or indexing issues, and how users are interacting with your site.

Craig is a freelance UK web consultant who built his first page for IE2.0 in 1995. Since that time he's been advocating standards, accessibility, and best-practice HTML5 techniques. He's created enterprise specifications, websites and online applications for companies and organisations including the UK Parliament, the European Parliament, the Department of Energy & Climate Change, Microsoft, and more. He's written more than 1,000 articles for SitePoint and you can find him @craigbuckler.
