Usability Testing Goals: Knowing ‘Why’ Before ‘How’

Key Takeaways

- Usability testing should begin with a clear understanding of goals and objectives, which includes knowing what you’re testing for and defining your metrics.

- Categorizing your goals can be beneficial, and this can be done by asking the right questions about your product, users, success measures, competition, research needs, and timing and scope.

- Once your goals are set, you need to determine what type of feedback will be most useful for your results. This could be graphs, rating scales, personal user accounts, numbers, written responses or sound bites, depending on who will be reviewing the data.

- Usability metrics, such as success rate, error rate, time to completion, and subjective measures, are quantitative data that can help understand user performance on tasks. However, these metrics should be used in conjunction with qualitative data to avoid the risk of numbers fetishism.

Figure out your direction before testing designs

Like all significant undertakings, usability testing calls for a plan. As you’ll see, a little extra time spent planning at the beginning pays off in the end.

By following a few simple guidelines, you’ll know what to expect, what to look for, and what to take away from your usability testing.

Obviously everyone wants to optimize the results of their usability testing. But to do that, you must first know what you’re testing for. We’ll explain how to define your testing objectives and set your usability metrics.

Defining Your Usability Goals

There’s no question about what Waldo looks like before you open the book, but all too often companies jump the gun with their usability tests by not knowing what they’re looking for, or even why.

That is why the first step in usability research should always be knowing what you are trying to find out, which is not as easy as it sounds. First, you need to categorize your testing goals and decide what type of data is most appropriate.

1. Categorizing Your Goals

Sometimes it helps to break out your different objectives into categories. Michael Margolis, a UX Researcher at Google Ventures Design Studio, believes the first step to determining objectives is knowing the right questions to ask (he lists them in categories).

It helps to first hold a preliminary meeting with stakeholders to gauge how much they know about the product (features, users, competitors, etc.) as well as constraints (schedule, resourcing, etc.).

Once you know that, you can ask a set of questions to help focus the team on research questions (“Why do people enter the website and not watch the demo video?”) versus just dictating methods (“We need to do focus groups now!”).

- Relevant Product Information — Do you know the history of your product? Do you know what’s in store for the future? Now would be a good time to find out.

- Users — Who uses your product? Who do you want to use your product? Be as specific as possible: demographics, location, usage patterns — whatever you can find out.

- Success — What is your idea of success for this product? Is it sales, downloads, pageviews, engagement or some other measure? Make sure the entire team is on the same page.

- Competitors — Who will be your biggest competition? How do you compare? What will your users be expecting based on your competition?

- Research — This might seem like a no-brainer when planning your research, but what do you want to know? What data would help your team best? Is that research already available to you so that you’re not wasting your time?

- Timing and Scope — What time frame are you working with for collecting your data? When is it due?

Once you’ve finished your benchmark questions, you can reverse the roles and have your team write down their questions. That way you will have identified what they know, and what they’d like to know.

Becky White of Mutual Mobile talks about a sample exercise to help you narrow down your goals. Gather your team together and hand out sticky notes. Then, have everyone write down questions they have about their users and the UX. Collect all the questions and stick them to a board.

Finally, try to organize all the questions based on similarity. You’ll see that certain categories will have more questions than others — these will likely become your testing objectives.
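If the sticky notes end up in a spreadsheet or a shared document, the grouping step can be tallied in a few lines. The sketch below is only an illustration: the category labels and questions are made up, and `rank_categories` is a hypothetical helper, not part of any tool mentioned in this article.

```python
from collections import Counter


def rank_categories(tagged_questions):
    """Count questions per category, largest group first.

    tagged_questions is a list of (category, question) pairs, where the
    category is whatever label the team agreed on while sorting the notes.
    """
    counts = Counter(category for category, _question in tagged_questions)
    return counts.most_common()


# Made-up sticky notes, for illustration only.
notes = [
    ("navigation", "Can visitors find the pricing page?"),
    ("navigation", "Do users notice the main menu on mobile?"),
    ("checkout", "Where do people abandon the cart?"),
    ("navigation", "Is the search box easy to spot?"),
    ("checkout", "Are the shipping options clear?"),
    ("onboarding", "Do new users watch the demo video?"),
]

ranking = rank_categories(notes)
for category, count in ranking:
    print(f"{category}: {count} question(s)")
```

The categories at the top of the ranking are the candidates for testing objectives; the counts are a starting point for discussion, not a substitute for it.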

As discussed in The Guide to Usability Testing, it also helps to make sure your testing objectives are as simple as possible. Your objectives should be simple like “Can visitors find the information they need?” instead of complex objectives like “Can visitors easily find our products and make an informed purchase decision?”

If you like the idea of using usability testing questions as a means to set your goals, Userium offers a helpful website usability checklist. If you notice you’re lacking in one or more categories, those are the areas where collecting data would be most helpful (and they make good talking points if your team gets stuck during the initial Q&A).

2. Knowing What to Measure

Now that you know your goals, it’s time to figure out how to apply usability testing to accomplish them. Essentially, you’re clarifying the greater scope of your testing.

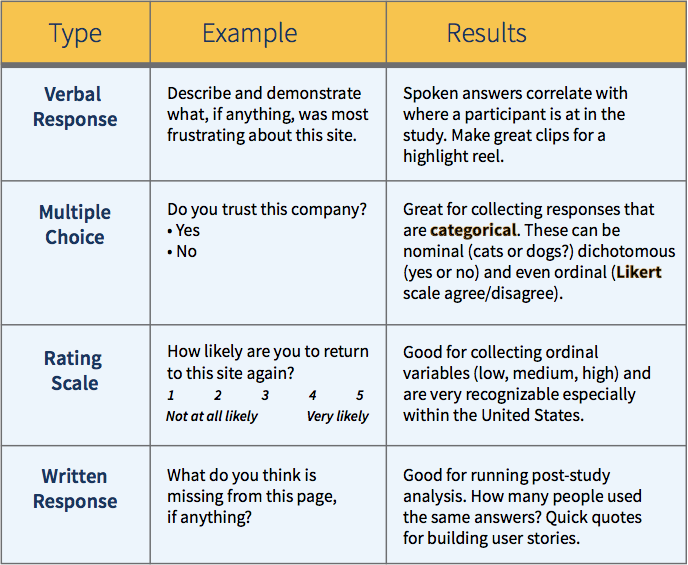

The UserTesting e-book about usability testing suggests that you must first understand what type of feedback would be most helpful for your results. Does your team need a graph or a rating scale? Personal user accounts or numbers? Written responses or sound bites?

The people who will read the data can influence which type is best to collect: skeptical stakeholders might be convinced by the cold, hard numbers of a graphed quantitative rating scale, while the CEO might only grasp a problem after seeing a video clip of users failing at a certain task.

This is why knowing your usability goals first is so important. If you don’t know the overall goals and objectives, then you certainly don’t know what type of feedback and data you need. This chart below should help give you an example of how the type of data affects the type of testing.

Once you know your goals and what type of data you’re looking for, it’s time to begin planning the actual tests. But before we get into that, let’s talk a little about metrics.

Usability Metrics

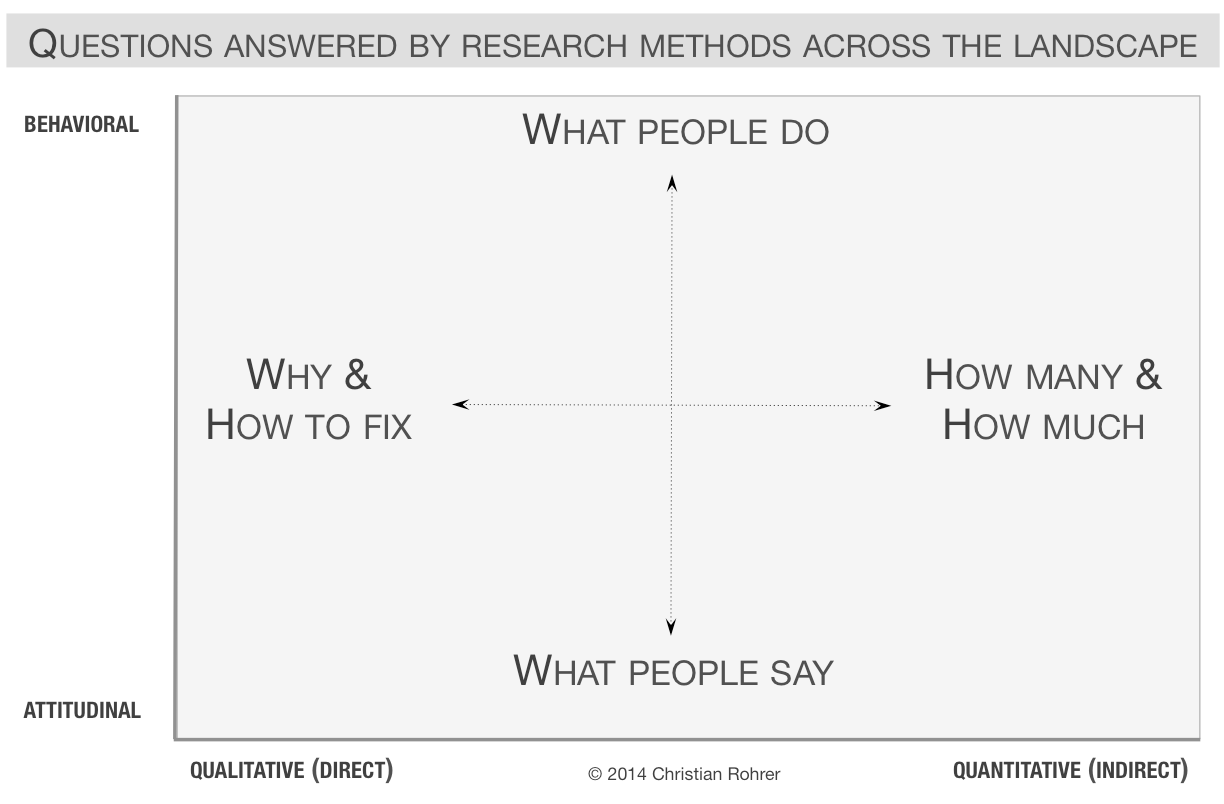

Metrics are the quantitative data surrounding usability, as opposed to more qualitative research like the verbal responses and written responses we described above. When you combine qualitative with quantitative data gathering, you get an idea of why and how to fix problems, as well as how many usability issues need to be resolved.

You can see how this plays out in the diagram below, taken from a piece on quantitative versus qualitative data.

In a nutshell, usability metrics are the statistics measuring a user’s performance on a given set of tasks. Usability.gov lists some of the most helpful focuses for quantitative data gathering:

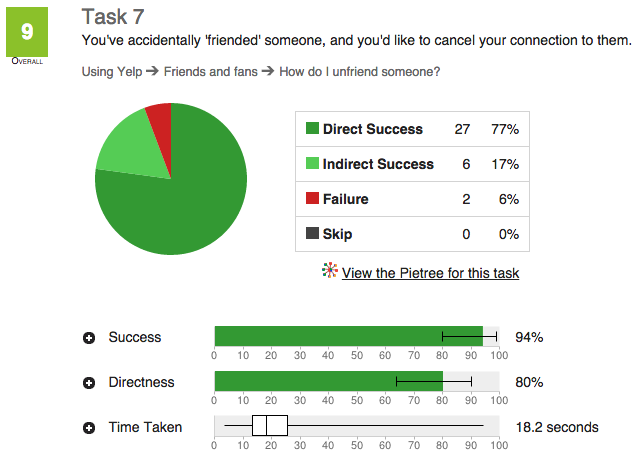

- Success Rate — In a given scenario, was the user able to complete the assigned task? When we tested 35 users for a redesign of the Yelp website, this was one of the most important bottom-line metrics.

- Error Rate — Which errors tripped up users most? These can be divided into two types: critical and noncritical. Critical errors will prevent a user from completing a task, while noncritical errors will simply lower the efficiency with which they complete it.

- Time to Completion — How much time did it take the user to complete the task? This can be particularly useful when determining how your product compares with your competitors (if you’re testing both).

- Subjective Measures — Numerically rank a user’s self-determined satisfaction, ease-of-use, availability of information, etc. Surprisingly, you can actually quantify qualitative feedback by boiling this down to the Single Ease Question.
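To make the four measures above concrete, here is a minimal sketch of how they might be computed from raw session records. Everything in it is an assumption for illustration: the `Session` record layout, the `summarize` helper, and the sample numbers are invented, and the Single Ease Question is taken to be a rating from 1 (very difficult) to 7 (very easy).

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    """One participant's attempt at one task (hypothetical record layout)."""
    completed: bool          # did the participant finish the task?
    critical_errors: int     # errors that blocked completion
    noncritical_errors: int  # errors that only slowed the participant down
    seconds: float           # time spent on the task
    seq: int                 # Single Ease Question rating, 1 to 7


def summarize(sessions):
    """Return success rate, error rates, time to completion, and mean SEQ."""
    n = len(sessions)
    if n == 0:
        raise ValueError("need at least one session")
    completed = [s for s in sessions if s.completed]
    return {
        "success_rate": len(completed) / n,
        "critical_errors_per_session": sum(s.critical_errors for s in sessions) / n,
        "noncritical_errors_per_session": sum(s.noncritical_errors for s in sessions) / n,
        # Averaging time over successful attempts only is one possible choice.
        "mean_seconds_when_completed": (
            mean(s.seconds for s in completed) if completed else None
        ),
        "mean_seq": mean(s.seq for s in sessions),
    }


# Made-up sessions, for illustration only.
sessions = [
    Session(completed=True, critical_errors=0, noncritical_errors=1, seconds=42.0, seq=6),
    Session(completed=True, critical_errors=0, noncritical_errors=0, seconds=35.0, seq=7),
    Session(completed=False, critical_errors=1, noncritical_errors=2, seconds=90.0, seq=2),
    Session(completed=True, critical_errors=0, noncritical_errors=1, seconds=55.0, seq=5),
]

summary = summarize(sessions)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Keeping critical and noncritical errors in separate fields mirrors the distinction above: one explains failed tasks, the other explains slow ones.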

In a general overview of metrics, Jakob Nielsen, co-founder of the Nielsen Norman Group and usability expert, states plainly, “It is easy to specify usability metrics, but hard to collect them.” Because gathering usability metrics can be difficult, time-consuming, and/or expensive, a lot of small-budget companies shy away from them even though they could prove useful.

So, are metrics a worthwhile investment for you? Nielsen lists several situations in particular where metrics are the most useful:

- Tracking progress between releases — Did your newest update hit the mark? The metrics will show you if you’ve solved your past problems or still need to tweak your design.

- Assessing competitive position — Metrics are an ideal way to determine precisely how you stack up next to your competition. The numbers don’t lie.

- Stop/Go decision before launch — Is your product ready for launch? Having a numeric goal in mind will let you know exactly when you’re ready to release.
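A stop/go check against numeric goals can be written down explicitly so the whole team agrees on it before testing starts. The sketch below is a hypothetical illustration: the metric names, the thresholds, and the `launch_decision` helper are all assumptions, not values recommended by Nielsen or anyone else.

```python
def launch_decision(measured, targets):
    """Compare measured metrics with numeric goals.

    targets maps a metric name to (direction, threshold), where direction is
    "min" (measured value must be at least the threshold) or "max" (measured
    value must be at most the threshold). Returns ("go" or "stop", misses).
    """
    misses = []
    for name, (direction, threshold) in targets.items():
        value = measured[name]
        if direction == "min":
            ok = value >= threshold
        elif direction == "max":
            ok = value <= threshold
        else:
            raise ValueError(f"unknown direction for {name}: {direction}")
        if not ok:
            misses.append(name)
    return ("go" if not misses else "stop", misses)


# Made-up goals and results, for illustration only.
targets = {
    "success_rate": ("min", 0.80),   # at least 80% of participants finish
    "mean_seconds": ("max", 60.0),   # in a minute or less on average
}
measured = {"success_rate": 0.75, "mean_seconds": 44.0}

decision, misses = launch_decision(measured, targets)
print(decision, misses)
```

The same comparison works for tracking progress between releases: run it on each release's numbers and watch the list of missed goals shrink.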

Usability metrics are always helpful, but can be a costly investment since you need to test more people for statistical significance (which the Guide to Usability Testing explores in more detail by looking at quantitative and qualitative methods).
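One way to see why sample size matters is to put a confidence interval around a completion rate. The sketch below uses the adjusted Wald (Agresti-Coull) interval, which is a technique chosen here for illustration rather than one named in the sources above; the participant counts are made up, and `adjusted_wald_interval` is a hypothetical helper.

```python
import math


def adjusted_wald_interval(successes, n, z=1.96):
    """Approximate confidence interval for a completion rate.

    Uses the adjusted Wald (Agresti-Coull) method; z=1.96 corresponds to
    roughly 95% confidence. Returns (low, high), clipped to the range 0..1.
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    n_adj = n + z * z
    p_adj = (successes + z * z / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# The same observed 80% completion rate with two made-up sample sizes.
small = adjusted_wald_interval(successes=4, n=5)
large = adjusted_wald_interval(successes=32, n=40)

for label, (low, high) in (("5 participants", small), ("40 participants", large)):
    print(f"{label}: {low:.0%} to {high:.0%}")
```

With five participants the interval is wide enough to be compatible with both a poor and an excellent design; with forty it is noticeably narrower, which is the extra certainty the additional test sessions buy.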

If you plan on gathering quantitative data, make sure you also collect qualitative data so you have a system of checks and balances; otherwise, you run the risk of numbers fetishism. You can see how this risk could play out in the real world in a clever explanation of margarine causing divorce by Hannah Alvarez of UserTesting.

The Takeaway

In some ways, the planning phase is the most important in usability research. When it’s done correctly, with patience and thought, your data will be more accurate and more useful.

But if the initial planning is glossed over — or even ignored — your data will suffer and call into question the value of the whole endeavor. Take to heart the items we’ve discussed, and don’t move forward until you’re completely confident in your objectives and how to achieve them.

For explanations and practical tips for 20 different types of usability tests, check out the free 109-page Guide to Usability Testing. Best practices are included from companies like Apple, Buffer, DirecTV, and others.

Frequently Asked Questions (FAQs) about Usability Testing Goals

What are the key elements to consider when setting usability testing goals?

When setting usability testing goals, it’s crucial to consider the purpose of the test, the target audience, and the specific tasks or features you want to evaluate. The goals should be specific, measurable, achievable, relevant, and time-bound (SMART). They should also align with the overall objectives of the product or service being tested.

How can I ensure my usability testing goals are realistic and achievable?

To ensure your usability testing goals are realistic and achievable, it’s important to have a clear understanding of your product’s capabilities and limitations. This involves conducting a thorough analysis of your product, including its features, functionalities, and potential areas of improvement. Additionally, consider the resources available for the test, including time, budget, and personnel.

What is the role of the target audience in setting usability testing goals?

The target audience plays a crucial role in setting usability testing goals. Understanding their needs, preferences, and behaviors can help you define what aspects of the product to focus on during the test. It can also guide you in creating realistic user scenarios and tasks for the test.

How can I measure the success of my usability testing goals?

The success of usability testing goals can be measured using various metrics, such as task completion rates, error rates, satisfaction scores, and time taken to complete tasks. These metrics provide quantitative data that can be used to evaluate the usability of the product and identify areas for improvement.

How can I align my usability testing goals with the overall objectives of the product?

Aligning usability testing goals with the overall objectives of the product involves understanding the product’s purpose, its intended users, and the problems it aims to solve. The testing goals should reflect these aspects and aim to evaluate whether the product effectively meets its objectives.

What are some common mistakes to avoid when setting usability testing goals?

Common mistakes to avoid when setting usability testing goals include setting vague or overly ambitious goals, neglecting to consider the target audience, and failing to align the testing goals with the product’s overall objectives. It’s also important to avoid focusing solely on negative feedback and overlooking positive user experiences.

How often should I revisit and revise my usability testing goals?

Usability testing goals should be revisited and revised regularly to ensure they remain relevant and effective. This could be after each testing cycle, or whenever significant changes are made to the product or its objectives.

How can I involve my team in setting usability testing goals?

Involving your team in setting usability testing goals can be done through brainstorming sessions, workshops, or regular meetings. This allows for a diverse range of perspectives and ideas, which can lead to more comprehensive and effective testing goals.

Can usability testing goals change during the testing process?

Yes, usability testing goals can change during the testing process. This could be due to new insights gained from the tests, changes in the product, or changes in the target audience or market conditions. It’s important to remain flexible and adaptable throughout the testing process.

How can I ensure my usability testing goals are focused and specific?

To ensure your usability testing goals are focused and specific, it’s important to clearly define what you want to achieve from the test. This could involve identifying specific tasks or features to evaluate, defining the target audience, and setting measurable success criteria.

Jerry Cao is a UX content strategist at UXPin, the wireframing and prototyping app. His Interaction Design Best Practices: Volume 1 ebook contains visual case studies of IxD from top companies like Google, Yahoo, AirBnB, and 30 others.

Published in Accessibility, Bootstrap, Design, Design & UX, HTML & CSS, Patterns & Practices, UX · February 12, 2018