Key Takeaways

- The AdWords Campaign Experiments (ACE) tool allows for low-risk testing of different variables in your PPC campaign, such as bids, keywords, and ad groups, to improve metrics like click-through and conversion rates.

- A/B testing with the ACE tool can be set up by selecting an existing campaign, configuring its settings, and deciding on a 50:50 split or a smaller test group depending on the size of your data set.

- Monitor A/B tests a couple of times a week for at least a few weeks so that statistically significant differences can emerge, then act on the results by applying successful experiment settings to the rest of the campaign.

- The ACE tool can be used to test a variety of elements in your AdWords campaign, from increasing bids and trying out new keywords, to experimenting with different ad copy and even gauging demand for a new product or service.

Ever been unable to decide between two different sets of ad copy? Or two different landing pages?

You’re probably aware of the benefits of A/B testing in activities like email campaigns, but did you know that you can also have your PPC cake and eat it too with the Google AdWords A/B testing tool?

Formally known as AdWords Campaign Experiments, or ACE, this tool is one of the most sure-fire ways to improve key metrics like click-through and conversion rates.

By using the ACE tool to run your A/B tests, you’re putting yourself in a win-win situation where you can test even the most radical ideas in a low-risk environment.

And since A/B testing for AdWords is relatively unused, mastering this tool will also put you a step ahead of the competition.

Sold? Good. Now let’s learn how to set up A/B tests for Google AdWords. To do this, you’ll need some basic knowledge of AdWords and an account with a few campaigns already set up.

Getting started

As with any kind of experiment, when you’re A/B testing, it’s imperative to define clear goals before you begin. The ACE tool allows you to change three different variables in your test: bids, keywords and ad groups. Give some thought to what exactly you are looking to compare, and what you aim to achieve by doing so.

Perhaps you are looking to increase conversions and want to see if increasing your bid can have this effect. Or maybe you’d like to try out a new keyword that is too risky to bet heavily on. Perhaps all you want to do is decide between two sets of very similar ad copy.

Not sure where to start? I’m including a handy list of things to test at the bottom of this article.

Configuring your test campaign

The first step is to select an existing campaign you want to test. To enable A/B testing on this campaign, you’ll need to configure its settings properly.

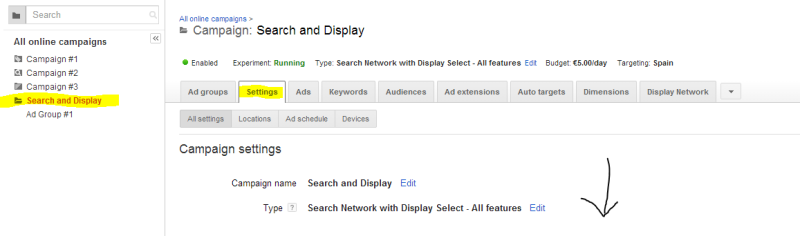

Go to the Settings tab, and scroll down to the Experiments section at the bottom of the page. Fill in any information you’d like about the experiments and continue. Bear in mind that this will need to be a search-and-display type campaign with all features enabled in order for experiments to be available. Otherwise, you simply won’t be able to access the option.

Save your changes before moving on to the next step.

50:50 A/B split or a smaller test group?

How you divide your impressions really depends on the size of your data set. If you’re lucky enough to have a high-traffic campaign with lots of conversions, you might choose to only section off a small segment of your campaign for testing to reduce risk.

On the other hand, if you’re working from a smaller data set, or if you are working with two options that have equal risk, such as nearly identical sets of ad copy, you may find it difficult to draw conclusions from anything but a 50:50 split.

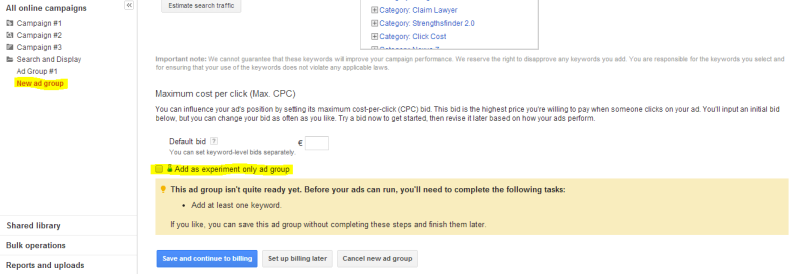

Head over to the Ad Groups tab in your campaign, and include the same parameters as you would if you were creating a normal ad group for your campaign: title, keywords, ad copy, default bid and so on. The only difference here is that you will need to make sure you check the box which says “Add as experiment only ad group”.

Voilà! You’ll now see that your ad is marked by Google’s experiment symbol when it is shown on your dashboard.

Toggle this symbol to decide which parts of the ad group you will set as the control, and which you want to experiment with.

Monitoring your experiment

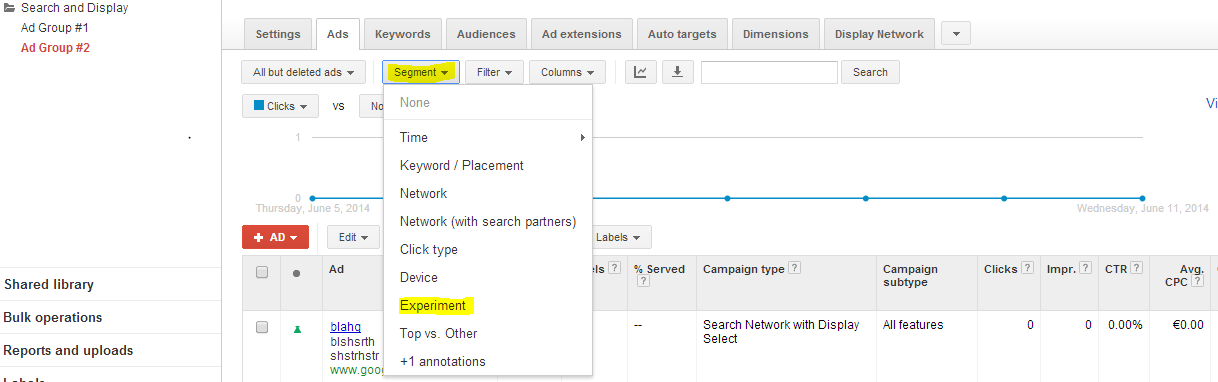

Once your test has been running for a couple of days, you’ll want to start monitoring the differences between your experiment and control group. Go to Campaign > Segments > Experiments, and you’ll see both groups appear.

Statistically significant differences are marked with gray arrows. One arrow means Google is 95 percent certain the difference in results obtained is real and not due to chance. Two arrows mean 99 percent certainty, and three mean 99.9 percent certainty. (Presumably, certainty is calculated using standard deviation from the mean or something similar.)
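Google doesn't spell out the calculation behind the arrows, so the following is only a sketch of one common approach: a two-sided two-proportion z-test on click-through rate, with the 95, 99 and 99.9 percent thresholds mapped to one, two and three arrows. The function name and sample figures are hypothetical, and ACE's own numbers may well differ.

```python
from math import sqrt
from statistics import NormalDist


def significance_arrows(control_clicks, control_impr, exp_clicks, exp_impr):
    """Return 0-3 'arrows' for the CTR difference between control and experiment.

    Assumption: a pooled two-proportion z-test, which is a guess at the kind
    of test involved and not Google's documented method.
    """
    ctr_control = control_clicks / control_impr
    ctr_exp = exp_clicks / exp_impr
    pooled = (control_clicks + exp_clicks) / (control_impr + exp_impr)
    std_err = sqrt(pooled * (1 - pooled) * (1 / control_impr + 1 / exp_impr))
    if std_err == 0:
        return 0  # no clicks (or all clicks) in both groups: nothing to compare
    z = (ctr_exp - ctr_control) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    if p_value < 0.001:
        return 3  # 99.9 percent certainty
    if p_value < 0.01:
        return 2  # 99 percent certainty
    if p_value < 0.05:
        return 1  # 95 percent certainty
    return 0


if __name__ == "__main__":
    # Hypothetical figures: 100 vs 135 clicks on 10,000 impressions each.
    print(significance_arrows(100, 10_000, 135, 10_000))
```

A result of 0 doesn't mean the two groups perform the same. It only means there isn't enough data yet to tell them apart, which is another reason to let the experiment run.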

Suffering from compulsive account-checking at this point? We’ve all been there. But if you find you’re spending your days poised over the refresh button, you should bear in mind that fluctuating results can give you false impressions. Try to refrain from checking your experiments more than a couple of times a week, and leave them to brew for at least a couple of weeks (depending on the size of your data set, a couple of months is probably better) before drawing any conclusions. This is especially true if you’re running campaigns over unpredictable periods like holidays.

If you find that it’s hard to resist checking your account, set up a scheduled email report so you can receive the information you need on the schedule you choose and then leave your account alone. To do this, click on the Download report button and select the frequency you require.

Acting on your results

Has your experiment been a success? Congratulations. Now it’s time to apply the experiment settings to the rest of your campaign.

Go back to the Settings menu for your campaign, and scroll down to where you originally enabled experimentation. You’ll see that you are presented with three options: “Stop running experiment”, “Apply: Launch changes fully” and “Delete: Remove changes”.

Bear in mind that applying changes will remove your original settings permanently. If you’re not quite sure whether or not it would be wise to make the switch, it could be worth running a follow-up experiment with parameters set to 90 percent experiment and 10 percent control to make sure.

Ideas for AdWords A/B tests

Ready to get cracking with the ACE tool? As promised, I’m going to end this article with a list of ideas to try out. These are just a few suggestions–the possibilities are endless.

Bids

- Does increasing your bid lead to more conversions? If so, is this worth the cost in terms of ROI?

- Does bidding aggressively on your most competitive keywords increase traffic quality? Or is it just a waste of money?

- What happens when you place high bids on a really specific keyword?
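To answer the ROI question for a bid test, you can compare cost per conversion and return for the two groups. This is a minimal sketch: the function name, the field names and all of the figures are hypothetical, and it assumes you know the average value of a conversion.

```python
def summarize(clicks, avg_cpc, conversions, value_per_conversion):
    """Cost, cost per conversion and ROI for one group of a bid experiment."""
    cost = clicks * avg_cpc
    revenue = conversions * value_per_conversion
    return {
        "cost": cost,
        "cost_per_conversion": cost / conversions if conversions else float("inf"),
        "roi": (revenue - cost) / cost,  # 0.6 means a 60 percent return on spend
    }


if __name__ == "__main__":
    # Hypothetical results: the higher bid wins more clicks and conversions,
    # but each click costs more.
    control = summarize(clicks=1000, avg_cpc=0.50, conversions=20,
                        value_per_conversion=40)
    higher_bid = summarize(clicks=1400, avg_cpc=0.70, conversions=30,
                           value_per_conversion=40)
    for name, result in (("control", control), ("higher bid", higher_bid)):
        print(f"{name}: cost per conversion {result['cost_per_conversion']:.2f}, "
              f"ROI {result['roi']:.0%}")
```

More conversions at a higher bid is only a win if the return holds up, so check both numbers before applying the change.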

Keywords

- What happens when you target that near-synonym that you’re not quite sure will work? If you’ve never had the guts to try, the ACE tool lets you experiment safely.

- Have you always gone for the long tail? Try a really short, obvious keyword to see what happens.

- Likewise, this is a good opportunity to try a more specific keyword if you normally use relatively generic ones.

Ad Copy

- It’s said that calls to action which use the word “get” are more powerful. Test this theory!

- Google recommends capitalizing the first letter of each word in order to make copy stand out. Does this really have a positive impact on CTR? Time to find out.

- If you’ve never tried dynamically updated text, why not do so now?

Bonus test

- Has your company been thinking about launching a new product or service? If you already have distribution networks set up, using the ACE tool to measure demand for the new product or service in comparison to existing ones is a great way to do some quick market research. Just make sure you have a solid success/failure plan ready!

Easy (and safe) experiments

Google’s ACE tool is easy to use–but more importantly, it gets results.

As always with new techniques, the hardest part is breaking out of your comfort zone and trying them out for the very first time.

The mark of a successful AdWords practitioner, however, is their ability to be at the forefront of new developments by making use of any new tools available.

Have you tried A/B testing with the ACE tool? How’d it go? Let us know in the comments.

Frequently Asked Questions (FAQs) about Improving Click Rates with AdWords A/B Testing

What is the significance of A/B testing in Google AdWords?

A/B testing, also known as split testing, is a method of comparing two versions of an ad to determine which one performs better. It’s a way to test different variables in your ads to see which version generates more clicks or conversions. This can help you optimize your ads for better performance, ultimately leading to higher return on investment (ROI).

How can I set up an A/B test in Google AdWords?

Setting up an A/B test in Google AdWords involves creating two versions of an ad with one key difference, such as the headline or call to action. These ads are then shown to similar audiences, and the performance of each ad is tracked to determine which version is more effective.

What factors should I consider when conducting an A/B test?

When conducting an A/B test, it’s important to only test one variable at a time to ensure that any differences in performance can be attributed to that specific change. You should also make sure to run the test for a sufficient amount of time to collect enough data for a reliable result.

How can I analyze the results of an A/B test?

The results of an A/B test can be analyzed by comparing the click-through rates, conversion rates, and other key performance indicators (KPIs) of the two versions of the ad. The version that performs better on these metrics is the one that should be used moving forward.

Can I conduct A/B testing on all types of Google Ads?

Yes, A/B testing can be conducted on all types of Google Ads, including search ads, display ads, and video ads. However, the process and variables to test may vary depending on the type of ad.

What are some common mistakes to avoid when conducting A/B testing?

Some common mistakes to avoid when conducting A/B testing include testing too many variables at once, not running the test for a sufficient amount of time, and not using a large enough sample size. These mistakes can lead to unreliable results.

How can A/B testing improve my click-through rate (CTR)?

A/B testing can help improve your CTR by allowing you to test different elements of your ads to see which ones resonate most with your audience. By optimizing your ads based on the results of these tests, you can increase the likelihood that users will click on your ads.

Can A/B testing help reduce my cost per click (CPC)?

Yes, by improving the relevance and effectiveness of your ads through A/B testing, you can potentially reduce your CPC. This is because Google rewards advertisers who create high-quality, relevant ads with lower costs per click.

How often should I conduct A/B testing?

The frequency of A/B testing can depend on various factors, such as the size of your audience and the performance of your ads. However, it’s generally a good practice to conduct A/B testing on a regular basis to continuously optimize your ads.

Can I use A/B testing for other aspects of my digital marketing strategy?

Absolutely! A/B testing is a versatile tool that can be used to optimize various aspects of your digital marketing strategy, including your website design, email marketing campaigns, and social media ads.