Making the Most of Google Webmaster Tools

Key Takeaways

- Google and Bing offer webmaster dashboards that provide insights into search engine activity and can identify critical crawling, indexing, and ranking issues with your site(s).

- The Google Webmaster Tools dashboard is broken down into four sections: Your Site on the Web, Diagnostics, Site Configuration, and Labs, each providing specific data and insights.

- Registering your site with Google Webmaster Tools can help you understand how both people and search engines find and navigate to your content, and monitor issues that the search engine may encounter when crawling your site.

- Google Webmaster Tools also allows you to inform Google of your sitemap location, monitor the robots.txt file for your site, adjust the suggested crawl rate, and request URL removal from the index.

- Google Webmaster Tools’ Labs section offers experimental new features, such as reporting on how a specific page is fetched by Google’s spider or graphing the time a page takes to load.

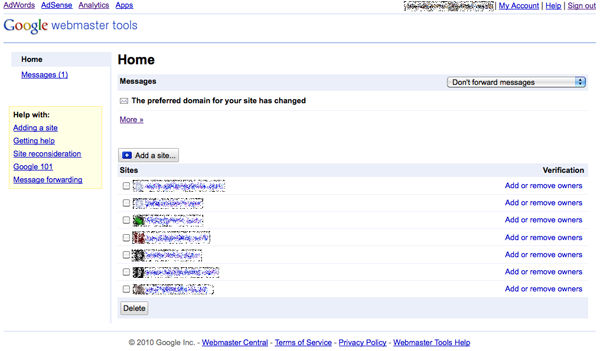

This article is an excerpt from our latest release, The SEO Business Guide. The entire chapter from which the article is drawn, along with two other chapters, is available as a free PDF download. It’s well worth checking out if you’re interested in learning more about conducting effective and best-practice SEO.

Both Google and Bing offer a webmaster dashboard that gives insights into activity by the search engine on any site that has been registered and verified via the dashboard.[1]

These dashboards present a number of tools and insights into data that cannot be gleaned by any other method. They provide the only way to gain an understanding of how the search engines “see” your site, and are the only way to identify critical crawling, indexing, and ranking issues with your site(s).

It’s important to note that the search engines make no claim to giving full, complete, or accurate data on what they report. You should only use these tools with this in mind, and in conjunction with the other methods you use to track search and site performance, such as site analytics and the SERPs (Search Engine Results Pages) themselves.

A significant benefit for those who manage more than one site is that you can add multiple websites to each dashboard; this allows you to remain signed in and switch between your portfolio of sites to view the data. A Google Webmaster Tools account with an assortment of sites is shown in Figure 1, “The Google Webmaster Tools home screen”.

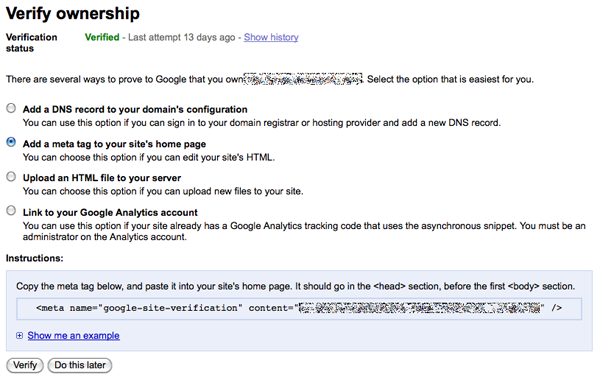

Before you can even start to look at the data the search engines have about your site, you must first register the site with them and verify ownership. This is normally done by either uploading a small file to the root of your web server, or adding a snippet of code on your home page.

Google also allows you to verify a site by adding a DNS (Domain Name System) record to the domain configuration, or by linking with a Google Analytics administrator account. You can see all the available options for verification in Figure 2, “Webmaster Tools verification”.

Google’s Webmaster Tools can be found at https://www.google.com/webmasters/tools/, and Bing’s at http://www.bing.com/webmaster/.
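To illustrate the home-page snippet method, here is a minimal Python sketch that checks whether a page's HTML contains a verification tag. It assumes the snippet is a `<meta>` tag named `google-site-verification`; the token, the sample markup, and the helper name `find_verification_token` are made up for illustration.

```python
from html.parser import HTMLParser


class _MetaCollector(HTMLParser):
    """Collects the content of every <meta name="..."> tag it sees."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = attributes.get("name")
        if name:
            self.meta[name.lower()] = attributes.get("content") or ""


def find_verification_token(html, tag_name="google-site-verification"):
    """Return the verification token found in the HTML, or None if absent."""
    collector = _MetaCollector()
    collector.feed(html)
    return collector.meta.get(tag_name)


# Made-up home page markup with a placeholder token (not a real one).
sample_page = """
<html><head>
  <title>Example</title>
  <meta name="google-site-verification" content="PLACEHOLDER-TOKEN" />
</head><body></body></html>
"""

print(find_verification_token(sample_page))
```

A check like this could run after each deployment, since a template change that drops the tag may cause the site to lose its verified status.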

The primary task of webmaster tools is to provide information on crawling and indexing. One aspect of search that they provide little information on is ranking.

Since Google’s Webmaster Tools is the reigning leader in this segment, we’ll concentrate on its tool set for the purposes of this explanation, but Bing’s dashboard offers very similar insights.

So, what’s gained by registering your site with webmaster tools? Google’s Webmaster Tools dashboard is broken down into four sections, so we’ll summarize what each of them contains.

The first section, Your Site on the Web, provides data relating to how both people and search engines find and navigate to your content (links and keywords).

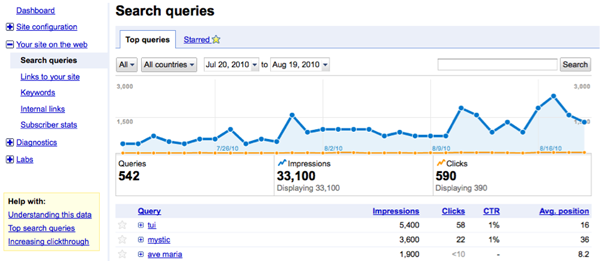

- Search queries

  This lists the most popular searches for which your site appeared in Google results, as well as the impressions and the clicks that were made from those results to your site. Recent improvements in this section include the ability to see this represented in a graph over time, with the average position in the rankings, and specific information on each keyword or phrase, as shown in Figure 3, “Search queries graphed over time”.

  How to use: Use this alongside your analytics metrics and AdWords (if applicable) to identify which terms are driving the most traffic to your site. It will show you whether you’re targeting the right terms, and the CTR (clickthrough rate) will indicate whether your result is performing as it should.

- Links to your site

  This shows which pages have the most backlinks, and allows you to view the sites/pages that contain the link and what anchor text was used.

  How to use: While most backlinks will be to your home page, you might be unaware of what terms other sites are using to link to you. This will have a direct impact on what terms your site will rank for; identifying the volume of backlinks you have for each of your pages will give you the ability to measure your link-building efforts.

- Keywords

  This lists the most common keywords found when crawling your site, and the frequency of those terms. The data indicates which keywords Google believes your site to be about, based on their presence in your content.

  How to use: This will reveal if your site has been compromised (if a spam word is showing) or if any words in your content are gaining unwanted prominence. Conversely, if particular words you’re targeting aren’t showing as expected, then you can take steps to increase their prominence.

- Internal links

  This shows how you link to your own content, by counting and displaying which of your pages link to other pages within your site.

  How to use: Deeper content is often harder for search engines to find. If the targeted page is showing a low number of internal links, you can take steps to add more.
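The CTR mentioned under Search queries is simply clicks divided by impressions. As a rough sketch, the Python below computes it for a few queries and ranks them; the query data and the function names are invented for illustration and are not an export format from the dashboard.

```python
def clickthrough_rate(clicks, impressions):
    """CTR as a fraction: clicks / impressions (0.0 when there are no impressions)."""
    if impressions == 0:
        return 0.0
    return clicks / impressions


def rank_by_ctr(rows):
    """Return (query, ctr) pairs sorted from highest to lowest CTR."""
    scored = [
        (query, clickthrough_rate(clicks, impressions))
        for query, impressions, clicks in rows
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Made-up rows in the shape (query, impressions, clicks).
rows = [
    ("widget repair", 400, 4),
    ("blue widgets", 1000, 50),
    ("cheap widgets", 0, 0),
]

for query, ctr in rank_by_ctr(rows):
    print(f"{query}: {ctr:.1%}")
```

A query with many impressions but a low CTR is usually the first place to look: the page is being shown, but its title or description is not earning the click.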

The Diagnostics section outlines issues that the search engine may have encountered when crawling your site.

- Crawl errors

  This shows which pages caused crawling issues, from page not found to server errors, as well as which page linked to the error-causing page and when the error occurred.

  How to use: Server errors such as 500 and 503 should be tested immediately to see if they’re still occurring, as they normally only show when your server is unavailable or having issues. 404 (page not found) errors are often caused by the page’s address having been changed without any redirection applied. You should be aiming to have almost no 404 results; in those cases where they’re inevitable (for example, external sites might, for reasons unknown, link to a page that doesn’t exist), your 404 page should be designed to ensure that visitors stay on your site. You can do this by including elements such as a site search or links to popular content.

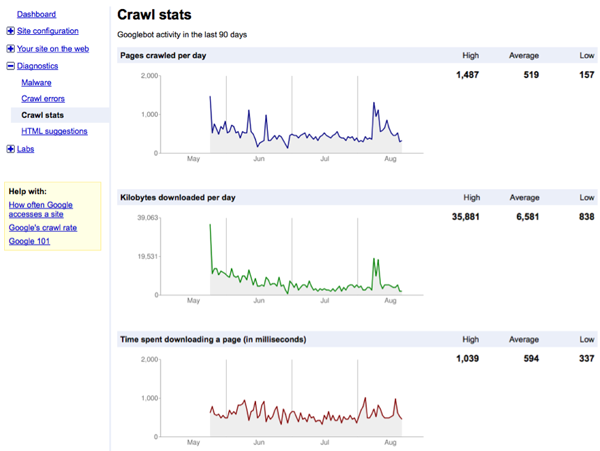

- Crawl stats

  This displays three handy graphs, shown in Figure 4, “Crawling statistics”: how many pages were crawled per day, the amount of data downloaded, and the time spent downloading.

  How to use: It’s important to monitor these graphs, as they indicate activity over time by the search engines. Any sudden dips in the number of pages crawled or the amount of data being downloaded can indicate that recent changes to your site have had a negative impact. The time spent downloading could point to server performance issues that may need to be rectified.

- HTML suggestions

  This lists issues found relating to your meta description and title tags, highlighting those that are missing or causing duplicate content issues.

  How to use: If you have reports of a large number of duplicate titles and/or meta descriptions, it means that Google is having trouble establishing a clear picture of what your pages are about. You also run the risk of search engines viewing your pages as duplicate content and filtering them from the search results. You should be using this tool to identify which pages need to have their titles and descriptions rewritten.
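The duplicate-title problem described under HTML suggestions can also be checked on your own side before Google reports it. The sketch below groups pages by title and returns the titles shared by more than one URL. The page data is invented, and normalizing case and whitespace is an assumption about what counts as a duplicate, not Google's documented rule.

```python
from collections import defaultdict


def find_duplicate_titles(pages):
    """Group URLs by normalized title; keep only titles used by more than one URL."""
    urls_by_title = defaultdict(list)
    for url, title in pages.items():
        # Collapse runs of whitespace and ignore case before comparing.
        normalized = " ".join(title.split()).lower()
        urls_by_title[normalized].append(url)
    return {title: urls for title, urls in urls_by_title.items() if len(urls) > 1}


# Made-up mapping of URL path to <title> text.
pages = {
    "/": "Acme Widgets",
    "/about": "About Us | Acme Widgets",
    "/products": "Acme  widgets",
    "/contact": "Contact | Acme Widgets",
}

duplicates = find_duplicate_titles(pages)
for title, urls in duplicates.items():
    print(f"{title!r} is shared by: {', '.join(urls)}")
```

The same grouping works for meta descriptions: pass a mapping of URL to description text instead of URL to title.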

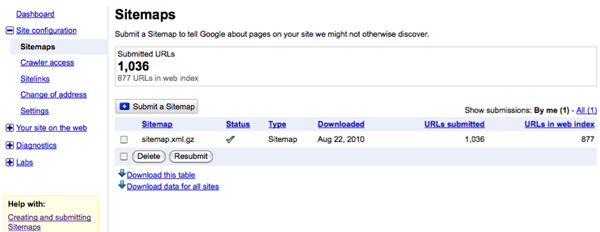

Another section of the webmaster tools is Site configuration. This lets you inform Google of where your sitemap is located, monitor the robots.txt file for your site, have some control over how sitelinks (if applicable) are displayed, and also adjust the suggested crawl rate (use this last one with caution).

Probably the biggest benefits of this section are the Sitemap and Crawler access functions.

By submitting a sitemap (be it in XML or text-file format, or using RSS/Atom feeds), you gain the clearest picture of how much of your content has been indexed; the dashboard reports an actual figure of indexed URLs that you can compare with the number submitted in your sitemap, as shown in Figure 5, “Google Webmaster Tools’ Sitemaps functionality”. The downside is that Google Webmaster Tools is unable to tell you which URLs are indexed and which aren’t.
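For readers who have not built a sitemap before, the following sketch generates a minimal XML sitemap and computes the indexed-to-submitted ratio discussed above. It uses the standard sitemaps.org namespace; the example URLs, the counts, and the function names are assumptions for illustration only.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


def indexed_ratio(submitted, indexed):
    """Share of submitted URLs reported as indexed (0.0 if nothing was submitted)."""
    if submitted == 0:
        return 0.0
    return indexed / submitted


# Made-up URLs and counts.
sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/products",
])
print(sitemap_xml)
print(f"Indexed: {indexed_ratio(200, 150):.0%}")
```

A ratio that falls well below the level you normally see is a prompt to look for crawl errors or duplicate content, since the dashboard itself does not say which URLs were left out.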

The Crawler access function enables you to request that a URL be removed from the index, but this does come with some strict requirements.

Hidden in the Settings section of the Site configuration section is the Parameter Handling functionality. This allows you to restrict crawler access to troublesome query-string parameters that may be giving you duplicate content problems, or that provide specific functionality you don’t want the search engines to be interested in; for example, print versions of pages.
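To see why query-string parameters create duplicates, the sketch below strips a set of ignored parameters from URLs and shows that several addresses collapse to one page. This only illustrates the idea behind parameter handling; it is not how Google implements the feature, and the parameter names (`print`, `sessionid`, `sort`) are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that change presentation but not content.
IGNORED_PARAMS = {"print", "sessionid", "sort"}


def canonicalize(url, ignored=IGNORED_PARAMS):
    """Return the URL with ignored query-string parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in ignored
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )


# Made-up URLs that all serve the same article.
variants = [
    "https://www.example.com/article?id=7",
    "https://www.example.com/article?id=7&print=1",
    "https://www.example.com/article?sessionid=abc&id=7",
]

unique_pages = {canonicalize(url) for url in variants}
print(unique_pages)
```

Here three crawlable addresses reduce to a single page, which is the kind of duplication that telling the crawler to ignore a parameter is meant to avoid.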

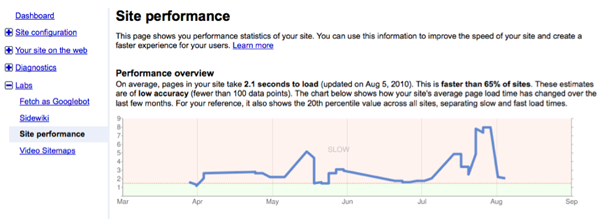

While these are the core staple reports, Google Webmaster Tools also offers a Labs section where experimental new features are available. For instance, you can report on how a specific page is fetched by Google’s spider (known as Googlebot), or graph the time a page takes to load—as Figure 6, “Site performance overview” shows.

Webmaster tools provided by the search engines offer data and insights not possible through any other third-party method, and when tied together with your site’s analytics tools (and any rank-checking and tracking tools you might be using), you’ll have the broadest picture of your site’s performance in the search engines. We have only scratched the surface on this topic; there is a huge amount of information provided by the engines themselves, and both Google and Bing offer a community forum where you can ask questions not covered in the support resources.

This article was drawn from our latest release, The SEO Business Guide. The whole of Chapter 2, Best-practice SEO, which includes the content in this article, is available as a free PDF download along with two other chapters. If you like what you read here, be sure to check it out!

Frequently Asked Questions (FAQs) about Google Webmaster Tools

What are the key features of Google Webmaster Tools?

Google Webmaster Tools, now called Google Search Console, is a free service offered by Google that helps you monitor, maintain, and troubleshoot your site’s presence in Google Search results. It offers a variety of features such as search analytics, URL inspection, and sitemap submission. These tools help you understand how Google views your site, optimize your site’s performance in search results, and identify and fix issues.

How can I set up Google Webmaster Tools for my website?

Setting up Google Webmaster Tools is a straightforward process. First, you need to sign in to your Google account. Then, go to Google Search Console and add a new property (your website). You’ll need to verify your website ownership through several methods like HTML file upload, domain name provider, or Google Analytics. Once verified, you can start using the various tools and reports available.

How can Google Webmaster Tools help improve my website’s SEO?

Google Webmaster Tools provides valuable insights into how your website is performing in search results. It shows which keywords your site is ranking for, how often your site appears in search results, and how many people click through to your site. This data can help you optimize your content and improve your SEO strategy. Additionally, it alerts you to any issues that might affect your site’s visibility in search results, such as crawl errors or penalties.

What is the URL Inspection tool in Google Webmaster Tools?

The URL Inspection tool is a feature in Google Webmaster Tools that provides information about Google’s indexed version of a specific page. It shows the last crawl date, the status of that crawl, any indexing errors, and the canonical URL for that page. This tool can be particularly useful for identifying and fixing any issues that might prevent a page from appearing in search results.

How can I submit a sitemap to Google using Webmaster Tools?

Submitting a sitemap to Google via Webmaster Tools is a simple process. In the Search Console, select your website. On the dashboard, click on ‘Sitemaps’. Enter the URL of your sitemap and click ‘Submit’. Google will then crawl your sitemap and use it to improve its crawling of your site.

What is the role of the ‘Coverage’ report in Google Webmaster Tools?

The ‘Coverage’ report in Google Webmaster Tools shows the indexing state of all URLs that Google has visited, or tried to visit, in your site. This report can help you identify any pages that were not indexed because of errors, and provides information on how to fix these errors.

How can I use Google Webmaster Tools to monitor backlinks?

Google Webmaster Tools provides a ‘Links’ report that shows you all the sites that link to your website. This can help you understand which sites are linking to you, what content they’re linking to, and how your site’s link profile is changing over time.

Can Google Webmaster Tools help me identify security issues on my site?

Yes, Google Webmaster Tools can help identify security issues. The ‘Security & Manual Actions’ section alerts you to any security issues detected by Google, such as hacking or malware. It also shows any manual actions taken by Google against your site, which can affect your site’s visibility in search results.

What is the ‘Mobile Usability’ report in Google Webmaster Tools?

The ‘Mobile Usability’ report in Google Webmaster Tools shows any issues that might affect a user’s experience on mobile devices. It identifies pages that aren’t mobile-friendly and provides recommendations on how to fix these issues.

Can I use Google Webmaster Tools to monitor site speed?

Yes, Google Webmaster Tools provides a ‘Speed’ report that shows how quickly your site loads on both desktop and mobile devices. This report can help you identify any issues that might be slowing down your site and provides recommendations on how to improve site speed.

Mike Hudson is the in-house web search strategy manager (SEO/SEM) for realestate.com.au. From its head office in Melbourne, he oversees the continuing growth of the company across the network of more than a dozen websites operating in Australia, Europe, and Asia. He is an avid amateur photographer, publishing his images on his personal website at http://seriocomic.com/.