Not so long ago, many of us were satisfied handling deployment of our projects by uploading files via FTP to a web server. I was doing it myself until relatively recently and still do on occasion (don’t tell anyone!). At some point in the past few years, demand for the services and features offered by web applications rose, team sizes grew and rapid iteration became the norm. The old methods for deploying became unstable, unreliable and (generally) untrusted.

So was born a new wave of tools, services and workflows designed to simplify the process of deploying complex web applications, along with a plethora of accompanying commercial services. Generally, they offer an integrated toolset for version control, hosting, performance and security at a competitive price.

Platform.sh is a newer player on the market, built by the team at Commerce Guys, who are better known for their Drupal eCommerce solutions. Initially, the service only supported Drupal-based hosting and deployment, but it has rapidly added support for Symfony, WordPress, Zend and ‘pure’ PHP, with Node.js, Python and Ruby coming soon.

It follows the microservice architecture concept and offers a growing number of server, performance and profiling options that you can add to and remove from your application stack with ease.

I tend to find these services make far more sense with a simple example. I will use a Drupal platform as it’s what I’m most familiar with.

Platform.sh has a few requirements that vary for each platform. In Drupal’s case they are:

- An id_rsa public/private key pair

- Git

- Composer

- The Platform.sh CLI

- Drush

I won’t cover installing these here; more details can be found in the Platform.sh documentation section.
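Before going further, it can help to confirm that the command-line tools from the list above are actually on your PATH. The following is a minimal sketch, not an official check: `missing_tools` is a hypothetical helper, and the key location assumes the default OpenSSH path.

```shell
#!/bin/sh
# Print the name of every command in the argument list that is not on PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

# The four command-line tools named in the requirements list.
missing=$(missing_tools git composer platform drush)
if [ -n "$missing" ]; then
  echo "Missing tools:" $missing
else
  echo "All command-line tools found."
fi

# Assumption: the key pair lives at the default OpenSSH location.
[ -f "$HOME/.ssh/id_rsa.pub" ] || echo "No public key at ~/.ssh/id_rsa.pub"
```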

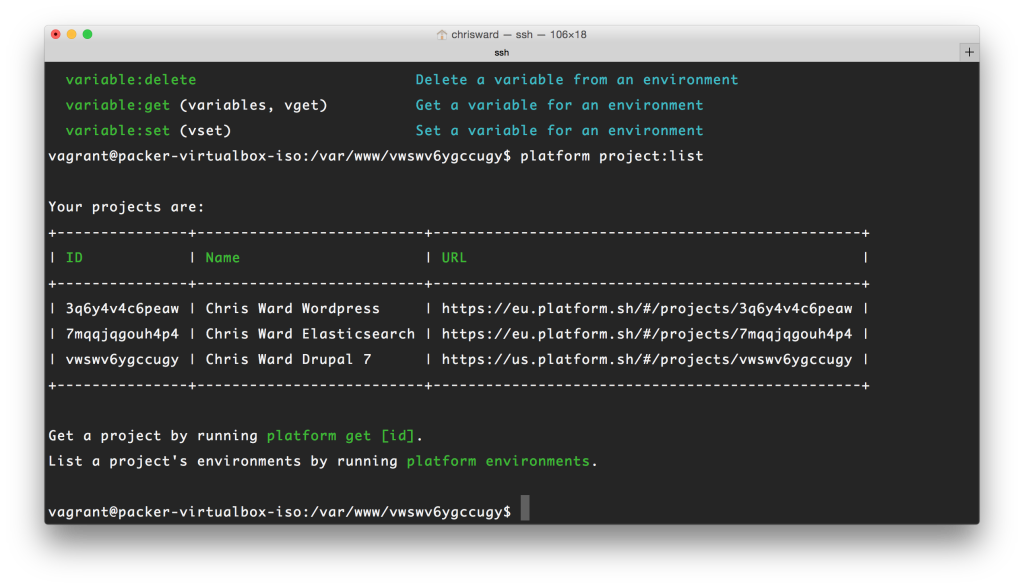

I had a couple of test platforms created for me by the Platform.sh team, and for the sake of this example, we can treat these as my workplace adding me to some new projects I need to work on. I can see these listed by issuing the platform project:list command inside my preferred working directory.

Get a local copy of a platform by using the platform get ID command (the IDs are listed in the table above).

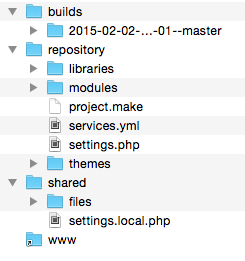

This will download the relevant code base and perform some build tasks. Any extra information you need to know is presented in the terminal window. Once this is complete, you will have the following folder structure:

The repository folder is your code base and here is where you make and commit changes. In Drupal’s case, this is where you will add modules, themes and libraries.

The builds folder contains the builds of your project, that is, the combination of Drupal core plus any changes you make in the repository folder.

The shared folder contains your local settings and files/folders, relevant to just your development copy.

Last is the www symlink, which will always reference the current build. This would be the DOCROOT of your vhost or equivalent file.
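To make the layout concrete, here is a small sketch that checks a directory for the four entries just described. `check_layout` is a hypothetical helper written for this article, not part of the Platform.sh CLI, and it only looks at the names mentioned above.

```shell
# Report whether a directory looks like a local copy of a platform,
# based only on the four entries described above.
check_layout() {
  root="$1"
  rc=0
  for dir in repository builds shared; do
    if [ ! -d "$root/$dir" ]; then
      echo "missing folder: $dir"
      rc=1
    fi
  done
  if [ -L "$root/www" ]; then
    # Show which build the symlink currently references.
    echo "www -> $(readlink "$root/www")"
  else
    echo "missing symlink: www"
    rc=1
  fi
  return "$rc"
}

# Example (the path is a placeholder): check_layout ~/Sites/my-project
```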

Getting your site up and running

Drupal still needs a database to get started, so if necessary we can pull one from the platform we want by issuing:

platform drush sql-dump > d7.sql

Then we can import the database into our local machine and update the credentials in shared/settings.local.php accordingly.
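The import step might look something like the sketch below. It assumes MySQL is your local database server, which the steps above do not specify. `import_dump` is a hypothetical wrapper, and the database name and user in the example are placeholders.

```shell
# Import a SQL dump into a local MySQL database.
# Assumptions: MySQL is the local database server, and the database
# name and user passed in are your own values.
import_dump() {
  dump="$1"; db="$2"; user="$3"
  # Refuse to run against a missing or empty dump file.
  if [ ! -s "$dump" ]; then
    echo "dump file '$dump' is missing or empty" >&2
    return 1
  fi
  # -p prompts for the password rather than putting it on the command line.
  mysql -u "$user" -p "$db" < "$dump"
}

# Example (placeholder names): import_dump d7.sql drupal_local drupal_user
```

The same database name, user and password then go into shared/settings.local.php.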

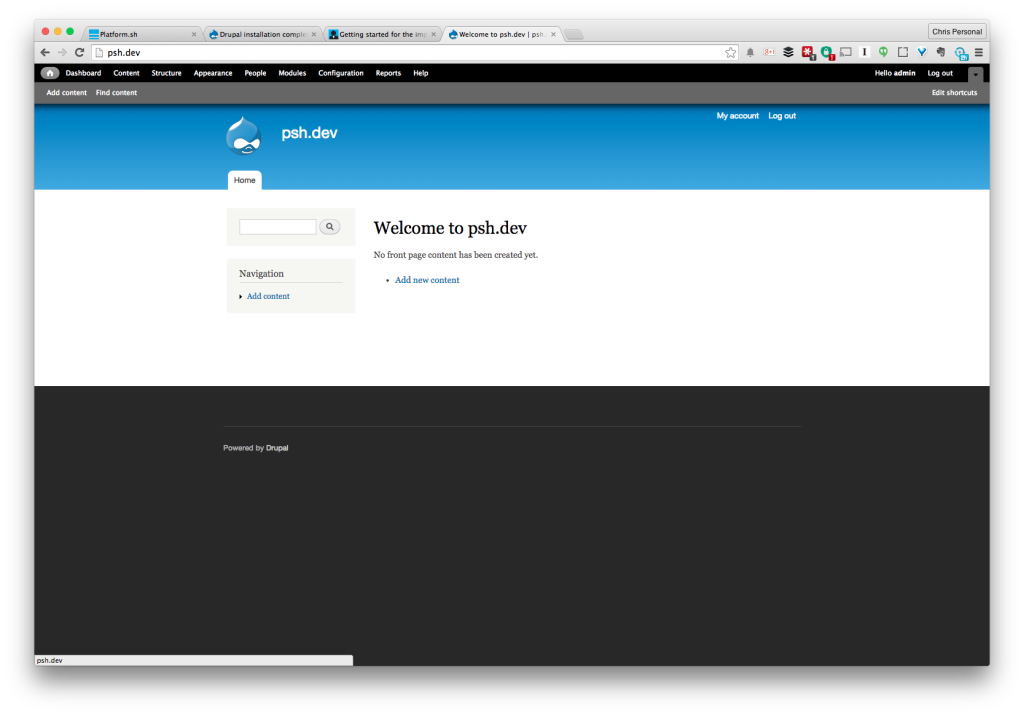

Voila! We’re up and working!

Let’s start developing

Let’s do something simple: add the views and features modules. Platform.sh is using Drush make files, so it’s a different process from what you might be used to. Open the project.make file and add the relevant entry to it. In our case, it’s:

projects[ctools][version] = "1.6"

projects[ctools][subdir] = "contrib"

projects[views][version] = "3.7"

projects[views][subdir] = "contrib"

projects[features][version] = "2.3"

projects[features][subdir] = "contrib"

projects[devel][version] = "1.5"

projects[devel][subdir] = "contrib"

Here, we are setting the projects we want to include, the specific versions and which subfolder of the modules folder we want them placed into.
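If you add projects often, the two lines per module can be scripted. The following is only a sketch: `add_project` is a hypothetical helper, not a Drush or Platform.sh command, and it assumes every project goes into the contrib subfolder as in the entries above.

```shell
# Append a contrib project entry to a Drush make file.
# add_project is a hypothetical helper, not part of Drush or the Platform.sh CLI.
add_project() {
  name="$1"; version="$2"; makefile="${3:-project.make}"
  # Skip projects that already have a version line.
  if grep -q "^projects\[$name\]\[version\]" "$makefile" 2>/dev/null; then
    echo "$name is already listed in $makefile"
    return 0
  fi
  printf 'projects[%s][version] = "%s"\n' "$name" "$version" >> "$makefile"
  printf 'projects[%s][subdir] = "contrib"\n\n' "$name" >> "$makefile"
}

# Example: add_project views 3.7
```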

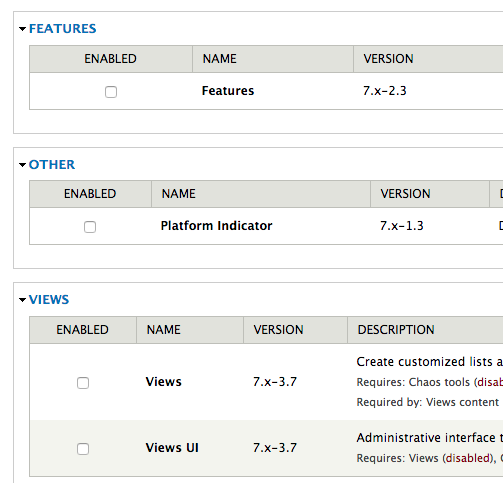

Rebuild the platform with platform build. You should notice the devel, ctools, features and views modules being downloaded, and we can confirm this by making a quick visit to the modules page:

You will notice that each time we issue the build command, a new version of our site is created in the builds folder. This is perfect for quickly reverting to an earlier version of our project in case something goes wrong.
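One way to revert is to repoint the www symlink at an older build by hand. The sketch below assumes that approach and that www is a link relative to the project folder. The CLI may offer its own mechanism, and `use_build` is a hypothetical helper.

```shell
# Point the www symlink at a chosen folder inside builds/.
# Assumption: reverting means repointing www by hand; the build name is
# whatever folder exists under builds/ on your machine.
use_build() {
  project="$1"; build="$2"
  if [ ! -d "$project/builds/$build" ]; then
    echo "no such build: $build" >&2
    return 1
  fi
  # -n replaces the link itself instead of descending into its target.
  (cd "$project" && ln -sfn "builds/$build" www)
}

# Example (placeholder names): use_build ~/Sites/my-project some-earlier-build
```

Bear in mind that the next platform build will most likely point www at the newest build again.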

Now, let’s take a typical Drupal development path: create a view and add it to a feature for sharing amongst our team. Enable all the modules we have just added and generate some dummy content with the Devel Generate feature, either through Drush or the module page.
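Through Drush, those two steps could be sketched as follows. The module list matches the make file entries above plus devel_generate, which ships with Devel. The default of 20 nodes is an arbitrary choice, and `enable_and_generate` is a hypothetical wrapper.

```shell
# DRUSH can be overridden for a dry run, e.g. DRUSH=echo.
DRUSH="${DRUSH:-drush}"

# Enable the modules added in the make file, then create dummy nodes.
enable_and_generate() {
  count="${1:-20}"
  "$DRUSH" en -y ctools views features devel devel_generate || return 1
  "$DRUSH" generate-content "$count"
}

# Example: enable_and_generate 50
```

Run it from inside the www folder so that Drush can find the site.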

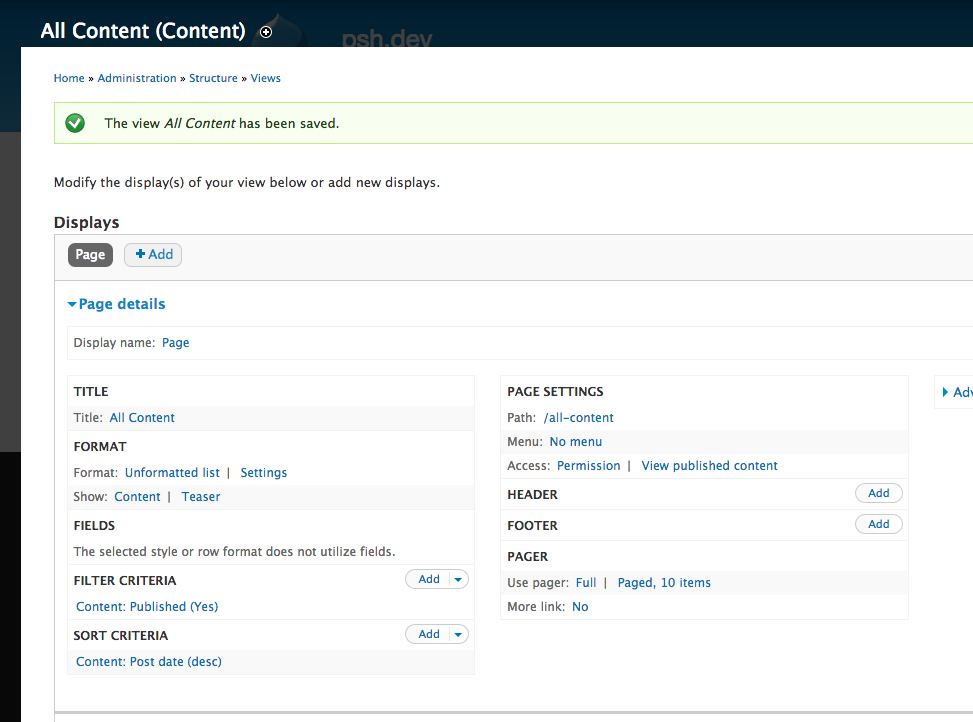

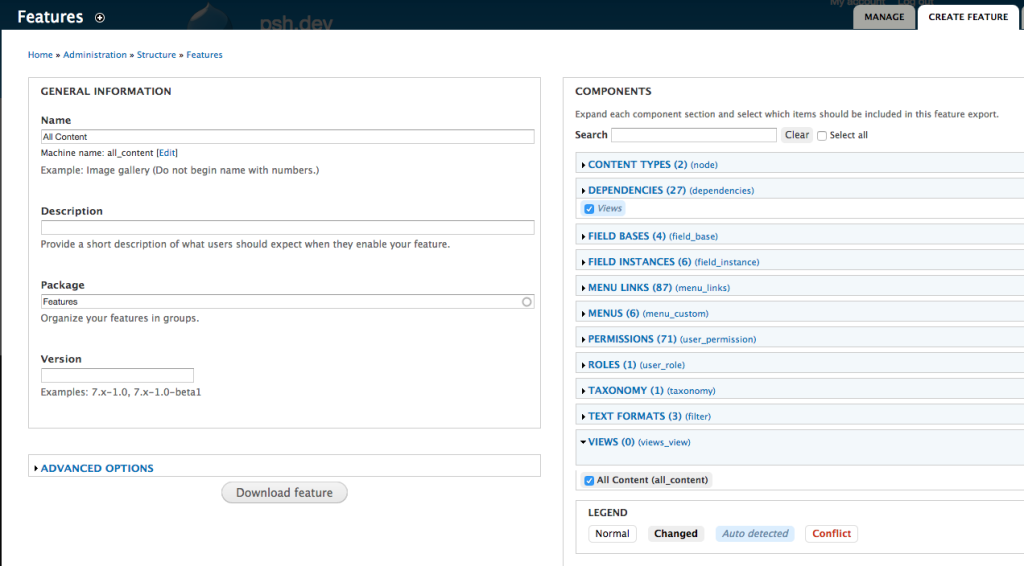

Now, create a page view that shows all content on the site:

Add it to a feature:

Uncompress the archive created and add it into the repository -> modules folder. Commit and push this folder to version control. Now any other team member running the platform build command will receive all the updates they need to get straight into work.
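As a rough sketch, unpacking and committing the feature could look like this. It assumes the export is a tar archive and that the repository folder is a Git working copy. `add_feature` and the archive name in the example are hypothetical.

```shell
# Unpack an exported feature into the repository's modules folder and commit it.
# Assumptions: the export is a tar archive and $repo is a Git working copy.
add_feature() {
  archive="$1"; repo="$2"
  mkdir -p "$repo/modules" || return 1
  tar -xf "$archive" -C "$repo/modules" || return 1
  git -C "$repo" add modules || return 1
  git -C "$repo" commit -q -m "Add exported feature"
}

# Example (placeholder archive name):
#   add_feature ~/Downloads/my_feature.tar repository
#   git -C repository push
```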

You can then follow your normal processes for getting module, feature and theme changes applied to local sites, such as update hooks or profile-based development.

What else can Platform.sh do?

This simplifies the development process amongst teams, but what else does Platform.sh offer to make it more compelling than other similar options?

If you are an agency or freelancer that works on multiple project types, the broader CMS/Framework/Language support, all hosted in the same place and with unified version control and backups, is a compelling reason.

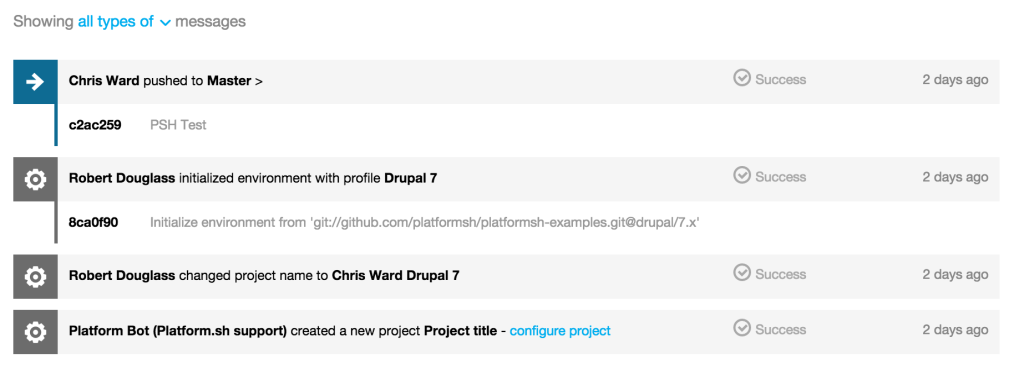

With regard to version control, Platform.sh provides a visual record of your Git commits and branches, which I always find useful for reviewing the code and status of a project. You can also create snapshots of your project, including code and database, at any point.

When you are ready to push your site live, it’s simple to allocate DNS and domains all from the project configuration pages.

Performance, Profiling and other Goodies

By default, your projects have access to integration with Redis, Solr and EntityCache / AuthCache. It’s just a case of installing the relevant Drupal modules and pointing them to the built-in server details.

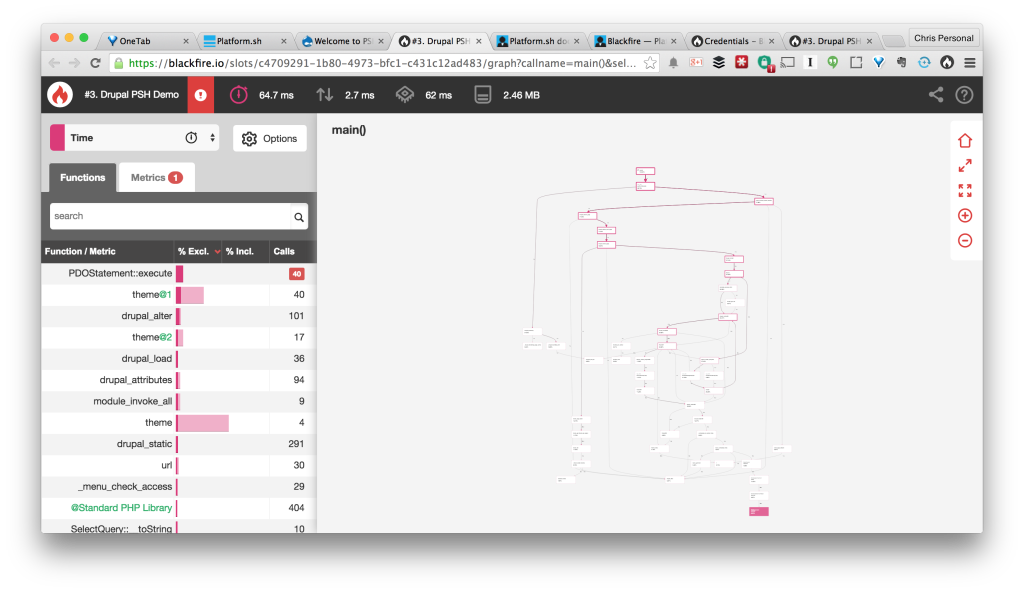

For profiling, Platform.sh has just added support for SensioLabs Blackfire. All you need to do is create an account, install the browser companion and add your credentials, and you’re good to go.

Backups are included by default as well as the ability to restore from backups.

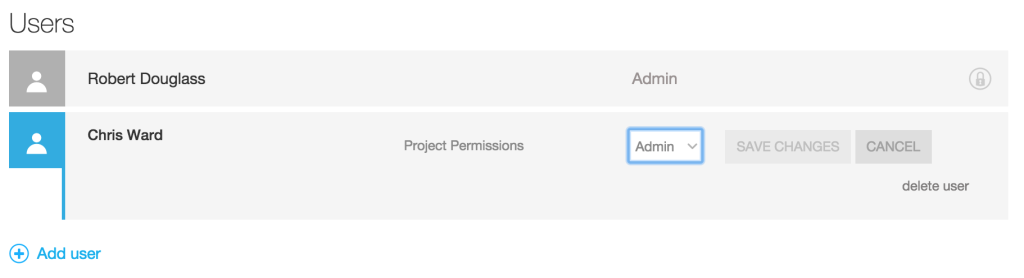

Team members can be allocated permissions at project levels and environment levels, allowing for easy transitioning of team members across projects and the roles they undertake in each one.

Platform.sh offers some compelling features over its closest competition (Pantheon and Acquia), and pricing is competitive. The main decision to be made with all of these SaaS offerings is whether the restricted server access and ‘way of doing things’ are a help or a hindrance to your team and its workflows. I would love to know your experiences or thoughts in the comments below.

Chris Ward
