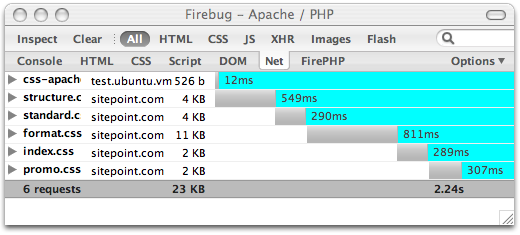

Have you ever watched your status bar while you wait for a page to load and wondered why several files seem to be downloaded before you see anything at all on your screen? Eventually the page content displays, and then the images are slotted in. The files that keep you waiting are generally the CSS and JavaScript files linked to from the “head” section of the HTML document. Because these files determine how the page will be displayed, rendering is delayed until they are completely downloaded.

HTTP Overhead

For each of these files, an HTTP request is sent to the server, and then the browser awaits a response before requesting the next file. Limits (or limitations) of the browser generally prevent parallel downloads. This means that for each file, you wait for the request to reach the server, the server to process the request, and the reply (including the file content itself) to reach you. Put end to end, a few of these can make a big difference to page load times.

Example Case

As a demonstration, I’ve created an empty HTML document which loads 5 stylesheets. Browser cache is disabled, and I’ve used Firebug, an invaluable tool for any web developer, to visualise the HTTP timing information. Oh, and I’ve omitted the DOCTYPE declaration for brevity only – no real sites were harmed in the writing of this article.

    <html>
      <head>
        <title>Apache / PHP</title>
        <link rel="stylesheet" type="text/css" href="https://www.sitepoint.com/css2/structure.css" />
        <link rel="stylesheet" type="text/css" href="https://www.sitepoint.com/css2/standard.css" />
        <link rel="stylesheet" type="text/css" href="https://www.sitepoint.com/css2/format.css" />
        <link rel="stylesheet" type="text/css" href="https://www.sitepoint.com/css2/index.css" />
        <link rel="stylesheet" type="text/css" href="https://www.sitepoint.com/css2/promo.css" />
      </head>
      <body>
      </body>
    </html>

A total load time of 2.24 seconds. For an empty page.

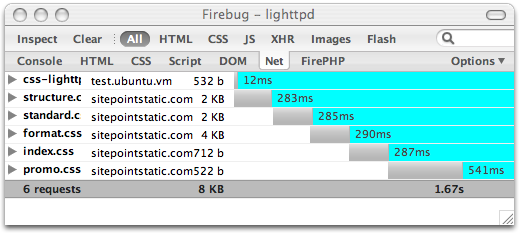

Let’s see what happens if we request the same stylesheets from lighttpd, a lighter-weight web server which is not being weighed down by PHP. It’s worth noting that the use of a separate domain for static content means any cookies set on the sitepoint.com domain will not be sent with the HTTP requests. Smaller requests are faster requests.

    <html>
      <head>
        <title>lighttpd</title>
        <link rel="stylesheet" type="text/css" href="https://i2.sitepoint.com/css2/structure.css" />
        <link rel="stylesheet" type="text/css" href="https://i2.sitepoint.com/css2/standard.css" />
        <link rel="stylesheet" type="text/css" href="https://i2.sitepoint.com/css2/format.css" />
        <link rel="stylesheet" type="text/css" href="https://i2.sitepoint.com/css2/index.css" />
        <link rel="stylesheet" type="text/css" href="https://i2.sitepoint.com/css2/promo.css" />
      </head>
      <body>
      </body>
    </html>

We’ve shaved off half a second, but I suspect the bulk of the time is spent going back and forth to the server for each file. So how do we improve the load time further?
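To make that suspicion a little more concrete, here is a rough back-of-the-envelope model in Python. It assumes files are fetched strictly one after another, and the timings plugged in at the bottom are made-up placeholders rather than measurements from the tests above. The function names are purely illustrative.

```python
def sequential_time(n_files: int, round_trip: float, server: float,
                    transfer_per_file: float) -> float:
    """Every file pays its own round trip and server processing time."""
    return n_files * (round_trip + server + transfer_per_file)


def bundled_time(n_files: int, round_trip: float, server: float,
                 transfer_per_file: float) -> float:
    """One request: the per-request costs are paid once, the bytes still all travel."""
    return round_trip + server + n_files * transfer_per_file


if __name__ == "__main__":
    # Hypothetical figures in seconds, chosen only to illustrate the shape of the cost.
    args = dict(n_files=5, round_trip=0.30, server=0.05, transfer_per_file=0.02)
    print(f"sequential: {sequential_time(**args):.2f}s")
    print(f"bundled:    {bundled_time(**args):.2f}s")
```

In this model the transfer time is identical either way; only the per-request overhead shrinks, which is the cost that bundling attacks.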

Static Content Bundling

Maintaining small, modular stylesheets can make development easier, and make it possible to deliver styles specifically to the pages that require them, reducing the total CSS served per page. But we are seeing now that there’s a price to pay in the HTTP overhead.

This is where you gain performance from server-side bundling of static content. Put simply, you make a single request to the server for all the CSS files required for a page, and the server combines them into a single file before serving it. So you still upload your small, modular stylesheets, and the server is capable of delivering any combination of them as a single file.

Using the technique described in an article at rakaz.nl (PHP script also available there), we can handle special URLs that look like this:

http://example.org/bundle/one.css,two.css,three.css
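As a minimal sketch of how the path of such a URL might be taken apart, here is a small Python function. It is not the rakaz.nl PHP script; `parse_bundle_path` is a hypothetical name, and the validation rules (no directory components, one file type per bundle) are my own assumptions about what a careful handler would want.

```python
import posixpath

ALLOWED_EXTENSIONS = {".css", ".js"}


def parse_bundle_path(url_path: str, prefix: str = "/bundle/") -> list[str]:
    """Split '/bundle/one.css,two.css' into ['one.css', 'two.css'].

    Raises ValueError for anything that does not look like a safe bundle.
    """
    if not url_path.startswith(prefix):
        raise ValueError("not a bundle URL")

    names = url_path[len(prefix):].split(",")
    for name in names:
        # Reject empty names, hidden files and anything with a directory
        # component, so a request cannot reach outside the static directory.
        if not name or name.startswith(".") or "/" in name or "\\" in name:
            raise ValueError(f"unsafe file name: {name!r}")

    # All files must share one allowed extension (CSS and JS cannot be mixed).
    extensions = {posixpath.splitext(name)[1] for name in names}
    if len(extensions) != 1 or not extensions <= ALLOWED_EXTENSIONS:
        raise ValueError("bundle must contain only .css or only .js files")

    return names
```

Called with `/bundle/one.css,two.css,three.css`, this returns the three file names in request order, which matters because later CSS rules override earlier ones.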

For SitePoint.com, I wrote a bundling implementation in Lua for lighttpd/mod_magnet so that we can still avoid the overhead of Apache/PHP. When a combination of files is bundled for the first time, the bundle is cached on the server so that it only needs to be regenerated if one of the source files is modified. If the browser supports compression, lighttpd/mod_compress handles the gzipping and caching of the compressed version.
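The caching rule described above could look something like this in Python. This is an illustrative sketch only, not the Lua/mod_magnet script used on SitePoint.com: `build_bundle`, the two directory arguments and the SHA-1 cache key are all assumptions, and compression is left to the web server as in the article.

```python
import hashlib
from pathlib import Path


def build_bundle(names: list[str], source_dir: Path, cache_dir: Path) -> Path:
    """Return the path of a cached bundle, regenerating it only when stale."""
    sources = [source_dir / name for name in names]

    # One cache file per ordered combination of source files.
    key = hashlib.sha1(",".join(names).encode("utf-8")).hexdigest()
    bundle = cache_dir / f"{key}{Path(names[0]).suffix}"

    # The bundle is stale if it is missing or older than any source file.
    newest_source = max(path.stat().st_mtime for path in sources)
    if not bundle.exists() or bundle.stat().st_mtime < newest_source:
        cache_dir.mkdir(parents=True, exist_ok=True)
        combined = "\n".join(path.read_text(encoding="utf-8") for path in sources)
        bundle.write_text(combined, encoding="utf-8")

    return bundle
```

Comparing modification times keeps the common case cheap: a few `stat` calls and no file reads. A source file edited within the same timestamp tick as the bundle was written would be missed, so a production version might prefer content hashes.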

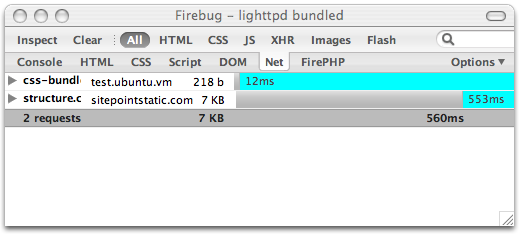

Let’s check our load time now.

    <html>
      <head>
        <title>lighttpd bundled</title>
        <link rel="stylesheet" type="text/css" href="https://i2.sitepoint.com/bundle/structure.css,standard.css,format.css,index.css,promo.css" />
      </head>
      <body>
      </body>
    </html>

The Final Result

560ms! That’s nearly 1.7 seconds quicker than the 2.24 seconds of the original example.

This bundling technique works for CSS and also for JavaScript, but obviously you cannot mix the two in a single bundle.

Frequently Asked Questions on Faster Page Loads: Bundling CSS and JavaScript

What is the purpose of bundling CSS and JavaScript files?

Bundling CSS and JavaScript files is a technique used to improve the loading speed of a webpage. When a browser loads a webpage, it has to make a separate HTTP request for each CSS and JavaScript file. This can slow down the page load time, especially if there are many files. By bundling these files, the browser only needs to make one HTTP request per bundle, which can significantly speed up the page load time. This enhances the user experience and may also help the website’s search ranking, as search engines tend to favor faster-loading websites.

How can I bundle my CSS and JavaScript files?

There are several tools available that can help you bundle your CSS and JavaScript files. One popular tool is Webpack, a static module bundler for modern JavaScript applications. When Webpack processes your application, it internally builds a dependency graph which maps every module your project needs and generates one or more bundles. Other tools include Gulp, Grunt, and Browserify. The choice of tool depends on your specific needs and familiarity with the tool.

Can I select elements that have multiple classes in CSS?

Yes, you can select elements that have multiple classes in CSS. This is done by concatenating the class selectors. For example, if you have an element with classes “class1” and “class2”, you can select it using “.class1.class2”. This will select only the elements that have both “class1” and “class2” classes.

What are the potential downsides of bundling CSS and JavaScript files?

While bundling CSS and JavaScript files can improve page load speed, it can also have potential downsides. One downside is that a single large bundle may include code a given page does not need, so the download is bigger than necessary, which can be a problem for users with slow internet connections. Another downside is that it can make debugging more difficult, as it’s harder to identify which file a piece of code comes from when all the code is in one file.

What is the difference between CSS selectors and JavaScript selectors?

CSS selectors are used to select HTML elements based on their id, class, type, attribute, and more. These selectors are then used to apply styles to the selected elements. On the other hand, JavaScript selectors are used to select HTML elements to manipulate them, for example, to change their content or style, or to add event listeners.

How can I make my bundled CSS and JS files smaller?

There are several techniques to make your bundled CSS and JS files smaller. One common technique is minification, which removes unnecessary characters like spaces, line breaks, and comments. Another technique is tree shaking, which removes unused code. These techniques can significantly reduce the size of your bundled files, further improving your page load speed.
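To give a feel for what minification does, here is a deliberately naive CSS minifier sketched in Python. It is an illustration only: `minify_css` is a hypothetical helper, and it will mangle stylesheets that contain comment-like text or runs of whitespace inside quoted strings. Real minifiers parse the CSS properly and do far more.

```python
import re


def minify_css(css: str) -> str:
    """Strip comments and redundant whitespace from simple CSS."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove /* comments */
    css = re.sub(r"\s+", " ", css)                        # collapse whitespace runs
    css = re.sub(r"\s*([{};,])\s*", r"\1", css)           # trim around { } ; ,
    return css.strip()
```

Spaces around colons are left alone on purpose, because in a selector `a :hover` and `a:hover` mean different things.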

How can I debug my bundled CSS and JavaScript files?

Debugging bundled CSS and JavaScript files can be more challenging than debugging individual files. However, most bundling tools provide a feature called source maps that can help with this. A source map is a file that maps the combined code back to the original source files. This allows you to debug the original files as if they were not bundled.

Can I bundle multiple JS and CSS files into a single bundle?

You can bundle multiple JS files into one JavaScript bundle and multiple CSS files into one CSS bundle, but with the concatenation approach described in this article you cannot mix the two types in a single file. Bundling is done to reduce the number of HTTP requests a browser needs to make when loading a webpage. However, keep in mind that a large bundle can be a problem for users with slow internet connections.

What are the different types of CSS selectors?

There are several types of CSS selectors, including element selectors, id selectors, class selectors, attribute selectors, pseudo-class selectors, and pseudo-element selectors. Each type of selector has its own syntax and is used to select different types of elements.

How does bundling CSS and JavaScript files affect SEO?

Bundling CSS and JavaScript files can have a positive impact on SEO. Search engines like Google favor websites that load quickly, and bundling can significantly improve page load speed. However, it’s important to ensure that the bundled files are not too large, as this can slow down page load speed and negatively affect SEO.

Paul is a Rails and PHP developer in the SitePoint group of companies.