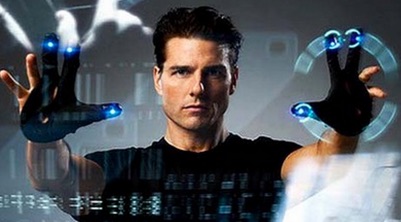

Minority Report (2002) was an unusual movie. Though it was only moderately successful at the time – by Spielberg’s standards anyway – it seemed to have a big influence on techies and UI people.

If you haven’t seen the film, the central character is shown using his ‘electro-gloved’ hands to physically manipulate the user interface on the screens in front of him. Try to imagine Tom Cruise ‘voguing’ in The Matrix and you probably get a sense of it.

Projects quickly sprang up hacking together Kinects and other hardware in an attempt to reproduce this futuristic gestural UI experience.

Funnily enough, if they’d spoken to Cruise they might not have been so keen. Apparently these full-body UI gestures were so physically demanding that poor old Mr. Cruise needed to take frequent rest breaks during filming. Probably not something you’d want to do all day for your job.

The truth is, usually we want to be able to communicate our intentions with the least possible effort. Direct brain-to-computer interfaces are beginning to become a reality. Siri and Google Voice have become so good we barely think of them.

But another almost effortless UI method has been mostly ignored – till now.

The Birth of the Eye-tracking UI

Eye-tracking is a technology you’re likely familiar with, though we tend to think of it as a testing method – and it IS. But it’s now getting serious attention as a user input method.

Rather than simply recording the path of a user’s eyes and later relating that to their behavior, software can now react to a user’s eye movements in real time and update the interface accordingly. That opens up some really cool possibilities.

I thought we’d touch on two hardware projects taking this idea on.

Tobii EyeX Controller

This rather elegant-looking device is the EyeX Controller from Swedish company Tobii. The unit plugs into your PC (no OSX support at the moment) and tracks your eyes via an infrared camera in the middle of the device.

Tobii offers an SDK for developers and has already integrated EyeX Controller support into a selection of Steam games – including Assassin’s Creed Rogue.

In the game, the camera automatically re-centers on your ‘gaze point’, leaving your hands free to control movement and actions. It sounds amazing.
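To make the idea concrete, here is a minimal sketch of how a game might turn a gaze point into camera movement. This is not Tobii's SDK or the game's actual logic: the function name `camera_turn_rate`, the dead zone and the maximum turn rate are all assumptions made up for illustration.

```python
def camera_turn_rate(gaze_x: float, screen_width: float,
                     dead_zone: float = 0.1, max_rate: float = 90.0) -> float:
    """Return a hypothetical camera yaw rate (degrees per second).

    The further the gaze point sits from the screen center, the faster
    the camera turns toward it. Inside the dead zone the camera holds
    still, so small eye movements do not make the view drift.
    """
    half = screen_width / 2
    # Normalise the horizontal offset to the range -1.0 .. 1.0.
    offset = (gaze_x - half) / half
    offset = max(-1.0, min(1.0, offset))
    if abs(offset) <= dead_zone:
        return 0.0
    return offset * max_rate


# Looking at the right-hand edge of a 1920px-wide screen turns right at full speed.
print(camera_turn_rate(1920, 1920))
# Looking near the center leaves the camera alone.
print(camera_turn_rate(970, 1920))
```

The dead zone is the design choice that matters here: without it, the view would never settle, because our eyes are never perfectly still.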

Tobii already offers a line of eye-tracking Assistive Technology (AT) devices called Tobii Dynavox that are designed to help users with motor skill and communication difficulties.

You would think that the addition of a successful consumer-level product would only help their AT products.

The EyeX retails for what seems like a very reasonable 119 € (about $US127).

Fove

Most of us probably think of Oculus Rift when we think of VR, but Fove takes VR to another level by embedding eye-tracking technology into their headset.

The concept started as a Kickstarter project back in May and almost doubled its $250,000 funding goal – so they certainly have some interest.

Predictably they talk about game controllers and assistive technology, but they have some really interesting ideas about game characters reacting to your eye contact – a smile, a frown.

That’s an entirely new aspect of gameplay.

Fove can also change the depth of field based on your focal point, so when you look at your hands in the game, the horizon blurs – and vice versa.
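As a rough illustration of that depth-of-field effect, the toy function below blurs objects more the further they sit from the depth you are looking at. It is a deliberately simplified model and has nothing to do with Fove's real SDK: `blur_radius`, its units and its default values are assumptions for the sake of the example.

```python
def blur_radius(object_depth: float, focus_depth: float,
                strength: float = 2.0, max_radius: float = 8.0) -> float:
    """Return a hypothetical blur radius (in pixels) for one object.

    Depths are distances from the viewer in metres. An object at the
    depth the eyes are focused on stays sharp. The blur grows linearly
    with the distance from that depth, capped at max_radius.
    """
    return min(max_radius, strength * abs(object_depth - focus_depth))


# Eyes focused on your hands, about half a metre away.
focus = 0.5
print(blur_radius(0.5, focus))    # the hands themselves
print(blur_radius(100.0, focus))  # the horizon
```

Swap the focus depth to the horizon and the same function blurs the hands instead.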

The Fove SDK is available now and the system already supports Windows, OSX, Linux and a selection of gaming engines. And from a pure industrial design point of view, the Fove looks very polished. While I suspect people will always look fairly dorky when wearing VR goggles, this might be as close as we ever come to looking stylish.

Of course, these are just two of the many eye-tracking systems under development. The car industry is very invested in the idea as it offers an attractive safety feature.

For all the hype about ‘VR being the future’, I actually think eye-tracking may be the big trend we didn’t see coming. I wouldn’t be surprised if most new high-end phones and laptops shipped with eye-tracking by 2018.

Although interacting with a computer directly with your eyes seems totally magical to us (it is), to your computer it’s just another stream of screen coordinates, indistinguishable from a mouse or trackpad. That means this tech could work fine with your current software but also enable a whole new set of software ideas.
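Here is a small sketch of what "just another stream of screen coordinates" could look like in practice. It assumes the tracker hands us raw (x, y) samples, which tend to be jittery, and smooths them into a cursor position that ordinary software could treat like a mouse. The function name `smooth_gaze` and the `alpha` value are hypothetical. No real eye-tracking SDK is used here.

```python
from typing import Iterable, Iterator, Optional, Tuple

Point = Tuple[float, float]


def smooth_gaze(samples: Iterable[Point], alpha: float = 0.5) -> Iterator[Point]:
    """Yield a smoothed cursor position for each raw gaze sample.

    Uses simple exponential smoothing: each new cursor position moves
    a fraction `alpha` of the way from the previous position toward
    the latest sample. Lower alpha means a steadier, slower cursor.
    """
    cursor: Optional[Point] = None
    for x, y in samples:
        if cursor is None:
            # The first sample has nothing to be smoothed against.
            cursor = (float(x), float(y))
        else:
            cx, cy = cursor
            cursor = (cx + alpha * (x - cx), cy + alpha * (y - cy))
        yield cursor


# Made-up gaze samples standing in for a real tracker feed.
raw = [(100, 100), (104, 98), (300, 250), (302, 251)]
for position in smooth_gaze(raw):
    print(position)
```

Anything that already accepts mouse coordinates could consume these positions unchanged, which is exactly why existing software would not need to know an eye-tracker is involved.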

As cool as VR is, it requires every user to learn new hardware, new software and a whole new paradigm. That will take time.

I can’t see my dad wearing a VR headset any time soon, but a cursor that just follows his eyes? Maybe not such a big jump.

Here’s hoping we’re working towards a UI future that won’t leave poor old Tom Cruise doubled over.

Originally published in the SitePoint Design Newsletter.

Alex Walker

Alex has been doing cruel and unusual things to CSS since 2001. He is the lead front-end designer and developer for SitePoint and a one-time Design and UX editor for SitePoint, with more than 150 newsletters written. Co-author of The Principles of Beautiful Web Design. Now Alex is involved in the planning, development, production, and marketing of a huge range of printed and online products and references. He has designed more than 60 of SitePoint's book covers.