Does Google care for SEO? Yes, it does: from Google’s SEO Starter Guide (pdf) to help provided in the Google Webmaster Help Forum, the search engine is pretty transparent when it comes to how it prefers you to optimize your site for inclusion. We’ll be discussing URL structure, TrustRank and duplicate content issues.

To start with a conclusion: if you do what Google likes, chances are your site will not only be included, but also rank better. And now let’s go “in depth” and see, once and for all, how Google prefers you to optimize your site for its search engine.

All questions in this article were asked by users on Google Moderator Beta, Ask a Google Engineer.

Does Google Really Care About SEO?

The answer is yes, and it comes from Google’s search evangelist Adam Lasnik:

Just like in any industry, there are outstanding SEOs and bad apples. We Googlers are delighted when people make their sites more accessible to users and Googlebot, whether they do the work themselves or hire ethical and effective professionals to help them out.

Note that “Googlers are delighted” when sites are optimized for search. The moral: know your SEO!

What Is the URL Structure Preferred by Google?

Google’s Matt Cutts replied:

I would recommend

long-haired-dogs.html

long_haired_dogs.html

longhaireddogs.html

in that order. If your site is already live on the web, it’s probably not worth going back to change from one method to another, but if you’re just starting a new site, I’d probably choose the URLs in that order of preference. I can only speak for Google; you’ll need to run your own tests to see what works best with Microsoft, Yahoo, and Ask.
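If you are starting a new site, the hyphenated form can be generated automatically from page titles. The snippet below is only a minimal sketch: the function name `slugify` and the `.html` extension are assumptions made for illustration, not something Google prescribes.

```python
import re


def slugify(title: str, extension: str = ".html") -> str:
    """Turn a page title into a hyphen-separated file name (hypothetical helper)."""
    # Lowercase, then collapse every run of non-alphanumeric characters
    # (spaces, underscores, punctuation) into a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    # Drop hyphens left over at either end, e.g. from trailing punctuation.
    return slug.strip("-") + extension


print(slugify("Long Haired Dogs"))
```

Note that this only helps for new pages; as the quote says, renaming URLs on a site that is already live is probably not worth it.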

Is Google Using a “TrustRank” Algorithm?

Many SEOs suggest that if a bad neighbor site links to yours, your site’s “trustrank” with Google will be lowered.

False. If this were true, any competitor could harm a site, just for spite. Google doesn’t use “TrustRank” to refer to anything, although the company did attempt to trademark “TrustRank” as a term for an anti-phishing filter. Google abandoned this trademark in 2008. The only company that might use “TrustRank” is Yahoo!

Matt Cutts offers an explanation about how a competitor can hurt another competitor:

We try very hard to make it hard for one competitor to hurt another competitor. (We don’t claim that it’s impossible, because for example someone could steal your domain, either by identity theft or by hacking into a domain, and then do bad things on the domain.) But we try hard to keep one competitor from hurting another competitor in our ranking.

Will Google Learn How to Identify Paid Links Without Making Webmasters Use “nofollow”?

The answer is yes. One of Google’s techniques is to ask users to “report paid links,” but it also depends on its algorithms. According to Matt Cutts:

We definitely have worked to improve our paid-link and junk link detection algorithms. In our most recent PageRank update (9/27/2008) for example, there are some differences in PageRank because we’ve improved how we treat links, for example.

The “nofollow” attribute on links is a granular way that site owners can provide more information to search engines about their links, but search engines absolutely continue to innovate on how we weigh links as well.

My Site’s Been Penalized Because Of Duplicate Content!

Right, this is a tough one, and no Google engineer answered it on Google Moderator Beta. Adam Lasnik did, however, provide a link to an answer by Google’s Susan Moskwa, webmaster trends analyst: Demystifying the “duplicate content penalty”

The answer to “does Google penalize duplicate content” is a “yes and no.” To better understand how this works, we need first to “define” duplicate content in Google terms.

Usually, duplicate content refers to blocks of content that either completely match other content or are appreciably similar. There are two types of such occurrences: within-your-domain-duplicate-content and cross-domain-duplicate-content.

Within-your-domain-duplicate-content

Google filters duplicate content in different ways. If your site has a regular and a print version of each page, and neither is blocked in robots.txt or with a “noindex” meta tag, Google will simply choose one to list and eliminate the other.

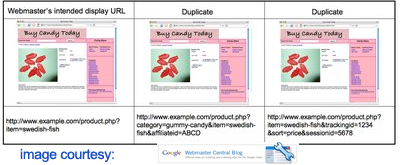

Ecommerce sites, which are usually managed with a CMS, may sometimes show the same item (and, worse yet, link to it) via multiple distinct URLs. Google will group the duplicate URLs in clusters and display the one it considers best in the search results.

The real negative effects of having duplicate content on multiple URLs are:

- Diluting link popularity (instead of getting links to the intended display URL, the links may be divided among distinct URLs)

- Search results may display “user-unfriendly URLs” (long URLs with tracking IDs, etc), which is bad for “site branding”
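To get a feel for how many of your own URLs point at the same item, you can group them yourself before Google does. The following is a rough sketch only: the `TRACKING_PARAMS` list and the function names are assumptions for illustration, and it does not claim to reproduce how Google actually clusters URLs.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of query parameters that do not change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def canonical_url(url: str) -> str:
    """Strip tracking parameters and sort the rest so equivalent URLs compare equal."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key.lower() not in TRACKING_PARAMS
    )
    # Rebuild the URL without the fragment and with a lowercase host name.
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def cluster(urls):
    """Group URLs that reduce to the same canonical form."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_url(url)].append(url)
    return dict(groups)
```

Any cluster with more than one entry is a candidate for diluted link popularity: links that could all point at one display URL are being split among several.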

The “duplicate content penalty” applies when content is deliberately duplicated to manipulate SERPs or win more traffic; in such cases Google will make adjustments in the indexing and ranking of the sites involved.

Cross-domain-duplicate-content

This is the nightmare of all serious Web publishers: content scraping. Someone could be scraping content to use on “made for AdSense” sites for example. Or some web proxies could index part of the site they access through the proxy. Google says this is not a reason to worry, although many publishers experienced “penalties” when the scraper ranked higher than the original.

Google states that it is able to determine the original. Apparently, if the scraper ranks higher, the reasons might lie in the way the “victim” site is “prepared” for Google. Here are the possible “remedies” suggested by Sven Naumann, from Google’s search quality team:

- Check if your content is still accessible to our crawlers. You might unintentionally have blocked access to parts of your content in your robots.txt file.

- You can look in your Sitemap file to see if you made changes for the particular content which has been scraped.

- Check if your site is in line with our webmaster guidelines.
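The first check in the list above can be approximated locally with Python’s standard `urllib.robotparser` module. This is a sketch under assumptions: the helper name `is_crawlable` and the sample rules are made up for illustration, and Python’s parser does not necessarily match Googlebot’s own robots.txt handling in every detail.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks only the print versions of pages.
rules = """\
User-agent: *
Disallow: /print/
"""

print(is_crawlable(rules, "http://example.com/articles/page.html"))
print(is_crawlable(rules, "http://example.com/print/page.html"))
```

If a page you expect to rank comes back as not crawlable, an overly broad `Disallow` rule is a likely culprit.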

The most interesting statement comes from the same Google search expert. How would you “translate” the following:

To conclude, I’d like to point out that in the majority of cases, having duplicate content does not have negative effects on your site’s presence in the Google index. It simply gets filtered out.

Let me offer you my version: if your site gets scraped by a site with higher popularity, your original content will simply get filtered out. If this is not “duplicate content penalty”, then what should we call it? Perhaps eradication? (!)

Luckily Google does offer a solution: if your content is scraped and the scraper ranks higher, you can file a DMCA request to claim ownership of the content. Good luck with that!

Last but not least: when you syndicate content on other sites, if you want the original article to rank you have to make sure that these sites include a link back to it. We’ve seen cases when, despite the link, the original was given less weight and… uhm… eradicated?

For affiliate sites that need to display content duplicated from or similar to the “mother” site, the solution proposed by Google is also simple: copying content for a site will cause Google to “omit” your site from the results, but if you add extra value to your pages (more content) you have a good chance of ranking well in the SERPs. So how much is more? One paragraph, two? Go figure!

My second conclusion: what can you take from this article? Maybe even Google doesn’t know what it wants – the algorithms are in total control – and it doesn’t want humans to know what it’s doing. (!) Seriously, Google likes optimized sites, and your best bet is to never duplicate content. Period.