Introduction to Cloud Computing and AWS

PART I

The Core AWS Services

The cloud is where much of the serious technology innovation and growth happens these days, and Amazon Web Services (AWS), more than any other, is the platform of choice for business and institutional workloads. If you want to be successful as an AWS solutions architect, you’ll first need to make sure you understand what the cloud really is and how Amazon’s end of it works.

To make sure you’ve got the big picture, this chapter will explore the basics:

- What makes cloud computing different from other applications and client-server models

- How the AWS platform provides secure and flexible virtual networked environments for your resources

- How AWS provides such a high level of service reliability

- How to access and manage your AWS-based resources

- Where you can go for documentation and help with your AWS deployments

Cloud Computing and Virtualization

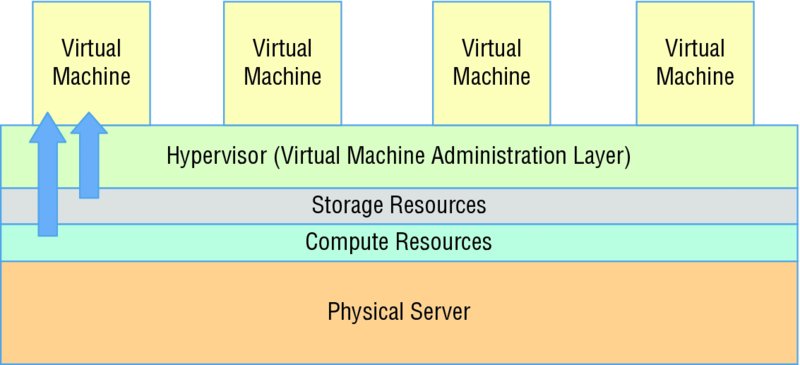

The technology that lies at the core of all cloud operations is virtualization. As illustrated in Figure 1.1, virtualization lets you divide the hardware resources of a single physical server into smaller units. That physical server could therefore host multiple virtual machines running their own complete operating systems, each with its own memory, storage, and network access.

Figure 1.1 A virtual machine host

Virtualization’s flexibility makes it possible to provision a virtual server in a matter of seconds, run it for exactly the time your project requires, and then shut it down. The resources released will become instantly available to other workloads. The usage density you can achieve lets you squeeze the greatest value from your hardware and makes it easy to generate experimental and sandboxed environments.

Cloud Computing Architecture

Major cloud providers like AWS have enormous server farms where hundreds of thousands of servers and data drives are maintained along with the network cabling necessary to connect them. A well-built virtualized environment could provide a virtual server using storage, memory, compute cycles, and network bandwidth collected from the most efficient mix of available sources it can find.

A cloud computing platform offers on-demand, self-service access to pooled compute resources where your usage is metered and billed according to the volume you consume. Cloud computing systems allow for precise billing models, sometimes involving fractions of a penny for an hour of consumption.
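As a rough illustration of metered billing, the following sketch charges only for the hours actually consumed. The hourly rate is a made-up figure chosen for the example, not a real AWS price, and the function name is hypothetical.

```python
from decimal import Decimal

# Hypothetical on-demand rate in US dollars per instance-hour (not a real AWS price).
HOURLY_RATE = Decimal("0.0116")


def metered_cost(hours_used: Decimal, instances: int = 1,
                 rate: Decimal = HOURLY_RATE) -> Decimal:
    """Return the charge for the hours actually consumed, rounded to the cent."""
    return (hours_used * instances * rate).quantize(Decimal("0.01"))


# Three instances that each ran for 36.5 hours this month.
print(metered_cost(Decimal("36.5"), instances=3))
```

Using Decimal rather than float keeps fractions of a cent exact until the final rounding step.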

Cloud Computing Optimization

The cloud is a great choice for so many serious workloads because it’s scalable, elastic, and, often, a lot cheaper than traditional alternatives. Effective deployment provisioning will require some insight into those three features.

Scalability

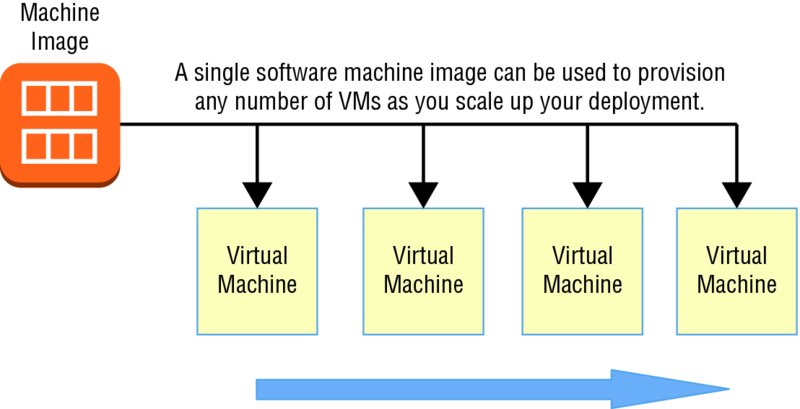

A scalable infrastructure can efficiently meet unexpected increases in demand for your application by automatically adding resources. As Figure 1.2 shows, this most often means dynamically increasing the number of virtual machines (or instances as AWS calls them) you’ve got running.

Figure 1.2 Copies of a machine image are added to new VMs as they’re launched.

Through its Auto Scaling service, AWS lets you define a machine image that can be automatically replicated and launched as multiple instances to meet demand.

Elasticity

The principle of elasticity covers some of the same ground as scalability—both address managing changing demand. However, while the images used in a scalable environment let you ramp up capacity to meet rising demand, an elastic infrastructure will automatically reduce capacity when demand drops. This makes it possible to control costs, since you’ll run resources only when they’re needed.
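The difference between scaling up and scaling back down can be sketched with a toy capacity calculation. This is only an illustration of the idea, not how AWS Auto Scaling policies are actually configured; the function name, the per-instance capacity, and the minimum and maximum limits are all assumptions made for the example.

```python
import math


def desired_instances(requests_per_second: float, capacity_per_instance: float,
                      minimum: int = 1, maximum: int = 10) -> int:
    """Return how many instances should run for the current load.

    The count rises when demand grows (scalability) and falls again when
    demand drops (elasticity), always staying within [minimum, maximum].
    """
    needed = math.ceil(requests_per_second / capacity_per_instance)
    return max(minimum, min(maximum, needed))


# Demand climbs, spikes past the configured maximum, and then falls away.
for load in (50, 450, 2000, 120):
    print(load, desired_instances(load, capacity_per_instance=100))
```

The upper limit caps spending during a spike, while the lower limit keeps at least one instance available when traffic is quiet.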

Cost Management

Besides the ability to control expenses by closely managing the resources you use, cloud computing transitions your IT spending from a capital expenditure (capex) framework into something closer to operational expenditure (opex).

In practical terms, this means you no longer have to spend $10,000 up front for every new server you deploy—along with associated electricity, cooling, security, and rack space costs. Instead, you’re billed much smaller incremental amounts for as long as your application runs.

That doesn’t necessarily mean your long-term cloud-based opex costs will always be less than you’d pay over the lifetime of a comparable data center deployment. But it does mean you won’t have to expose yourself to risky speculation about your long-term needs. If, sometime in the future, changing demand calls for new hardware, AWS will be able to deliver it within a minute or two.
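To make the capex and opex trade-off concrete, here is a minimal sketch that estimates how many months of metered usage it would take to match an up-front server purchase. The $10,000 figure comes from the text above; the hourly rate and monthly hours are assumptions, and the calculation deliberately ignores electricity, cooling, security, and rack space.

```python
def months_to_match_capex(upfront_cost: float, hourly_rate: float,
                          hours_per_month: float = 730) -> float:
    """Months of metered usage needed to equal a one-time hardware purchase."""
    return upfront_cost / (hourly_rate * hours_per_month)


# Assumed rate of $0.10 per hour (illustrative only, not a real AWS price).
always_on = months_to_match_capex(10_000, hourly_rate=0.10)
part_time = months_to_match_capex(10_000, hourly_rate=0.10, hours_per_month=200)
print(round(always_on, 1), round(part_time, 1))
```

An instance that runs only part of each month takes far longer to reach the purchase price, which is where elasticity pays off.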

To help you understand the full implications of cloud compute spending, AWS provides a free Total Cost of Ownership (TCO) Calculator at https://aws.amazon.com/tco-calculator/. This calculator helps you perform proper “apples-to-apples” comparisons between your current data center costs and what an identical operation would cost you on AWS.

The AWS Cloud

Keeping up with the steady stream of innovative new services showing up on the AWS Console can be frustrating. But as a solutions architect, your main focus should be on the core service categories. This section briefly summarizes each of the core categories (as shown in Table 1.1) and then does the same for key individual services. You’ll learn much more about all of these (and more) services through the rest of the book, but it’s worth focusing on these short definitions, as they lie at the foundation of everything else you’re going to learn.

Table 1.1 AWS service categories

| Category | Function |
| --- | --- |
| Compute | Services replicating the traditional role of local physical servers for the cloud, offering advanced configurations including autoscaling, load balancing, and even serverless architectures (a method for delivering server functionality with a very small footprint) |
| Networking | Application connectivity, access control, and enhanced remote connections |
| Storage | Various kinds of storage platforms designed to fit a range of both immediate accessibility and long-term backup needs |
| Database | Managed data solutions for use cases requiring multiple data formats: relational, NoSQL, or caching |
| Application management | Monitoring, auditing, and configuring AWS account services and running resources |
| Security and identity | Services for managing authentication and authorization, data and connection encryption, and integration with third-party authentication management systems |
| Application integration | Tools for designing loosely coupled, integrated, and API-friendly application development processes |

Table 1.2 describes the functions of some core AWS services, organized by category.

Table 1.2 Core AWS services (by category)

| Category | Service | Function |
| --- | --- | --- |
| Compute | Elastic Compute Cloud (EC2) | EC2 server instances provide virtual versions of the servers you would run in your local data center. EC2 instances can be provisioned with the CPU, memory, storage, and network interface profile to meet any application need, from a simple web server to one part of a cluster of instances providing an integrated multitiered fleet architecture. Since EC2 instances are virtual, they’re much more resource-efficient and deploy nearly instantly. |
| | Lambda | Serverless application architectures like the one provided by Amazon’s Lambda service allow you to provide responsive public-facing services without the need for a server that’s actually running 24/7. Instead, network events (like consumer requests) can trigger the execution of a predefined code-based operation. When the operation (which can currently run for as long as 15 minutes) is complete, the Lambda event ends, and all resources automatically shut down. |
| | Auto Scaling | Copies of running EC2 instances can be defined as image templates and automatically launched (or scaled up) when client demand can’t be met by existing instances. As demand drops, unused instances can be terminated (or scaled down). |
| | Elastic Load Balancing | Incoming network traffic can be directed between multiple web servers to ensure that a single web server isn’t overwhelmed while other servers are underused or that traffic isn’t directed to failed servers. |
| | Elastic Beanstalk | Beanstalk is a managed service that abstracts the provisioning of AWS compute and networking infrastructure. You are required to do nothing more than push your application code, and Beanstalk automatically launches and manages all the necessary services in the background. |
| Networking | Virtual Private Cloud (VPC) | VPCs are highly configurable networking environments designed to host your EC2 (and RDS) instances. You use VPC-based tools to closely control inbound and outbound network access to and between instances. |
| | Direct Connect | By purchasing fast and secure network connections to AWS through a third-party provider, you can use Direct Connect to establish an enhanced direct tunnel between your local data center or office and your AWS-based VPCs. |
| | Route 53 | Route 53 is the AWS DNS service that lets you manage domain registration, record administration, routing protocols, and health checks, which are all fully integrated with the rest of your AWS resources. |
| | CloudFront | CloudFront is Amazon’s distributed global content delivery network (CDN). When properly configured, a CloudFront distribution can store cached versions of your site’s content at edge locations around the world so they can be delivered to customers on request with the greatest efficiency and lowest latency. |
| Storage | Simple Storage Service (S3) | S3 offers highly versatile, reliable, and inexpensive object storage that’s great for data storage and backups. It’s also commonly used as part of larger AWS production processes, including through the storage of script, template, and log files. |
| | Glacier | A good choice for when you need large data archives stored cheaply over the long term and can live with retrieval delays measuring in the hours. Glacier’s lifecycle management is closely integrated with S3. |
| | Elastic Block Store (EBS) | EBS provides the virtual data drives that host the operating systems and working data of an EC2 instance. They’re meant to mimic the function of the storage drives and partitions attached to physical servers. |
| | Storage Gateway | Storage Gateway is a hybrid storage system that exposes AWS cloud storage as a local, on-premises appliance. Storage Gateway can be a great tool for migration and data backup and as part of disaster recovery operations. |
| Database | Relational Database Service (RDS) | RDS is a managed service that builds you a stable, secure, and reliable database instance. You can run a variety of SQL database engines on RDS, including MySQL, Microsoft SQL Server, Oracle, and Amazon’s own Aurora. |
| | DynamoDB | DynamoDB can be used for fast, flexible, highly scalable, and managed nonrelational (NoSQL) database workloads. |
| Application management | CloudWatch | No deployment is complete without some kind of ongoing monitoring in place. And generating endless log files doesn’t make much sense if there’s no one keeping an eye on them. CloudWatch can be set to monitor process performance and utilization through events and, when preset thresholds are met, either send you a message or trigger an automated response. |
| | CloudFormation | This service enables you to use template files to define full and complex AWS deployments. The ability to script your use of any AWS resources makes it easier and more attractive to automate, standardizing and speeding up the application launch process. |
| | CloudTrail | CloudTrail collects records of all your account’s API events. This history is useful for account auditing and troubleshooting purposes. |
| | Config | The Config service is designed to help you with change management and compliance for your AWS account. You first define a desired configuration state, and Config will get to work evaluating any future states against that ideal. When a configuration change pushes too far from the ideal baseline, you’ll be notified. |
| Security and identity | Identity and Access Management (IAM) | You use IAM to administrate user and programmatic access and authentication to your AWS account. Through the use of users, groups, roles, and policies, you can control exactly who and what can access and/or work with any of your AWS resources. |
| | Key Management Service (KMS) | KMS is a managed service that allows you to administrate the creation and use of encryption keys to secure data used by and for any of your AWS resources. |
| | Directory Service | For AWS environments that need to manage identities and relationships, Directory Service can integrate AWS resources with identity providers like Amazon Cognito and Microsoft AD domains. |
| Application integration | Simple Notification Service (SNS) | SNS is a notification tool that can automate the publishing of alert topics to other services (to an SQS Queue or to trigger a Lambda function, for instance), to mobile devices, or to recipients using email or SMS. |
| | Simple WorkFlow (SWF) | SWF lets you coordinate a series of tasks that must be performed using a range of AWS services or even nondigital (meaning, human) events. SWF can be the “glue” and “lubrication” that both speed a complex process and keep all the moving parts from falling apart. |
| | Simple Queue Service (SQS) | SQS allows for event-driven messaging within distributed systems that can decouple while coordinating the discrete steps of a larger process. The data contained in your SQS messages will be reliably delivered, adding to the fault-tolerant qualities of an application. |
| | API Gateway | This service enables you to create and manage secure and reliable APIs for your AWS-based applications. |

AWS Platform Architecture

AWS maintains data centers for its physical servers around the world. Because the centers are so widely distributed, you can reduce your own services’ network transfer latency by hosting your workloads geographically close to your users. It can also help you manage compliance with regulations requiring you to keep data within a particular legal jurisdiction.

Data centers exist within AWS regions, of which there are currently 17—not including private U.S. government AWS GovCloud regions—although this number is constantly growing. It’s important to always be conscious of the particular region you have selected when you launch new AWS resources, as pricing and service availability can vary from one to the next. Table 1.3 shows a list of all 17 (nongovernment) regions along with each region’s name and core endpoint addresses.

Table 1.3 A list of publicly accessible AWS regions

| Region Name | Region | Endpoint |
| --- | --- | --- |
| US East (Ohio) | us-east-2 | us-east-2.amazonaws.com |
| US East (N. Virginia) | us-east-1 | us-east-1.amazonaws.com |
| US West (N. California) | us-west-1 | us-west-1.amazonaws.com |
| US West (Oregon) | us-west-2 | us-west-2.amazonaws.com |
| Asia Pacific (Mumbai) | ap-south-1 | ap-south-1.amazonaws.com |
| Asia Pacific (Seoul) | ap-northeast-2 | ap-northeast-2.amazonaws.com |
| Asia Pacific (Osaka-Local) | ap-northeast-3 | ap-northeast-3.amazonaws.com |
| Asia Pacific (Singapore) | ap-southeast-1 | ap-southeast-1.amazonaws.com |
| Asia Pacific (Sydney) | ap-southeast-2 | ap-southeast-2.amazonaws.com |
| Asia Pacific (Tokyo) | ap-northeast-1 | ap-northeast-1.amazonaws.com |
| Canada (Central) | ca-central-1 | ca-central-1.amazonaws.com |
| China (Beijing) | cn-north-1 | cn-north-1.amazonaws.com.cn |
| EU (Frankfurt) | eu-central-1 | eu-central-1.amazonaws.com |
| EU (Ireland) | eu-west-1 | eu-west-1.amazonaws.com |
| EU (London) | eu-west-2 | eu-west-2.amazonaws.com |
| EU (Paris) | eu-west-3 | eu-west-3.amazonaws.com |
| South America (São Paulo) | sa-east-1 | sa-east-1.amazonaws.com |

Tip

Endpoint addresses are used to access your AWS resources remotely from within application code or scripts. Prefixes like ec2, apigateway, or cloudformation are often added to the endpoints to specify a particular AWS service. Such an address might look like this: cloudformation.us-east-2.amazonaws.com. You can see a complete list of endpoint addresses and their prefixes at https://docs.aws.amazon.com/general/latest/gr/rande.html#ec2_region.
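The prefix-plus-region pattern described in the tip can be sketched as a small helper. The function name is hypothetical, and the pattern is a simplification: some services use different or global endpoints, so treat the official endpoint list as the authority.

```python
def service_endpoint(service: str, region: str) -> str:
    """Build a regional endpoint such as cloudformation.us-east-2.amazonaws.com."""
    # The China (Beijing) region uses the .amazonaws.com.cn suffix, as Table 1.3 shows.
    suffix = "amazonaws.com.cn" if region.startswith("cn-") else "amazonaws.com"
    return f"{service}.{region}.{suffix}"


print(service_endpoint("cloudformation", "us-east-2"))
print(service_endpoint("ec2", "eu-west-1"))
```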

Because low-latency access is so important, certain AWS services are offered from designated edge network locations. These services include Amazon CloudFront, Amazon Route 53, AWS Firewall Manager, AWS Shield, and AWS WAF. For a complete and up-to-date list of available locations, see https://aws.amazon.com/about-aws/global-infrastructure/regional-product-services/.

Physical AWS data centers are exposed within your AWS account as availability zones. There might be half a dozen availability zones within a region, identified using names like us-east-1a.

You organize your resources from a particular region within one or more virtual private clouds (VPCs). A VPC is effectively a network address space within which you can create network subnets and associate them with particular availability zones. When configured properly, this architecture can provide effective resource isolation and durable replication.
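One way to picture a VPC as an address space carved into subnets is with Python's standard ipaddress module. The CIDR block, the subnet size, and the helper name below are assumptions chosen for illustration; nothing here calls AWS.

```python
import ipaddress


def plan_subnets(vpc_cidr: str, zones: list[str], new_prefix: int = 24) -> dict[str, str]:
    """Assign one subnet of the VPC address space to each availability zone."""
    vpc = ipaddress.ip_network(vpc_cidr)
    subnets = vpc.subnets(new_prefix=new_prefix)  # generator of equal-sized blocks
    return {zone: str(next(subnets)) for zone in zones}


# A hypothetical 10.0.0.0/16 VPC spread across three availability zones.
plan = plan_subnets("10.0.0.0/16", ["us-east-1a", "us-east-1b", "us-east-1c"])
for zone, cidr in plan.items():
    print(zone, cidr)
```

Placing copies of a workload in subnets tied to different availability zones is what allows one zone to fail without taking the whole application down.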

AWS Reliability and Compliance

AWS has a lot of the basic regulatory, legal, and security groundwork covered before you even launch your first service.

AWS has invested significant planning and funds into resources and expertise relating to infrastructure administration. Its heavily protected and secretive data centers, layers of redundancy, and carefully developed best-practice protocols would be difficult or even impossible for a regular enterprise to replicate.

Where applicable, resources on the AWS platform are compliant with dozens of national and international standards, frameworks, and certifications, including ISO 9001, FedRAMP, NIST, and GDPR. (See https://aws.amazon.com/compliance/programs/ for more information.)

The AWS Shared Responsibility Model

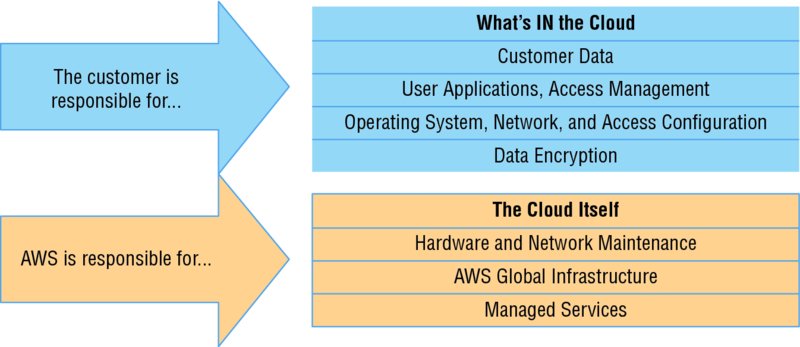

Of course, those guarantees cover only the underlying AWS platform. The way you decide to use AWS resources is your business—and therefore your responsibility. So, it’s important to be familiar with the AWS Shared Responsibility Model.

AWS guarantees the secure and uninterrupted operation of its “cloud.” That means its physical servers, storage devices, networking infrastructure, and managed services. AWS customers, as illustrated in Figure 1.3, are responsible for whatever happens within that cloud. This covers the security and operation of installed operating systems, client-side data, the movement of data across networks, end-user authentication and access, and customer data.

Figure 1.3 The AWS Shared Responsibility Model

The AWS Service Level Agreement

By “guarantee,” AWS doesn’t mean that service disruptions or security breaches will never occur. Drives may stop spinning, major electricity systems may fail, and natural disasters may happen. But when something does go wrong, AWS will provide service credits to reimburse customers for their direct losses whenever uptimes fall below a defined threshold. Of course, that won’t help you recover customer confidence or lost business.

The exact percentage of the guarantee will differ according to service. The service level agreement (SLA) rate for AWS EC2, for instance, is set to 99.99 percent—meaning that you can expect your EC2 instances, ECS containers, and EBS storage devices to be available for all but around four minutes of each month.
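The "around four minutes" figure follows directly from the percentage. A short sketch of the arithmetic, assuming a 30-day month (the function name is hypothetical):

```python
def allowed_downtime_minutes(sla_percent: float, days_in_month: int = 30) -> float:
    """Minutes per month a service can be unavailable while still meeting its SLA."""
    total_minutes = days_in_month * 24 * 60  # 43,200 minutes in a 30-day month
    return total_minutes * (1 - sla_percent / 100)


# A 99.99 percent SLA leaves roughly 4.3 minutes of permitted downtime per month.
print(round(allowed_downtime_minutes(99.99), 2))
```

Each additional "nine" cuts the permitted downtime by a factor of ten, so 99.9 percent would allow about 43 minutes instead.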

The important thing to remember is that it’s not if things will fail but when. Build your applications to be geographically dispersed and fault tolerant so that when things do break, your users will barely notice.
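A back-of-the-envelope sketch shows why redundancy helps. It assumes that the copies fail independently of one another, which is a simplification that real deployments only approximate; the availability figures are illustrative.

```python
def combined_availability(single: float, copies: int) -> float:
    """Probability that at least one of `copies` independent replicas is up."""
    return 1 - (1 - single) ** copies


# Two replicas that are each available 99 percent of the time.
print(round(combined_availability(0.99, 2), 6))
```

Under that independence assumption, the application is down only when every replica is down at once, which is why spreading copies across separate locations matters.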

Working with AWS

Whatever AWS services you choose to run, you’ll need a way to manage them all. The browser-based management console is an excellent way to introduce yourself to a service’s features and to how it will perform in the real world. There are few AWS administration tasks that you can’t do from the console, which provides plenty of useful visualizations and helpful documentation. But as you become more familiar with the way things work, and especially as your AWS deployments become more complex, you’ll probably find yourself getting more of your serious work done away from the console.

The AWS CLI

The AWS Command Line Interface (CLI) lets you run complex AWS operations from your local command line. Once you get the hang of how it works, you’ll discover that it can make things much simpler and more efficient.

As an example, suppose you need to launch a half-dozen EC2 instances to make up a microservices environment. Each instance is meant to play a separate role and therefore will require a subtly different provisioning process. Clicking through window after window to launch the instances from the console can quickly become tedious and time-consuming, especially if you find yourself repeating the task every few days. But the whole process can alternatively be incorporated into a simple script that you can run from your local terminal shell or PowerShell interface using the AWS CLI.
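A script along these lines might generate one launch command per role. This is a minimal sketch: the role names, instance types, and image ID are placeholders invented for the example, and the commands are printed rather than executed so nothing is launched by accident.

```python
import shlex

# Hypothetical roles and instance types for the microservices environment.
ROLES = {"web": "t3.micro", "api": "t3.small", "worker": "t3.medium"}
AMI_ID = "ami-0123456789abcdef0"  # placeholder, not a real image ID


def launch_command(role: str, instance_type: str, ami_id: str = AMI_ID) -> list[str]:
    """Return the argument list for one `aws ec2 run-instances` call."""
    tags = f"ResourceType=instance,Tags=[{{Key=Role,Value={role}}}]"
    return [
        "aws", "ec2", "run-instances",
        "--image-id", ami_id,
        "--instance-type", instance_type,
        "--count", "1",
        "--tag-specifications", tags,
    ]


for role, instance_type in ROLES.items():
    # Printed only; passing the list to subprocess.run would launch for real.
    print(shlex.join(launch_command(role, instance_type)))
```

Keeping the per-role differences in a single dictionary means the whole environment can be recreated, or changed, by editing one place.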

Installing and configuring the AWS CLI on Linux, Windows, or macOS machines is not hard at all, but the details might change depending on your platform. For the most up-to-date instructions, see https://docs.aws.amazon.com/cli/latest/userguide/installing.html.

AWS SDKs

If you want to incorporate access to your AWS resources into your application code, you’ll need to use an AWS software development kit (SDK) for the language you’re working with. AWS currently offers SDKs for nine languages including Java, .NET, and Python, and a number of mobile SDKs that include Android and iOS. There are also toolkits available for Eclipse, Visual Studio, and VSTS.

You can see a full overview of AWS developer tools at https://aws.amazon.com/tools/.

Technical Support and Online Resources

Things won’t always go smoothly for you—on AWS just as everywhere else in your life. Sooner or later, you’ll need some kind of technical or account support. There’s a variety of types of support, and you should understand what’s available.

One of the first things you’ll be asked to decide when you create a new AWS account is which support plan you’d like. Your business needs and budget will determine the way you answer this question.

Support Plans

The Basic plan is free with every account and gives you access to customer service, along with documentation, white papers, and the support forum. Customer service covers billing and account support issues.

The Developer plan starts at $29/month and adds access for one account holder to a Cloud Support associate along with limited general guidance and “system impaired” response.

For $100/month (and up), the Business plan will deliver faster guaranteed response times to unlimited users for help with “impaired” systems, personal guidance and troubleshooting, and a support API.

Finally, Enterprise support plans cover all of the other features, plus direct access to AWS solutions architects for operational and design reviews, your own technical account manager, and something called a support concierge. For complex, mission-critical deployments, those benefits can make a big difference. But they’ll cost you at least $15,000 each month.

You can read more about AWS support plans at https://aws.amazon.com/premiumsupport/compare-plans/.

Other Support Resources

There’s plenty of self-serve support available outside of the official support plans.

- AWS community help forums are open to anyone with a valid AWS account (https://forums.aws.amazon.com).

- Extensive and well-maintained AWS documentation is available at https://aws.amazon.com/documentation/.

- The AWS Well-Architected page (https://aws.amazon.com/architecture/well-architected/) is a hub that links to some valuable white papers and documentation addressing best practices for cloud deployment design.

Summary

Cloud computing is built on the ability to efficiently divide physical resources into smaller but flexible virtual units. Those units can be “rented” by businesses on a pay-as-you-go basis and used to satisfy just about any networked application and/or workflow’s needs in an affordable, scalable, and elastic way.

Amazon Web Services provides reliable and secure resources that are replicated and globally distributed across a growing number of regions and availability zones. AWS infrastructure is designed to be compliant with best-practice and regulatory standards—although the Shared Responsibility Model leaves you in charge of what you place within the cloud.

The growing family of AWS services covers just about any digital needs you can imagine, with core services addressing compute, networking, database, storage, security, and application management and integration needs.

You manage your AWS resources from the management console, with the AWS CLI, or through code generated using an AWS SDK.

Technical and account support is available through support plans and through documentation and help forums. AWS also makes white papers and user and developer guides available for free as Kindle books. You can access them at this address:

https://www.amazon.com/default/e/B007R6MVQ6/ref=dp_byline_cont_ebooks_1?redirectedFromKindleDbs=true

Exam Essentials

Understand AWS platform architecture. AWS divides its servers and storage devices into globally distributed regions and, within regions, into availability zones. These divisions permit replication to enhance availability but also permit process and resource isolation for security and compliance purposes. You should design your own deployments in ways that take advantage of those features.

Understand how to use AWS administration tools. While you will use your browser to access the AWS administration console at least from time to time, most of your serious work will probably happen through the AWS CLI and, from within your application code, through an AWS SDK.

Understand how to choose a support plan. Understanding which support plan level is right for a particular customer is an important element in building a successful deployment. Become familiar with the various options.

Exercise 1.1

Use the AWS CLI

Install (if necessary) and configure the AWS CLI on your local system and demonstrate it’s running properly by listing the buckets that currently exist in your account. For extra marks, create an S3 bucket and then copy a simple file or document from your machine to your new bucket. From the browser console, confirm that the file reached its target.

To get you started, here are some basic CLI commands:

aws s3 ls

aws s3 mb s3://<bucketname>

aws s3 cp /path/to/file.txt s3://<bucketname>

Review Questions

Your developers want to run fully provisioned EC2 instances to support their application code deployments but prefer not to have to worry about manually configuring and launching the necessary infrastructure. Which of the following should they use?

- AWS Lambda

- AWS Elastic Beanstalk

- Amazon EC2 Auto Scaling

- Amazon Route 53

Which service would you use to most effectively reduce the latency your end users experience when accessing your application resources over the Internet?

- Amazon CloudFront

- Amazon Route 53

- Elastic Load Balancing

- Amazon Glacier

Which of the following is the best use-case scenario for Elastic Block Store?

- You need a cheap and reliable place to store files your application can access.

- You need a safe place to store backup archives from your local servers.

- You need a source for on-demand compute cycles to meet fluctuating demand for your application.

- You need persistent storage for the file system run by your EC2 instance.

Which of the following is the best tool to control access to your AWS services and administration console?

- AWS Identity and Access Management (IAM)

- Key Management Service (KMS)

- AWS Directory Service

- Simple WorkFlow (SWF)

For data workloads requiring more speed and flexibility than a closely defined structure offers, which service should you choose?

- Relational Database Service (RDS)

- Amazon Aurora

- Amazon DynamoDB

- Key Management Service (KMS)

Which of the following is the correct endpoint address for an EC2 instance running in the AWS Ireland region?

- compute.eu-central-1.amazonaws.com

- ec2.eu-central-1.amazonaws.com

- elasticcomputecloud.eu-west-2.amazonaws.com

- ec2.eu-west-1.amazonaws.com

What does the term availability zone refer to in AWS documentation?

- One or more isolated physical data centers within an AWS region

- All the hardware resources within a single region

- A single network subnet used by resources within a single region

- A single isolated server room within a data center

Which AWS tool lets you organize your EC2 instances and configure their network connectivity and access control?

- Load Balancing

- Amazon Virtual Private Cloud (VPC)

- Amazon CloudFront

- AWS endpoints

You want to be sure that the application you’re building using EC2 and S3 resources will be reliable enough to meet the regulatory standards required within your industry. What should you check?

- Historical uptime log records

- The AWS Program Compliance Tool

- The AWS service level agreement (SLA)

- The AWS Shared Responsibility Model

Which of the following would you use to administrate your AWS infrastructure via your local command line or shell scripts?

- AWS Config

- AWS CLI

- AWS SDK

- The AWS Console

Your company needs direct access to AWS support for both development and IT team leaders. Which support plan should you purchase?

- Business

- Developer

- Basic

- Enterprise