On April 24, 2012, Google introduced its Penguin update, a change to its algorithm that attempts to address websites with many low-quality links. These low-quality links include sponsored links, links from unrelated websites, and links whose anchor text repeats the same keywords. In essence, the Penguin update scrutinizes websites’ link profiles. That is a laudable aim, but the same update also makes negative SEO (it’s as bad as it sounds!) a much less challenging exercise for the unscrupulous.

What is negative SEO?

Negative SEO is the use of underhanded methods to make a website’s ranking decline. Someone, usually a competitor, makes it seem as if your perfectly legitimate site is in violation of Google’s quality guidelines. They do this by creating low-quality links pointing to your site, duplicating your site’s content, or hacking into your site and inserting malicious code. Negative SEO is a “black hat” technique that every site owner should be aware of.

The main techniques for negative SEO are link spamming, content manipulation, and site intrusions. If someone is bent on link spamming your site, they’ll set up a significant number of low-quality links and point them to you. The set-up makes it very likely that Google will notice the unnatural links and send out a spam alert that’s certain to kill your ranking. On the other hand, if the method of choice is content manipulation, then your site’s content will be copied again and again until Google punishes you for possessing duplicate content. Come again? Yes, even though you didn’t copy your own content, if others copy it, even without your permission, your site may be deemed in violation of Google’s rule prohibiting duplicate content. Intrusion, lastly, refers to hacking or malware introduction. All these methods have one goal: to demote your site. Let’s look at each of these techniques in more detail.

Link Spamming

The Penguin algorithm checks sites’ ratio of low-quality links to high-quality ones; if the ratio is unfavorable, your site gets penalized. It’s important to keep in mind that Penguin is not run in real time. That means that if you get caught in its wake, even getting rid of the spam immediately is no guarantee that your ranking will recover quickly; it could take weeks, in fact. To tip a site’s ratio of good to bad links, an attacker must produce hundreds of low-quality links. That is not a light undertaking by any means, but it is not impossible either.

Here are some examples of what Google may consider low-quality links:

- Links from sites with too many outgoing links

- Links from low-quality blog networks

- Links from sites with too many consecutive links (like sponsored links)

- Too high a number of sitewide links

- Too many links from sites with low Google PageRank

- Too many links from sites with related IP addresses

- The use of a certain keyword too many times in a link anchor text

- Links from sites with a subject that is irrelevant to your own site

- Links from link exchange-type pages

- Links from low-quality directories

- Links from footers

- Links from blog and forum comments

The best advice is to keep a healthy and robust backlink profile and to stay away from manipulative link-building. Ideally, your site will link to quality sites, have non-repetitive anchor text, and have links that are diverse. Periodically reviewing your link profile is also good practice; it will give you the opportunity to identify and remove spam links. Since Yahoo’s Site Explorer went to the dustbin of internet history and Google provides little good backlink data, third-party tools are your best bet. For backlink reviews, try SEOMoz’s OpenSiteExplorer.com and Majestic SEO; both have free versions and are highly recommended. These tools will only show you your backlinks. To analyze what types of links you have, either do it manually or export the backlink data to a CSV file and load it into Link Detective, which breaks the links down by type, such as blog comment links and footer links.
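If you’d rather do part of the manual review yourself, a short script can at least show how repetitive your anchor text is. The sketch below is only an illustration: the column names `source_url` and `anchor_text`, the sample rows, and the 30% threshold are all assumptions made for the example, not the export format of any particular tool or a figure published by Google.

```python
import csv
import io
from collections import Counter


def anchor_text_shares(csv_text, anchor_column="anchor_text"):
    """Return (anchor, share) pairs, most common anchor first.

    The column name is a hypothetical default; adjust it to match
    whatever your backlink tool actually exports.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    counts = Counter(
        row[anchor_column].strip().lower()
        for row in reader
        if row.get(anchor_column)
    )
    total = sum(counts.values())
    if total == 0:
        return []
    return [(anchor, n / total) for anchor, n in counts.most_common()]


def flag_repeated_anchors(shares, threshold=0.3):
    """List anchors that account for more than `threshold` of all links.

    The 0.3 default is an arbitrary illustration, not a known Google limit.
    """
    return [anchor for anchor, share in shares if share > threshold]


# Made-up rows standing in for a real backlink export.
SAMPLE = """source_url,anchor_text
http://blog-a.example/post,cheap widgets
http://blog-b.example/post,cheap widgets
http://forum.example/thread,Cheap Widgets
http://news.example/story,Example Widgets Inc.
http://dir.example/listing,example.com
"""

shares = anchor_text_shares(SAMPLE)
suspicious = flag_repeated_anchors(shares)
for anchor, share in shares:
    print(f"{share:.0%}  {anchor}")
print("Worth a closer look:", suspicious)
```

In the made-up sample, one keyword phrase accounts for three of the five links, so it is the only anchor flagged. A sudden jump in a single anchor’s share between two reviews is the kind of change worth investigating by hand.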

Site owners who feel they were unfairly affected by the Penguin update can contact Google to request reinclusion.

Content Manipulation

When duplicate content is present on the web, Google’s algorithm takes note, and, usually, reserves a good ranking for a single version while dispensing poorer rankings to the rest. The site where the content was originally posted can be expected to get the better ranking — but it doesn’t always play out that way.

Such a site — one whose original content is duplicated by others — might get a ranking that’s lower than that of its copycats if Google indexes the duplicate content before that of the original site. Similarly, sites whose PageRank is higher might also be favored by Google’s algorithm. Beware of brazen reposting of your content; the more it’s copied, the more likely your site is to be penalized.

Content copying is often done by scraping software: automated software that copies your content and posts it on other websites. Spammers use scraping software to create thousands of pages quickly and gain ranking fast. They often copy content from high-ranking websites in hopes that their sites will also rank high.

In early 2011, Google released the Panda update. Panda is similar to Penguin in that it filters out spammy pages, but instead of targeting low-quality links, it targets low-quality content (i.e., scraped and duplicate content). It also targets keyword stuffing, meaning pages that repeat their keywords too many times.

How do you protect your site against this type of negative SEO? By frequently checking for duplicate content. One way to check for it is to use Copyscape.com or a similar service. Lifting a unique sentence from your site and searching for it on Google (don’t forget to use quotation marks) also works. For the latter, choose a sentence that’s 5 to 15 words long. A copied sentence or two usually poses no risk, but if entire sections of content have been duplicated, you have grave cause for concern.
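Picking sentences for the quoted search can be partly automated. The following is a minimal sketch, assuming your page text is already available as plain text; the function name, the sample text, and the simple punctuation-based sentence splitting are choices made for this illustration, and the script only prepares the queries rather than running any search.

```python
import re


def candidate_queries(text, min_words=5, max_words=15):
    """Return sentences of min_words..max_words words, wrapped in quotes.

    Sentences are split naively on ., ! or ? followed by whitespace,
    which is good enough for a quick check but not for every text.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    queries = []
    for sentence in sentences:
        # Drop trailing punctuation and any inner double quotes so the
        # result is a clean exact-match phrase.
        cleaned = sentence.strip().rstrip(".!?").replace('"', "")
        if min_words <= len(cleaned.split()) <= max_words:
            queries.append(f'"{cleaned}"')
    return queries


# Made-up page text for the example.
page_text = (
    "Our widgets ship worldwide. "
    "Every hand-built widget is tested for forty hours before it leaves the workshop. "
    "Contact us."
)

queries = candidate_queries(page_text)
for query in queries:
    print(query)
```

Only the middle sentence falls inside the 5 to 15 word range, so it is the only one returned. Paste each quoted phrase into the search box and see whether any site other than your own shows up.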

If your duplicate content check turns up just a few sites, contact each of those site owners and request that the copied material be removed, or send a cease-and-desist letter. If these tactics prove unproductive, filing a DMCA (Digital Millennium Copyright Act) complaint is in order.

Google has a reporting tool that can help you deal with web search problems. You can also resort to it if problematic material surfaces in places like Google Plus pages or YouTube. However, when there are too many duplicates to chase down, the most straightforward way to overcome their ill effects is a thorough content rewrite.

One last word to the wise: keep an eye on the content “borrowing” of affiliates. If an affiliate program is part of your business strategy, making sure that pre-designated, pertinent content about your business is available for their use can be a winning solution. That content should be related to your business but be separate and distinct from what appears on your own website. The overarching idea, here, is to update your content regularly in order to avoid being a victim of negative SEO. Updating your content monthly and staying close to Google’s good-quality content guidelines will keep you on the right track.

Site Intrusion

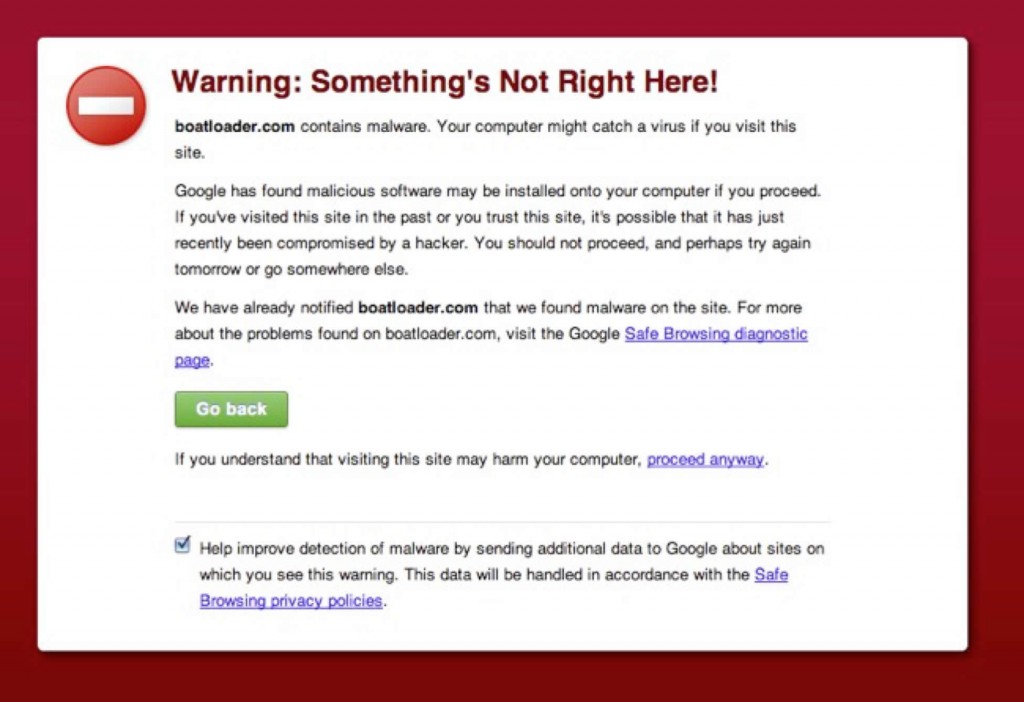

Site intrusion means that your web server has been hacked or that malware has been planted on it. If hackers insert malicious code into your HTML source code, your site can be delisted by Google, which has no trouble detecting malicious code. You probably would not know this code was inserted into your site until Google alerts you via email in your Webmaster Tools account, or until someone tells you that they saw a notice from Google about your site containing malicious code.

To avoid this scenario, keep your web server up to date by installing patches as they’re released, and watch out for foreign code. Free tools are available online that can help you with all this. After you’ve purged the malicious code, it can take Google days or weeks to reindex your site; how long it takes will depend on your site’s popularity. To speed things up, you can use Lindexed, which uses several indexing techniques.
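One simple way to watch for foreign code is to record a hash of every file on your site while it is known to be clean and compare against that record later. The sketch below assumes you can read the site’s files from disk; the function names and the throwaway demo directory are made up for the example, and this is a basic integrity check, not a malware scanner.

```python
import hashlib
import os
import tempfile


def snapshot(root):
    """Map each file path (relative to root) to the SHA-256 of its contents."""
    hashes = {}
    for folder, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(folder, name)
            with open(path, "rb") as handle:
                digest = hashlib.sha256(handle.read()).hexdigest()
            hashes[os.path.relpath(path, root)] = digest
    return hashes


def diff_snapshots(baseline, current):
    """Return sorted lists of added, removed, and modified relative paths."""
    added = sorted(set(current) - set(baseline))
    removed = sorted(set(baseline) - set(current))
    modified = sorted(
        path
        for path in set(baseline) & set(current)
        if baseline[path] != current[path]
    )
    return added, removed, modified


# Demonstration on a temporary directory standing in for a site root.
with tempfile.TemporaryDirectory() as site_root:
    index_path = os.path.join(site_root, "index.html")
    with open(index_path, "w") as f:
        f.write("<html><body>Hello</body></html>")
    baseline = snapshot(site_root)  # taken while the site is known to be clean

    # Simulate an intrusion: injected markup plus an unexpected new file.
    with open(index_path, "a") as f:
        f.write('<iframe src="http://malware.example/"></iframe>')
    with open(os.path.join(site_root, "shell.php"), "w") as f:
        f.write("<?php /* unexpected file */ ?>")

    added, removed, modified = diff_snapshots(baseline, snapshot(site_root))

print("Added:", added)
print("Removed:", removed)
print("Modified:", modified)
```

Legitimate updates change files too, so refresh the baseline after every deliberate deployment and store it somewhere the web server cannot write to.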

If you don’t have a Webmaster Tools account, make sure to sign up and enroll your site, since Google may use it to communicate site removals.

Pierre Zarokian is the CEO of Submit Express, a leading search engine marketing firm since 1998 which offers social media marketing services via iClimber. Submit Express was ranked as the #1 SEO Firm by Website Magazine in 2009. They are also a three-time Inc5000 and two-time FAST 500 company. Pierre started his career in search marketing in the mid-90s. He has written many articles on the subject of SEO and social media and often speaks at industry trade shows.